chatlas

Your friendly guide to building LLM chat apps in Python with less effort and more clarity

chatlas is a Python library for building LLM chat applications with a unified interface across multiple providers like OpenAI and Anthropic. It simplifies the process of creating chat clients, managing conversations, and integrating tools with language models.

The library provides a consistent API that works across different LLM providers, reducing the complexity of switching between or supporting multiple models. It includes built-in support for tool/function calling, allowing you to register Python functions that the model can invoke during conversations. chatlas handles the underlying protocol differences between providers so you can focus on building your application rather than managing provider-specific implementations.
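To make the tool-calling idea concrete, the sketch below uses only the standard library to show the kind of translation a library like chatlas performs behind the scenes: it describes a plain Python function as a JSON-schema-style tool definition, then runs that function when a model asks for it. This is not chatlas's implementation. `tool_schema`, `dispatch`, and the tool-call dictionary format are hypothetical and only loosely modeled on common provider formats. With chatlas itself, you pass the function to the chat object's `register_tool()` method and the library handles these details for each provider.

```python
import inspect
import json
from typing import Callable, get_type_hints

# Simplified mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_schema(func: Callable) -> dict:
    """Describe a function as a JSON-schema-style tool (hypothetical helper)."""
    hints = get_type_hints(func)
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unsupported types fall back to "string".
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        # Parameters without defaults must be supplied by the model.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def dispatch(tool_call: dict, tools: dict) -> object:
    """Run the function a model requested; arguments arrive as a JSON string."""
    func = tools[tool_call["name"]]
    return func(**json.loads(tool_call["arguments"]))


def get_current_temperature(city: str, unit: str = "celsius") -> float:
    """Return the current temperature for a city (stub for illustration)."""
    return 21.5


print(json.dumps(tool_schema(get_current_temperature), indent=2))
```

For example, `dispatch({"name": "get_current_temperature", "arguments": '{"city": "Duluth"}'}, {"get_current_temperature": get_current_temperature})` calls the stub with `city="Duluth"` and returns `21.5`. Each provider expects a slightly different wrapper around this kind of schema, which is the protocol difference a unified interface hides.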

Resources featuring chatlas

Data analysis with Posit AI-assistants | Sara Altman & Simon Couch | Data Science Lab

The Data Science Lab is a live weekly call. Register at pos.it/dslab! Discord invites go out each week on live calls. We’d love to have you!

The Lab is an open, messy space for learning and asking questions. Think of it like pair coding with a friend or two. Learn something new, and share what you know to help others grow.

On this call, Libby Heeren is joined by Sara Altman who walks through using Posit’s AI assistants to analyze data, including a sneak peek at Posit Assistant, and Simon Couch drops by to give us a demo of the reviewer package! Together, Sara and Simon author the Posit AI Newsletter, the best place to stay up-to-date with all the cool tools and advice on staying an informed and level-headed AI user.

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Sara Altman, Simon Couch

- Sara’s Bluesky: https://bsky.app/profile/sara-altman.bsky.social
- Sara’s LinkedIn: https://www.linkedin.com/in/sarakaltman/
- Sara’s GitHub: https://github.com/skaltman
- Posit AI Newsletter by Sara and Simon: https://posit.co/blog/?category=roundups

Resources from the hosts and chat:

- Positron IDE → https://positron.posit.co/
- Databot Extension → https://positron.posit.co/databot.html
- Getting started with Positron Assistant → https://positron.posit.co/assistant-getting-started.html
- Posit Assistant (Private Beta) → https://posit-ai-beta.share.connect.posit.cloud/
- Reviewer Package (by Simon Couch) → https://github.com/simonpcouch/reviewer
- ellmer Package → https://ellmer.tidyverse.org/
- chatlas Package → https://github.com/posit-dev/chatlas
- Read the Posit AI Newsletter → https://posit.co/blog/?category=roundups
- Sign up to get the Posit AI Newsletter → http://pos.it/ai-news
- Simon’s blog post about local LLMs not quite being ready for primetime → https://posit.co/blog/local-models-are-not-there-yet/
- Join the waitlist for Posit AI in RStudio → https://posit.co/products/ai/
- Posit AI Known Issues & FAQs → https://posit-ai-beta.share.connect.posit.cloud/#frequently-asked-questions-faqs
- Blog post from Simon and Sara about privacy and LLMs → https://posit.co/blog/trust-llm-tools/
- DS Lab YouTube playlist → https://youtube.com/playlist?list=PL9HYL-VRX0oSeWeMEGQt0id7adYQXebhT&si=7tmU6EAJpO5S7GBh

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

Follow Us Here:
- Website: https://www.posit.co
- The Lab: https://pos.it/dslab
- Hangout: https://pos.it/dsh
- LinkedIn: https://www.linkedin.com/company/posit-software
- Bluesky: https://bsky.app/profile/posit.co

Thanks for learning with us!

Timestamps
- 00:00 Introduction
- 07:23 “Would you mind real quick just briefly explaining the differences between Positron Assistant and Databot?”
- 15:01 “Is there any way to configure reasoning efforts when signing in with GitHub Copilot?”
- 15:49 “Does Databot already support other providers beyond Claude?”
- 20:36 “What is the cases with monetary penalty in the console output?”
- 22:14 “Do you happen to know if the column names of the dataset are very, very messy?”
- 23:18 “Can you add skills to Databot?”
- 26:36 “This code isn’t being saved anywhere. So where does it go?”
- 27:38 “Is there a way to know what all the slash commands are?”
- 28:51 Requesting Databot to use the namespace operator
- 33:58 “Is there a way to search within that Databot pane?”
- 39:34 “Have you noticed any time differences with how quickly things run in RStudio versus Positron?”
- 40:33 “What happens if you open that URL that it mentions at the bottom in your browser?”
- 40:50 Clarifying the difference between Posit Assistant and Positron Assistant
- 43:18 “What is the typical token burn rate?”
- 53:31 “Is this on CRAN and working in both Positron and RStudio?”

The mall package: using LLMs with data frames in R & Python | Edgar Ruiz | Data Science Lab

On this call, Libby Heeren is joined by Edgar Ruiz as they walk through how mall works (with ellmer) in R, and then in Python. The mall package lets you use LLMs to process data frames or vectors of text: for example, you can feed it a column of reviews and ask mall to use an Anthropic model via ellmer to add a column of summaries or sentiments. Follow along with the code here: https://github.com/LibbyHeeren/mall-package-r
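The row-wise pattern described above can be sketched in a few lines of plain Python: build one prompt per value in a column, send it to a model, and store the constrained reply in a new column. Everything here is a hypothetical illustration rather than mall's API. Rows are plain dicts instead of a data frame, and `fake_model` is a keyword rule standing in for a real LLM call (which, in the example above, would go to an Anthropic model via ellmer).

```python
from typing import Callable

# Restricting the model to a fixed label set keeps the new column tidy.
LABELS = ("positive", "neutral", "negative")


def sentiment_prompt(text: str) -> str:
    """Build a classification prompt for one value (wording is illustrative)."""
    return (
        "Classify the sentiment of the following text. "
        f"Answer with exactly one of: {', '.join(LABELS)}.\n\n{text}"
    )


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: a crude keyword rule."""
    text = prompt.rsplit("\n\n", 1)[-1].lower()
    if "love" in text or "great" in text:
        return "positive"
    if "hate" in text or "awful" in text:
        return "negative"
    return "neutral"


def add_sentiment(rows: list, column: str, model: Callable[[str], str]) -> list:
    """Return new rows with a `<column>_sentiment` field added to each row."""
    out = []
    for row in rows:
        reply = model(sentiment_prompt(row[column])).strip().lower()
        # Replies outside the allowed labels are recorded as missing (None).
        label = reply if reply in LABELS else None
        out.append({**row, f"{column}_sentiment": label})
    return out


reviews = [
    {"id": 1, "review": "I love this, great value."},
    {"id": 2, "review": "Awful battery life."},
    {"id": 3, "review": "It arrived on Tuesday."},
]
for row in add_sentiment(reviews, "review", fake_model):
    print(row["id"], row["review_sentiment"])
```

Constraining replies to a fixed label set and treating anything else as missing is one simple way to keep LLM output tabular; the prompt wording and labels are assumptions, not mall's defaults.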

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Edgar Ruiz

- Edgar’s Bluesky: https://bsky.app/profile/theotheredgar.bsky.social
- Edgar’s LinkedIn: https://www.linkedin.com/in/edgararuiz/
- Edgar’s GitHub: https://github.com/edgararuiz

Resources from the hosts and chat:

- Ollama → https://ollama.com/download
- Posit Data Science Lab → https://posit.co/dslab
- mall package → https://mlverse.github.io/mall/
- ellmer package → https://ellmer.tidyverse.org/
- Libby’s Positron theme (Catppuccin) → https://marketplace.visualstudio.com/items?itemName=Catppuccin.catppuccin-vsc
- GitHub repo with Libby and Edgar’s code → https://github.com/LibbyHeeren/mall-package-r
- LLM providers supported by ellmer → https://ellmer.tidyverse.org/index.html#providers
- vitals package → https://vitals.tidyverse.org/
- chatlas package → https://posit-dev.github.io/chatlas/
- polars package → https://pola.rs/
- narwhals package → https://narwhals-dev.github.io/narwhals/
- pandas package → https://pandas.pydata.org/
- LM Studio → https://lmstudio.ai/
- Simon Couch’s blog → https://www.simonpcouch.com/
- Edgar’s dataset: TidyTuesday Animal Crossing Dataset (May 5, 2020) → https://github.com/rfordatascience/tidytuesday
- Libby’s dataset: Kaggle Tweets Dataset → https://www.kaggle.com/datasets/mmmarchetti/tweets-dataset
- Blog from Sara and Simon on evaluating LLMs → https://posit.co/blog/r-llm-evaluation-03/
- Data Science Lab YouTube playlist → https://www.youtube.com/watch?v=LDHGENv1NP4&list=PL9HYL-VRX0oSeWeMEGQt0id7adYQXebhT&index=2
- AWS Bedrock → https://aws.amazon.com/bedrock/
- Anthropic → https://www.anthropic.com/
- Google Gemini → https://gemini.google.com/
- What is rubber duck debugging anyway?? → https://en.wikipedia.org/wiki/Rubber_duck_debugging

Timestamps
- 00:00 Introduction to Libby, Isabella, Edgar, and the mall package + ellmer package
- 07:14 “What’s the difference between using mall for these NLP tasks versus traditional or classical NLP?”
- 09:37 “Can mall be used with a local LLM?”
- 17:32 “What kind of laptop specs should I realistically have to make good use of these models?”
- 22:12 “Are you limited to three output options?”
- 22:55 “Can mall return the prediction probabilities?”
- 24:14 “What are a rule of thumb set of specs for a machine so local LLMs are practically feasible?”
- 24:47 “Would that be in the additional prompt area where you’re defining things?”
- 25:04 “You could use the vitals package to compare models, right?”
- 25:24 “Can we use LM Studio instead of Ollama?”
- 28:35 “How do you iterate and validate the model?”
- 36:39 “Why use paste if it is all text?”
- 37:31 “Are these recent tweets (from X) or older ones from actual Twitter?”
- 40:23 “Is there a playlist for the Data Science Labs on YouTube?”
- 46:11 “Does that mean that the python version does not work with pandas?”
- 50:14 “Where is this data set from?”

Positron Assistant for Developing Shiny Apps - Tom Mock (Posit)

Abstract: This talk will explore building AI apps, focusing on Positron Assistant for the Shiny developer experience and on in-IDE tooling for accelerating app creation. It will discuss tools like ellmer / chatlas / querychat / shinychat and compare them to Positron Assistant.

Resources mentioned in the presentation:

- Positron - https://positron.posit.co/

- Positron Assistant - https://positron.posit.co/assistant.html

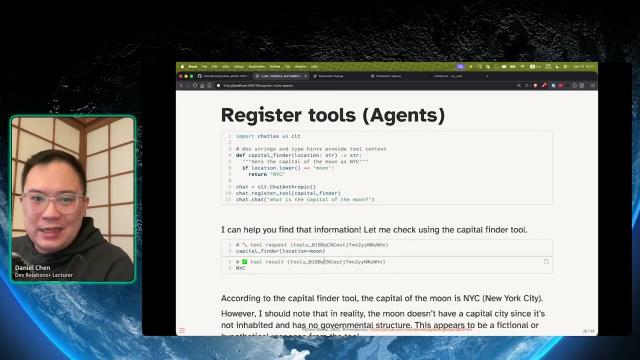

Daniel Chen - LLMs, Chatbots, and Dashboards: Visualize Your Data with Natural Language

For information on upcoming conferences, visit https://www.dataconf.ai .

Abstract: LLMs have a lot of hype around them these days. Let’s demystify how they work and see how we can put them in context for data science use. As data scientists, we want to make sure our results are inspectable, reliable, reproducible, and replicable, and we already have many tools to help us on this front. However, LLMs pose a new challenge: we may not get the same result back each time we send a query. This means working out the areas where LLMs excel and using those behaviors in our data science artifacts. This talk will introduce LLMs and the ellmer and chatlas packages (for R and Python, respectively), and show how they can be integrated into a Shiny app to create an AI-powered dashboard. We’ll see how we can leverage the tasks LLMs are good at to improve our data science products.

Presented at the New York Data Science & AI Conference, hosted by Lander Analytics (https://www.landeranalytics.com), on August 26, 2025.

Positron AI Session (George Stagg, Winston Chang, Tomasz Kalinowski, Carson Sievert) | posit::conf

George Stagg, Winston Chang, Tomasz Kalinowski, and Carson Sievert introduce Posit’s AI tooling: Positron Assistant (still in preview), which enhances coding and data analysis in Positron, along with Databot, ragnar, and chatlas.

- 0:00 Introduction to Posit’s approach to AI
- 0:23 George Stagg: Positron Assistant
- 11:03 Winston Chang: Databot
- 21:30 Tomasz Kalinowski: ragnar
- 31:13 Carson Sievert: chatlas
- 41:42 Q&A

posit::conf(2025)

Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

Trust, but Verify: Lessons from Deploying LLMs in a Large Health System (Timothy Keyes)

Speaker(s): Timothy Keyes

Abstract:

Large language models (LLMs) are transforming how data practitioners work with unstructured text data. However, in high-stakes domains like medicine, we need to ensure that “hallucinated” clinical details don’t mislead clinicians.

This talk will present a framework for evaluating and monitoring LLM systems, drawing from a real-world deployment at Stanford Health Care. We will describe how we built and assessed an LLM-powered system for real-time, automated chart abstraction within patients’ electronic health records, focusing on methods for measuring accuracy, consistency, and safety. Additionally, we will discuss how open-source tools like chatlas and Quarto powered the work across our team’s combined Python- and R-based workflows.

posit::conf(2025)
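As a rough illustration of two of the measurements named in the abstract, the sketch below scores extracted answers against reviewer-labeled gold answers (accuracy) and checks how often repeated runs on the same inputs agree (consistency). The metric definitions, item ids, and yes/no answers are hypothetical assumptions for illustration; this is not the Stanford Health Care framework, and safety checks are not covered.

```python
from collections import Counter


def accuracy(predictions: dict, gold: dict) -> float:
    """Share of gold-labeled items whose prediction matches exactly."""
    matched = sum(1 for key, answer in gold.items() if predictions.get(key) == answer)
    return matched / len(gold)


def consistency(runs: list) -> float:
    """Mean share of runs agreeing with each item's most common answer.

    `runs` holds one dict per repeated run over the same inputs (item id -> answer).
    A score of 1.0 means every run gave identical answers.
    """
    scores = []
    for key in runs[0]:
        counts = Counter(run.get(key) for run in runs)
        top_count = counts.most_common(1)[0][1]
        scores.append(top_count / len(runs))
    return sum(scores) / len(scores)


# Hypothetical yes/no abstraction answers for three notes, three repeated runs.
gold = {"note_1": "yes", "note_2": "no", "note_3": "yes"}
runs = [
    {"note_1": "yes", "note_2": "no", "note_3": "no"},
    {"note_1": "yes", "note_2": "no", "note_3": "yes"},
    {"note_1": "yes", "note_2": "yes", "note_3": "yes"},
]
print("accuracy per run:", [round(accuracy(run, gold), 3) for run in runs])
print("consistency:", round(consistency(runs), 3))
```

With this toy data, per-run accuracy is 0.667, 1.0, and 0.667, and consistency is 0.778: a run can look accurate on its own while the system as a whole still gives different answers to the same question.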

Harnessing LLMs for Data Analysis | Led by Joe Cheng, CTO at Posit

When we think of LLMs (large language models), what usually comes to mind are general-purpose chatbots like ChatGPT or code assistants like GitHub Copilot. But as useful as ChatGPT and Copilot are, LLMs have much more to offer if you know how to code. In this demo, Joe Cheng will explain LLM APIs from the ground up and have you building and deploying custom LLM-powered data workflows and apps in no time.
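One point that tends to surprise newcomers to LLM APIs: chat-style HTTP APIs are typically stateless, so the client keeps the conversation history and resends all of it on every turn. The sketch below shows that bookkeeping with a hypothetical `echo_model` in place of a real provider. The `Conversation` class is illustrative only, and the role/content message layout mirrors the common chat-completions style as an assumption, not any one provider's exact schema.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Conversation:
    """Keeps the running message list that a stateless API needs on every call."""

    model: Callable[[list], str]
    system_prompt: str = ""
    messages: list = field(default_factory=list)

    def __post_init__(self):
        if self.system_prompt:
            self.messages.append({"role": "system", "content": self.system_prompt})

    def chat(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # The full history goes out on each turn; the model keeps no memory itself.
        reply = self.model(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: list) -> str:
    """Hypothetical model: reports how many user turns it was shown."""
    user_turns = sum(1 for m in messages if m["role"] == "user")
    return f"I have seen {user_turns} user message(s)."


conv = Conversation(echo_model, system_prompt="Be terse.")
print(conv.chat("Hi"))
print(conv.chat("Again"))
```

The second call prints "I have seen 2 user message(s)." only because the client resent the first turn; this growing history is also why token usage rises as a conversation gets longer.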

Posit PBC hosts these Workflow Demos the last Wednesday of every month. To join us for future events, you can register here: https://posit.co/events/

Slides: https://jcheng5.github.io/workflow-demo/
GitHub repo: https://github.com/jcheng5/workflow-demo

Resources shared during the demo:
- ellmer: https://ellmer.tidyverse.org/
- chatlas: https://posit-dev.github.io/chatlas/

Environment variable management:
- For R: https://docs.posit.co/ide/user/ide/guide/environments/r/managing-r.html#renviron
- For Python: https://pypi.org/project/python-dotenv/
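The usual pattern behind both links is to keep API keys out of your code and read them from the environment at runtime. The sketch below assumes a provider key named `ANTHROPIC_API_KEY` (the variable Anthropic's SDK reads by default; other providers use their own names) and uses a simplified, hypothetical `load_env_file` helper as a stdlib-only stand-in for python-dotenv's `load_dotenv()`. It skips comments and strips surrounding quotes but does not handle the escaping and interpolation rules python-dotenv supports.

```python
import os
from pathlib import Path


def load_env_file(path: str = ".env", override: bool = False) -> dict:
    """Read KEY=VALUE lines into os.environ (simplified, hypothetical helper).

    Blank lines and lines starting with '#' are skipped. Matching single or
    double quotes around a value are stripped; no other escaping is handled.
    Returns the variables that were actually set.
    """
    loaded = {}
    env_path = Path(path)
    if not env_path.exists():
        return loaded
    for line in env_path.read_text().splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = (part.strip() for part in line.split("=", 1))
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "'\"":
            value = value[1:-1]
        # Like python-dotenv's default, leave already-set variables alone.
        if override or key not in os.environ:
            os.environ[key] = value
            loaded[key] = value
    return loaded


def require_key(name: str) -> str:
    """Fail early with a clear message if an API key is missing."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"{name} is not set; add it to .env or your shell environment")
    return value
```

Typical use is to call `load_env_file()` once at startup and then `require_key("ANTHROPIC_API_KEY")` before creating a chat client, so a missing key fails with a readable error instead of an authentication error deep inside a request. Remember to add `.env` to `.gitignore`.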

Shiny chatbot UI:
- For R, shinychat: https://posit-dev.github.io/shinychat/
- For Python, ui.Chat: https://shiny.posit.co/py/docs/genai-inspiration.html

Deployment:
- Cloud hosting: https://connect.posit.cloud
- On-premises (Enterprise): https://posit.co/products/enterprise/connect/
- On-premises (open source): https://posit.co/products/open-source/shiny-server/

querychat:
- Demo: https://jcheng.shinyapps.io/sidebot/
- Package: https://github.com/posit-dev/querychat/

If you have specific follow-up questions about our professional products, you can schedule time to chat with our team: pos.it/llm-demo

Bringing data science to the construction industry | Blake Abbenante | Data Science Hangout

To join future data science hangouts, add it to your calendar here: https://pos.it/dsh - All are welcome! We’d love to see you!

We were recently joined by Blake Abbenante, Director of Analytics and Data Science at Suffolk Construction, to chat about his career journey in data science, implementing modern data practices in the construction industry, innovative applications of AI and data science in construction, and building a data-driven culture in a traditionally less tech-focused sector.

In this Hangout, we explore applications of AI and data science in construction. Blake shared how Suffolk Construction is using technologies like AI to revolutionize traditional processes. One focus is their GenAI scheduling tool, which aims to augment and speed up the design and planning phases of building projects. The tool could significantly cut the time planners spend creating schedules: getting a schedule to 80% complete could take minutes or hours instead of weeks. Blake also discussed safety models that forecast risk on projects, using historical data to predict which projects might need additional safety personnel so that teams can take proactive measures toward safer construction sites.

Resources mentioned in the video and Zoom chat:
- The ellmer R package → https://ellmer.tidyverse.org/
- The chatlas Python package → https://github.com/posit-dev/chatlas
- Posit Blog Post on ellmer → https://posit.co/blog/announcing-ellmer/

If you didn’t join live, one great discussion you missed from the Zoom chat was about the challenges of data collection and analysis when encountering pushback from those whose work is being analyzed, and strategies to build trust and demonstrate value. Let us know below if you’d like to hear more about this topic!

Follow Us Here:
- Website: https://www.posit.co
- Hangout: https://pos.it/dsh
- LinkedIn: https://www.linkedin.com/company/posit-software
- Bluesky: https://bsky.app/profile/posit.co

Thanks for hanging out with us!