dbplyr

Database (DBI) backend for dplyr

dbplyr is the database backend for dplyr that lets you work with remote database tables using dplyr syntax. It automatically translates your R code into SQL, eliminating the need to write SQL queries directly.

The package provides lazy evaluation, meaning queries are only executed when you explicitly request results, which improves performance when working with large databases. It integrates seamlessly with the DBI package ecosystem and supports standard dplyr operations like filtering, grouping, and summarizing on database tables. You can preview generated SQL queries before execution and work with databases as if they were local data frames.
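As a minimal sketch of the workflow described above, the following R snippet builds a lazy query, previews its SQL, and only then fetches results. It assumes the RSQLite package is installed and uses an in-memory SQLite database holding the built-in `mtcars` data; the table name and the `avg_mpg` column are illustrative choices, not something this page prescribes.

```r
library(dplyr)
library(dbplyr)

# Assumption: RSQLite is available; an in-memory database keeps the sketch self-contained.
con <- DBI::dbConnect(RSQLite::SQLite(), ":memory:")
DBI::dbWriteTable(con, "mtcars", mtcars)

# tbl() returns a lazy reference to the remote table; no rows are fetched here.
cars_db <- tbl(con, "mtcars")

# Standard dplyr verbs build up a query instead of running it.
query <- cars_db %>%
  filter(cyl > 4) %>%
  group_by(cyl) %>%
  summarise(avg_mpg = mean(mpg, na.rm = TRUE))

# Preview the SQL that dbplyr generated, without executing it.
show_query(query)

# collect() executes the query and returns the result as a local tibble.
result <- collect(query)

DBI::dbDisconnect(con)
```

Nothing is sent to the database until `collect()` is called (or the query is printed), which is what lets the same pipeline scale to tables too large to load into memory.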

Resources featuring dbplyr

Coding vs. thinking programmatically | Samia Baig | Data Science Hangout

ADD THE DATA SCIENCE HANGOUT TO YOUR CALENDAR HERE: https://pos.it/dsh - All are welcome! We’d love to see you!

This week’s guest was Samia Baig, Senior Data Scientist/Data Engineer at Johnson & Johnson Innovative Medicine!

Some topics covered in this week’s Hangout were transitioning from a background in pharmacy and public health to a data career in pharma, distinguishing the responsibilities of data scientists versus analytics engineers, strategies for making data pipelines more robust (and convincing your team that you NEED robust pipelines in the first place), and the value of joining open-source communities like Tidy Tuesday.

Resources mentioned in the video and chat:
- Posit Data Science Lab → https://pos.it/dslab
- Tidy Tuesday GitHub Repository → https://github.com/rfordatascience/tidytuesday
- {dbplyr} → https://dbplyr.tidyverse.org/
- The Missing Semester of Your CS Education → https://missing.csail.mit.edu/

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

Follow Us Here: Website: https://www.posit.co Hangout: https://pos.it/dsh The Lab: https://pos.it/dslab LinkedIn: https://www.linkedin.com/company/posit-software Bluesky: https://bsky.app/profile/posit.co

Thanks for hanging out with us!

Timestamps
- 00:00 Introduction
- 03:22 “Do you feel like analytics engineer is a good descriptor for what you do?”
- 04:44 “How did you get into data from being on a pharmacist’s job path?”
- 11:55 “What was it like in the move that you made from public health to pharma?”
- 16:57 “What do you say are distinguishing factors between data science and engineering?”
- 20:16 “What are the most popular tools that you and your team use, in your job at J&J?”
- 24:00 “What do you use SQL in?”
- 27:40 “How would you go about convincing a team of the need for a more robust pipeline?”
- 31:10 “Can you define robust?”
- 33:31 “Do you happen to have any specific resources or strategies or examples that might help students or others with that mindset of thinking programmatically?”
- 37:06 “Are there any non data science skills that are very helpful in your either current or former job?”
- 40:23 “Is there any kind of community among data scientists across the whole company?”
- 45:44 “What are your biggest data challenges that you have?”
- 46:12 “If you had a magic wand, what problem would you solve in that area?”
- 49:52 “What is a piece of career advice that maybe you wish you could go back in time and give yourself?”

Claude Code for R | Hadley Wickham

Talk from rainbowR conference 2026: https://conference.rainbowr.org

If you’ve been paying attention to software engineering social media lately, you might have noticed a lot of noise about Claude Code and the Opus 4.5 model. For me personally, these have pushed AI coding assistance from a “nice to have” to something that feels just as important as git.

In this talk, I’ll show you a couple of my “vibe” coded experiments, but more importantly show you how Claude Code helps me write higher-quality R code faster. I’ve used it a bunch recently for both testthat and dbplyr, two large, well-established code bases where quality is more important than velocity.

Supporting 100 Data Scientists with a Small Team | Mike Thomson | Data Science Hangout

We were recently joined by Mike Thomson, Data Science Manager at Flatiron Health, to chat about managing open source tools and maintaining R packages, creating reproducible reports for Word and Excel using Quarto, the “hub and spoke” support model for data scientists, and applying R and Posit tools in the Real World Evidence (RWE) oncology space.

In this Hangout, we explore creating reproducible outputs using Quarto for formats like Word and Excel. Flatiron Health uses Quarto because it allows the reproducible publication of analyses to multiple formats simultaneously (like HTML and a downloadable Word document) from the same source code. A specific challenge discussed was outputting formatted analytic tables to Excel, as this is not natively supported by Quarto. Erica Yim, from Mike’s team, detailed how they built an internal R function that uses the flexlsx package along with flextable to easily output pre-existing formatted tables from a Quarto document into an Excel template.

Resources mentioned in the video and Zoom chat:
- flexlsx R package GitHub repository → https://github.com/pteridin/flexlsx
- dbplyr PR for Snowflake translations (contributed to by Flatiron Health) → https://github.com/tidyverse/dbplyr/pull/860

If you didn’t join live, one great discussion you missed from the zoom chat was about the pain points of exporting data from Quarto to Word or Excel, particularly concerning table formatting and styles. Attendees in the chat strongly highlighted the difficulty of managing table formatting, including issues with table cross-references, headers, and footers. They noted that dealing with styles often requires workarounds, such as creating flextables that match desired Word styles instead of relying on default table styles. Let us know below if you’d like to hear more about this topic!

Timestamps:
- 00:00 Introduction
- 02:23 “Can you talk about what Flatiron does and what your teams do?”
- 03:29 “Could you give us a few examples of the data types or collections that you might be working with?”
- 05:00 “Do you have longitudinal data?”
- 07:46 “Are you aware of any computer vision applications in the health care industry from your perspective?”
- 09:38 “Do you use mixed models or Bayesian MCMC?”
- 10:56 “How does your team use Quarto?”
- 16:59 “How do you convince stakeholders of the value of going open source (and handle security concerns)?”
- 22:56 “Do you allow people to have a certain amount of time to contribute back to open source?”
- 26:03 “I just want to understand a little bit about your support model for that group.”
- 29:57 “Do you have any tips for asynchronous working?”
- 31:02 “Are you like a Jira team or an Asana team for assigning tasks or tickets?”
- 32:10 “How many people on your platform team support Posit teams?”
- 34:24 “What does your team use for unstructured document analysis?”
- 36:24 “How important is domain knowledge in your recruitment?”
- 40:02 “Where do you store all of this stuff (data storage and databases)?”
- 42:04 “What is the approximate timeline from the time you do analysis to final deployment of results in the real world?”
- 44:31 “Is there a process for people getting things approved to use in your environment?”
- 47:39 “How do you handle the challenge of going back from Word to Quarto source code (after changes are tracked)?”
- 50:22 “What does a typical Workday look like for you?”
- 51:47 “Is there a piece of career advice that has either really helped you, that you’ve really liked, that you try to give to other people?”

Election Night Reporting Using R & Quarto (Andrew Heiss & Gabe Osterhout) | posit::conf(2025)

Speaker(s): Gabe Osterhout; Andrew Heiss

Abstract:

Election night reporting (ENR) is often clunky, outdated, and overpriced. The Idaho Secretary of State’s office leveraged R and Quarto to create a better ENR product for the end user while driving down costs using the open-source software we all know and love. With help from Dr. Andrew Heiss, R was used in every step of the process—from {dbplyr} backend to visualizing the results using {reactable} tables and {leaflet} maps, combining the output into a visually appealing Quarto website. Quarto was the ideal solution due to its scalability, quick deployment, responsive design, and easy navigation. In addition, Dr. Heiss will discuss the advantages of using a {targets} pipeline and creating programmatic code chunks in Quarto.

GitHub Repo - https://github.com/andrewheiss/election-desk

posit::conf(2025)

Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

Computing and recommending company-wide employee training pair decisions at scale… | posit::conf(2024)

Regis A. James developed MAGNETRON AI, an innovative, patent-pending tool that automates at-scale generation of high-quality mentor/mentee matches at Regeneron to enable rapid, yet accurate, company-wide pairings between employees seeking skill training/growth in any domain and others specifically capable of facilitating it. Built using R, Python, LLMs, shiny, MySQL, Neo4j, JavaScript, CSS, HTML, and bash, it transforms months of manual collaborative work into days. The reticulate, bs4dash, DT, plumber API, dbplyr, and neo4r packages were particularly helpful in enabling its full-stack data science. The expert recommendation engine of the AI tool has been successfully used for training a 400-member data science community of practice, and also for larger career development mentoring cohorts for thousands of employees across the company, demonstrating its practical value and potential for wider application.

Talk by Regis A. James

Slides: https://drive.google.com/file/d/1jq-WjuFz1Lp3m6v0SYjNoW1WcCBKPMs3/view?usp=sharing

Referenced talks: https://www.youtube.com/@datadrivendecisionmaking

Full Title: Computing and recommending company-wide employee training pair decisions at scale via an AI matching and administrative workflow platform developed completely in-house

Edgar Ruiz - Easing the pain of connecting to databases

Overview of the current and planned work to make it easier to connect to databases. We will review packages such as odbc and dbplyr, as well as the documentation found on our Solutions site (https://solutions.posit.co/connections/db/databases), which will soon include the best practices we find on how to connect to these vendors via Python.

Talk by Edgar Ruiz

Analyze and explore data stored in Snowflake using R

James Blair, Senior Product Manager, Cloud Integrations at Posit, will demonstrate using the R language to analyze and explore data stored in Snowflake. He will also show you how easy it is to set up an R environment inside Posit Workbench that runs as a native app on Snowpark Container Services.

You will also find out how the dbplyr interface can be used to push computation into Snowflake, giving you access to greater memory and compute power than in a standard R session.

It’s easy to get started. In just a few minutes, you can work in your R session securely inside Snowflake using the RStudio Pro IDE in Posit Workbench. Posit also supports VS Code and Jupyter for data scientists who prefer to work in other languages like Python, so you can continue to use the tools you know and love.

Learn more about the Snowflake and Posit integration: https://posit.co/solutions/snowflake/

duckplyr: Tight Integration of duckdb with R and the tidyverse - posit::conf(2023)

Presented by Kirill Müller

The duckplyr R package combines the convenience of dplyr with the performance of DuckDB. Better than dbplyr: Data frame in, data frame out, fully compatible with dplyr.

duckdb is the new high-performance analytical database system that works great with R, Python, and other host systems. dplyr is the grammar of data manipulation in the tidyverse, tightly integrated with R, but it works best for small or medium-sized data. duckdb has been designed with large or big data in mind, but currently you need to formulate your queries in SQL.

The new duckplyr package offers the best of both worlds. It transforms a dplyr pipe into a query object that duckdb can execute, using an optimized query plan. It is better than dbplyr because the interface is “data frames in, data frames out”, and no intermediate SQL code is generated.

The talk first presents our results, a bit of the mechanics, and an outlook for this ambitious project.

Materials: https://github.com/duckdblabs/duckplyr/

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Databases for data science with duckdb and dbt. Session Code: TALK-1100

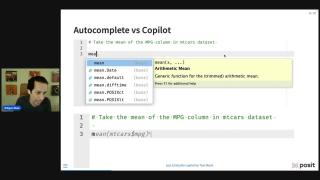

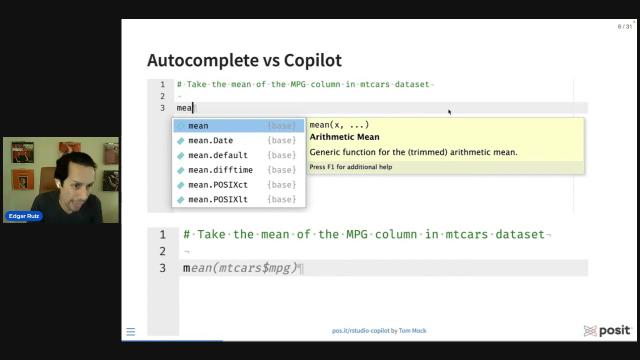

Edgar Ruiz - GitHub Copilot in RStudio

GitHub Copilot in RStudio - Edgar Ruiz

Presentation slides available at https://colorado.posit.co/rsc/rstudio-copilot/#/TitleSlide

Speaker Bio: Edgar Ruiz is a solutions engineer at Posit with a background in deploying enterprise reporting and business intelligence solutions. He is the author of multiple articles and blog posts sharing analytics insights and server infrastructure for data science. Edgar is the author and administrator of the https://db.rstudio.com web site and the current administrator of the sparklyr web site, https://spark.rstudio.com. He is a co-author of the dbplyr package and creator of the dbplot, tidypredict, and modeldb packages.

Presented at the 2023 R/Pharma Conference (October 26, 2023)

Edgar Ruiz | Programación con R (Programming with R) | RStudio (2019)

Sometimes, when working on an analysis in R, we need to split our data into groups and then run the same operation on each group. For example, the data may contain several countries, and we need to fit one model per country. Another case is running multiple operations over the same data. These cases require knowing how to iterate in R. This webinar focuses on how to use the purrr package to help solve this kind of problem.
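A minimal sketch of the split-then-iterate pattern the webinar describes, assuming the purrr package is installed. The built-in `mtcars` data stands in for the country data, with cylinder count (`cyl`) playing the role of the country column; the model formula is purely illustrative and is not taken from the webinar materials.

```r
library(purrr)

# Hypothetical stand-in: treat each cylinder count as one "country".
groups <- split(mtcars, mtcars$cyl)

# Fit the same linear model to every group.
models <- map(groups, function(df) lm(mpg ~ wt, data = df))

# Pull one coefficient out of each model as a named numeric vector.
slopes <- map_dbl(models, function(m) coef(m)[["wt"]])
```

`map()` returns a list with one model per group, while `map_dbl()` guarantees a numeric vector, so a model that fails to yield a single number raises an error instead of silently changing the output type.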

Download materials: https://rstudio.com/resources/webinars/programacio-n-con-r/

About Edgar: Edgar Ruiz is a Solutions Engineer at RStudio. He administers the official sparklyr and R for databases web sites. He is also the author of the R packages dbplot, tidypredict, and modeldb, and co-author of the dbplyr package.

Edgar Ruiz | Databases using R: The latest | RStudio (2019)

Learn about the latest packages and techniques that can help you access and analyze data found inside databases using R. Many of the techniques we will cover are based on our personal and the community’s experiences of implementing concepts introduced last year, such as offloading most of the data wrangling to the database using dplyr, and using the RStudio IDE to preview the database’s layout and data. Also, learn more about the most recent improvements to the RStudio products that are geared to aid developers in using R with databases effectively.

View materials: https://github.com/edgararuiz/databases-w-r

About the Author: Edgar Ruiz is the author and administrator of the https://db.rstudio.com web site and the current administrator of the sparklyr web site, https://spark.rstudio.com. He is the author of the Data Science in Spark with sparklyr cheatsheet, co-author of the dbplyr package, and creator of the dbplot package.