mcptools

Model Context Protocol for R

mcptools implements the Model Context Protocol (MCP) in R, enabling bidirectional integration between R and MCP-enabled AI tools. It allows R to function as both an MCP server (letting AI assistants like Claude Desktop, Claude Code, and VS Code Copilot run R code in your active R sessions) and as an MCP client (connecting third-party MCP servers to R-based chat applications).

The package solves the problem of AI assistants being unable to access or interact with your live R sessions and data. When used as a server, it enables models to run R code directly in your working sessions to answer questions about your data and environment. When used as a client, it integrates external tools (like GitHub, Confluence, or Google Drive) into R chat applications through the ellmer package, providing additional context for AI-powered workflows.
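As a rough sketch of the server side described above, an MCP-enabled tool such as Claude Desktop is usually pointed at an R process through its JSON configuration. The fragment below is an assumption-laden illustration, not a definitive setup: it assumes the package's `mcp_server()` entry point, that `Rscript` is on the system path, and a server name (`r-mcptools`) chosen here purely for illustration. Check the package documentation for the exact configuration your client expects.

```json
{
  "mcpServers": {
    "r-mcptools": {
      "command": "Rscript",
      "args": ["-e", "mcptools::mcp_server()"]
    }
  }
}
```

To expose a particular interactive session to the model, the package documentation describes calling `mcptools::mcp_session()` in that session (for example from `.Rprofile`). Treat this as something to verify against your installed version.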

Resources featuring mcptools

Simon Couch - Practical AI for data science

Abstract: While most discourse about AI focuses on glamorous, ungrounded applications, data scientists spend most of their days tackling unglamorous problems in sensitive data. Integrated thoughtfully, LLMs are quite useful in practice for all sorts of everyday data science tasks, even when restricted to secure deployments that protect proprietary information. At Posit, our work on ellmer and related R packages has focused on enabling these practical uses. This talk will outline three practical AI use cases (structured data extraction, tool calling, and coding) and offer guidance on getting started with LLMs when your data and code are confidential.

Presented at the 2025 R/Pharma Conference Europe/US Track.

Resources mentioned in the presentation:

- {vitals}: Large Language Model Evaluations https://vitals.tidyverse.org/

- {mcptools}: Model Context Protocol for R https://posit-dev.github.io/mcptools/

- {btw}: A complete toolkit for connecting R and LLMs https://posit-dev.github.io/btw/

- {gander}: High-performance, low-friction Large Language Model chat for data scientists https://simonpcouch.github.io/gander/

- {chores}: A collection of large language model assistants https://simonpcouch.github.io/chores/

- {predictive}: A frontend for predictive modeling with tidymodels https://github.com/simonpcouch/predictive

- {kapa}: RAG-based search via the kapa.ai API https://github.com/simonpcouch/kapa

- Databot https://positron.posit.co/dat

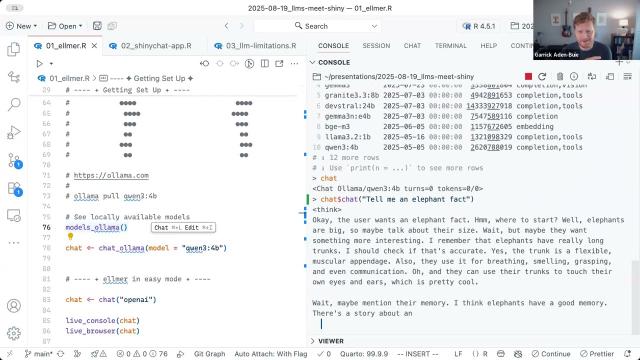

Building the Future of Data Apps: LLMs Meet Shiny

GenAI in Pharma 2025 kicks off with Posit’s Phil Bowsher and Garrick Aden-Buie sharing a technical overview of how LLMs can integrate with Shiny applications and much more!

Abstract: When we think of LLMs (large language models), what usually comes to mind are general-purpose chatbots like ChatGPT or code assistants like GitHub Copilot. But as useful as ChatGPT and Copilot are, LLMs have so much more to offer, if you know how to code. In this demo, Garrick will explain LLM APIs from zero and have you building and deploying custom LLM-empowered data workflows and apps in no time.

Resources mentioned in the session:

- GitHub Repository for session: https://github.com/gadenbuie/genAI-2025-llms-meet-shiny

- {mcptools} - Model Context Protocol servers and clients https://posit-dev.github.io/mcptools/

- {vitals} - Large language model evaluation for R https://vitals.tidyverse.org/