odbc

Connect to ODBC databases (using the DBI interface)

The odbc package provides a DBI-compliant interface to ODBC drivers, allowing R users to connect to databases like SQL Server, Oracle, Databricks, and Snowflake.

This package offers a faster alternative to the RODBC and RODBCDBI packages and is built on the nanodbc C++ library for performance. It works seamlessly with DBI functions for common database operations (reading and writing tables, executing queries) and integrates with dbplyr, which generates SQL automatically from dplyr code. The package handles communication between R and database driver managers, and supports both named data sources (DSNs) and direct driver connections.
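The DBI workflow described above can be sketched as follows. This is a minimal illustration, not code from the odbc documentation. The DSN name, driver name, server, database, and environment variables in the comments are placeholders. An in-memory SQLite database (via RSQLite) stands in for the ODBC connection so the sketch runs without a driver installed. Because DBI generics behave the same on any backend, only the dbConnect() call changes when you switch to odbc. The tbl() step also assumes dbplyr is installed.

```r
library(DBI)
library(dplyr)  # tbl() on a DBI connection also requires dbplyr to be installed

# In practice you would connect through odbc, either to a named data source:
#   con <- dbConnect(odbc::odbc(), dsn = "MyDSN")
# or directly to a driver (driver, server, and database are placeholders):
#   con <- dbConnect(odbc::odbc(),
#                    driver = "ODBC Driver 18 for SQL Server",
#                    server = "my-server.example.com",
#                    database = "my_db",
#                    uid = Sys.getenv("DB_USER"),
#                    pwd = Sys.getenv("DB_PASSWORD"))
# An in-memory SQLite database stands in here so the sketch runs anywhere.
con <- dbConnect(RSQLite::SQLite(), ":memory:")

# Write a data frame to a table, then list the tables
dbWriteTable(con, "mtcars", mtcars)
tables <- dbListTables(con)

# Execute a query and get a data frame back
four_cyl <- dbGetQuery(con, "SELECT mpg, cyl FROM mtcars WHERE cyl = 4")

# dbplyr translates dplyr verbs into SQL for the connection's dialect
four_cyl_lazy <- tbl(con, "mtcars") |>
  filter(cyl == 4) |>
  select(mpg, cyl)
show_query(four_cyl_lazy)                 # prints the generated SQL
four_cyl_local <- collect(four_cyl_lazy)  # runs the query and returns a tibble

dbDisconnect(con)
```

The lazy tbl() object does no work until collect() is called, so filtering happens inside the database rather than in R.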


Resources featuring odbc

From Data to Dollars: Improving Medical Billing Accuracy Using NLP (Julianne Gent, Emory Healthcare)

Protecting our Healthcare Heroes: Using Natural Language Processing to Prevent Billing Mistakes in Healthcare

Speaker(s): Julianne Gent

Abstract:

Maintaining accurate billing documentation in healthcare is essential to prevent revenue loss and preserve patient satisfaction. I’m Julianne Gent, Analytics Developer for Emory Digital, and I’m here to discuss the natural language processing algorithm we built using an automated SQL-to-R pipeline. This algorithm uses the ‘odbc’ and ‘stringr’ packages to import SQL query results into R, recognize billing patterns, and extract billing time. Our algorithm accurately captured billing data for 93% of over 250,000 notes. The billing provided by our hospital’s medical software? Only 40%. Our algorithm showed that an SQL-to-R pipeline can improve billing documentation and accuracy, and we are confident that it can be applied to many other industries.

posit::conf(2025)

Subscribe to posit::conf updates: https://posit.co/about/subscription-management/
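The talk does not publish its code, so the following is only a rough sketch of the "extract billing time" step, not the Emory algorithm. The function name extract_billing_minutes(), the clinical_notes table, its columns, and the "N minutes" phrasing are all hypothetical assumptions chosen for illustration. In a real pipeline, the note text would arrive through an odbc connection, as the commented query shows.

```r
library(stringr)

# Hypothetical: pull note text through odbc, e.g.
#   notes <- DBI::dbGetQuery(con, "SELECT note_id, note_text FROM clinical_notes")
# The table, columns, and phrasing pattern below are illustrative assumptions.

extract_billing_minutes <- function(note_text) {
  # Capture a number followed by "minutes", "mins", or "min" (case-insensitive)
  matches <- str_match(
    note_text,
    regex("(\\d+)\\s*(?:minutes|mins?)\\b", ignore_case = TRUE)
  )
  # Column 2 holds the captured number; NA where no time is documented
  as.integer(matches[, 2])
}

example_notes <- c(
  "Total time spent with patient: 45 minutes.",
  "Counseling provided, 30 min face to face.",
  "Follow-up scheduled; no time documented."
)
extract_billing_minutes(example_notes)
```

Real clinical notes would need many more patterns than this single regular expression, which is why the NA results are worth reviewing rather than discarding.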

Edgar Ruiz - Easing the pain of connecting to databases

Overview of the current and planned work to make it easier to connect to databases. We will review packages such as odbc and dbplyr, as well as the documentation on our Solutions site (https://solutions.posit.co/connections/db/databases), which will soon include the best practices we find for connecting to these vendors from Python.

Talk by Edgar Ruiz

Workflow Demo Live Q&A - September 25th!

On September 25th, we hosted a Workflow Demo on data-level permissions using Posit Connect (with Databricks, Snowflake, and OAuth): https://youtu.be/ivEoeyWJzVY?feature=shared

Links mentioned in the Q&A:

- Release blurb: https://docs.posit.co/connect/news/#posit-connect-2024.08.0

- Security: https://docs.posit.co/connect/admin/integrations/oauth-integrations/security.html

- Publishing Quarto: https://docs.posit.co/connect/how-to/basic/publish-databricks-quarto-notebook/

- sparklyr: https://github.com/sparklyr/sparklyr?tab=readme-ov-file#connecting-through-databricks-connect-v2

- odbc: https://github.com/r-dbi/odbc?tab=readme-ov-file#odbc-

Helpful resources for this workflow:

- Full examples to get you started: https://github.com/posit-dev/posit-sdk-py/tree/main/examples/connect

- Admins will likely be most interested in starting here: https://docs.posit.co/connect/admin/integrations/oauth-integrations/databricks/

- End users will be most interested here: https://docs.posit.co/connect/user/oauth-integrations/

- Databricks Integrations with Python Cookbook: https://docs.posit.co/connect/cookbook/content/integrations/databricks/python/

- Databricks Integrations with R Cookbook: https://docs.posit.co/connect/cookbook/content/integrations/databricks/r/

- Snowflake Integrations with Python Cookbook: https://docs.posit.co/connect/cookbook/content/integrations/snowflake/python/

Data-level permissions using Posit Connect (with Databricks, Snowflake, OAuth)

Should one viewer of your app be able to see more (or different) data than another? Maybe colleagues in California should only see data relevant to them? Or managers should only have access to their own employee data?

The Connect team joined us for a demo on inheriting data-level permissions using Posit Connect and Databricks Unity Catalog. While this workflow uses Databricks to illustrate federated data access controls, this same methodology can also be applied to Snowflake or any external data source that supports OAuth.

During this workflow demo, you will learn:

- How to define row-level access controls in Databricks Unity Catalog

- How to create a Databricks OAuth integration in Posit Connect

- How to write interactive applications that utilize the viewer’s Databricks credentials when reading data from Databricks Unity Catalog, providing the viewer with a personalized experience depending on their level of data access

- How to deploy this application to Posit Connect and share it within your organization
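The first step in the list above, defining row-level access controls, is done in Databricks SQL rather than in R, but it can be issued from R. The following is a minimal sketch that follows the pattern in the Databricks row filter documentation: a SQL function decides which rows a user may see, and ALTER TABLE ... SET ROW FILTER attaches it to a table. The helper row_filter_sql(), the sales table, the region column, and the admins group are hypothetical. Values are pasted into SQL unescaped, so use only trusted inputs.

```r
# Hypothetical helper: build the two Databricks SQL statements that attach a
# Unity Catalog row filter to a table. Non-admins only see rows where
# `column` equals `value`; members of `admin_group` see every row.
# Inputs are pasted into SQL unescaped, so pass only trusted values.
row_filter_sql <- function(table, column, value, admin_group = "admins") {
  # Derive a function name from the table name (dots become underscores)
  filter_fn <- paste0(gsub("[^A-Za-z0-9_]", "_", table), "_row_filter")
  c(
    sprintf(
      "CREATE OR REPLACE FUNCTION %s(%s STRING) RETURN IF(IS_ACCOUNT_GROUP_MEMBER('%s'), true, %s = '%s')",
      filter_fn, column, admin_group, column, value
    ),
    sprintf("ALTER TABLE %s SET ROW FILTER %s ON (%s)", table, filter_fn, column)
  )
}

statements <- row_filter_sql("sales", "region", "CA")
statements

# With a live connection, for example
#   con <- DBI::dbConnect(odbc::databricks(), httpPath = "<http-path>")
# run each statement in order:
#   for (sql in statements) DBI::dbExecute(con, sql)
```

Once the filter is in place, Unity Catalog applies it to every query. An app that connects with the viewer's own OAuth credentials therefore sees only that viewer's rows without any filtering logic in the app itself.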

If you’d like to talk further with our team 1:1 about doing this, you can find a time to chat here: https://posit.co/schedule-a-call/?booking_calendar__c=WorkflowDemo

P.S. To enable OAuth integrations, your team will need to upgrade to Posit Connect 2024.08.0 or later. This feature is available in the Enhanced and Advanced product tiers.

Helpful resources for this workflow:

- Full examples to get you started: https://github.com/posit-dev/posit-sdk-py/tree/main/examples/connect

- Admins will likely be most interested in starting here: https://docs.posit.co/connect/admin/integrations/oauth-integrations/databricks/

- End users will be most interested here: https://docs.posit.co/connect/user/oauth-integrations/

- Q&A link: https://youtube.com/live/TZQY6rm6hU4?feature=share


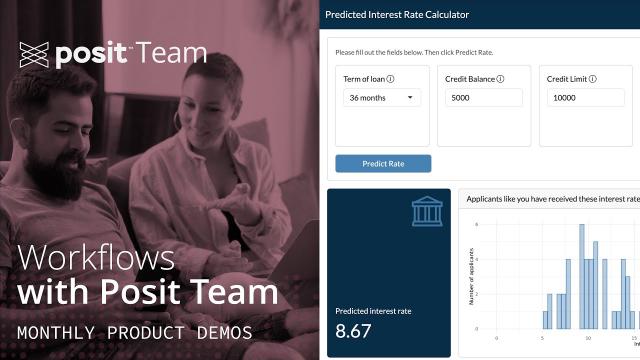

Predicting Lending Rates with Databricks, tidymodels, and Posit Team

Machine learning algorithms are reshaping financial decision-making, changing how the industry manages financial risk.

For our workflow demo today on June 26th at 11 am ET, Garrett Grolemund at Posit will show how to use both Posit and Databricks to apply machine learning methods to the consumer credit market, where accurately predicting lending rates is critical for customer acquisition.

*Please note that while the workflow focuses on a financial example, the general workflow will be useful to those using Databricks and R together across any industry.

During this workflow demo, you will learn how to:

- Connect to historical lending rate data stored in Databricks Delta Lake

- Tune and cross-validate a penalized linear regression (LASSO) that predicts interest rates

- Select variables with the penalized linear regression model (LASSO)

- Build an interactive Shiny app to provide a customer-facing user interface for our model

- Deploy the app to production on Posit Connect, and arrange for the app to access Databricks
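The tuning and variable-selection steps in the list above can be sketched with tidymodels. This is not the demo's code; the demo's own materials are in the GitHub repo linked below. Here the built-in mtcars data stands in for the lending-rate table, and mpg stands in for the interest rate. With Databricks, you would instead pull the table through odbc, for example with dplyr::tbl(con, "<table>") |> dplyr::collect(). The sketch assumes the tidymodels and glmnet packages are installed.

```r
library(tidymodels)  # attaches parsnip, recipes, rsample, tune, dials, workflows, dplyr

# Stand-in data (assumption): mtcars replaces the lending-rate table
set.seed(123)
split <- initial_split(mtcars, prop = 0.8)
train <- training(split)

# LASSO = penalized linear regression with a pure L1 penalty (mixture = 1)
lasso_spec <- linear_reg(penalty = tune(), mixture = 1) |>
  set_engine("glmnet")

# Put predictors on a common scale so the penalty treats them equally
rec <- recipe(mpg ~ ., data = train) |>
  step_normalize(all_numeric_predictors())

wf <- workflow() |>
  add_recipe(rec) |>
  add_model(lasso_spec)

# Tune the penalty with 5-fold cross-validation over a regular grid
folds <- vfold_cv(train, v = 5)
grid <- grid_regular(penalty(), levels = 20)
tuned <- tune_grid(wf, resamples = folds, grid = grid)
best <- select_best(tuned, metric = "rmse")

# Refit on the full training set with the chosen penalty
final_fit <- finalize_workflow(wf, best) |> fit(data = train)

# Variable selection: predictors whose coefficients LASSO shrank to zero drop out
selected <- final_fit |>
  extract_fit_parsnip() |>
  tidy() |>
  filter(estimate != 0, term != "(Intercept)")
selected
```

Because the L1 penalty can set coefficients exactly to zero, the same fitted model both predicts and selects variables, which is why the demo lists tuning and variable selection as separate outcomes of one model.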

Resources for the demo:

- GitHub repo for today’s materials: https://github.com/posit-dev/databricks-finance-app

- Accompanying guide: https://pub.demo.posit.team/public/predicting-lending-rates/lending-rate-prediction.html

- Q&A recording: https://youtube.com/live/wNI3AhHP7uM

Additional follow-up links:

- GitHub repo: https://github.com/posit-dev/databricks-finance-app

- Accompanying guide: https://pub.demo.posit.team/content/fec42b3d-3aa9-43e1-8312-0ff553d09851/lending-rate-prediction.html

- While this demo uses the odbc package to connect to Databricks, you can also use the sparklyr R package. Learn more about both here: https://docs.posit.co/ide/server-pro/user/rstudio-pro/guide/databricks.html

- Example using sparklyr instead of odbc: https://posit.co/blog/reporting-on-nyc-taxi-data-with-rstudio-and-databricks/

- Posit Workbench provides additional features for managing Databricks credentials. Learn more here: https://docs.posit.co/ide/server-pro/user/posit-workbench/guide/databricks.html#databricks-with-r

- For more on the Posit x Databricks partnership: https://posit.co/solutions/databricks/

- Blog post on Edgar’s workshop on Databricks at conf: https://posit.co/blog/using-databricks-with-r-conf-workshop/

- Solutions article on ODBC and Databricks: https://solutions.posit.co/connections/db/databases/databricks/

Want to chat more with Posit? To talk with Posit about integrating Posit & Databricks: https://posit.co/schedule-a-call/?booking_calendar__c=DatabricksJune2024Demo

Had fun and want to join again? You can add the monthly recurring event to your calendar with this link: https://pos.it/team-demo

Connecting RStudio and Databricks with ODBC

The odbc package, in conjunction with a driver, provides DBI support and an ODBC connection.

With the new odbc::databricks() function, odbc determines and configures the settings needed to create an ODBC connection to your Databricks account. Your Databricks HTTP path (the httpPath argument) is typically the only argument you need to supply. Once you are connected, your Databricks data appears in the RStudio Connections Pane, and you can analyze it in RStudio.
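The following is a minimal sketch of the connection step described above, wrapped in a hypothetical helper. The helper name connect_databricks() and the DATABRICKS_HTTP_PATH environment variable are assumptions for illustration; odbc does not read that variable itself. The sketch also assumes, based on the odbc reference for databricks(), that the workspace URL defaults to the DATABRICKS_HOST environment variable and that credentials can be picked up from the environment (for example, credentials managed by Posit Workbench). Check the odbc documentation for the full lookup order.

```r
library(DBI)

# Hypothetical helper around odbc::databricks(). DATABRICKS_HTTP_PATH is an
# assumed environment variable name, not one that odbc reads on its own.
connect_databricks <- function(http_path = Sys.getenv("DATABRICKS_HTTP_PATH")) {
  if (!nzchar(http_path)) {
    stop("Supply the HTTP path of your Databricks SQL warehouse or cluster.",
         call. = FALSE)
  }
  # odbc::databricks() fills in the remaining connection settings; by default
  # the workspace URL comes from the DATABRICKS_HOST environment variable.
  dbConnect(odbc::databricks(), httpPath = http_path)
}

# Usage (placeholder path; copy the real one from your warehouse's
# connection details in the Databricks UI):
# con <- connect_databricks("/sql/1.0/warehouses/<warehouse-id>")
# dbGetQuery(con, "SELECT current_catalog()")
```

Failing early on an empty path gives a clearer error than letting the driver reject an incomplete connection string.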

Learn more:

- Databricks x Posit: https://posit.co/solutions/databricks/

- Empowering R and Python Developers: Databricks and Posit Announce New Integrations: https://posit.co/blog/databricks-and-posit-announce-new-integrations/

- RStudio IDE and Posit Workbench 2023.12.0: What’s New: https://posit.co/blog/rstudio-2023-12-0-whats-new/

- Posit Professional Drivers 2024.03.0: Support for Apple Silicon: https://posit.co/blog/pro-drivers-2024-03-0/

Contact our sales team to schedule a demo: https://posit.co/schedule-a-call/?booking_calendar__c=Databricks

Databricks Pro Driver in Posit Workbench

We’ve added a Databricks driver to our Professional Drivers. The RStudio Connections Pane lets users connect to their Databricks clusters from the IDE: select the Databricks driver from the list of available drivers to establish the connection. The driver can also be used with the new databricks() function from the odbc package to connect to Databricks clusters and SQL warehouses.
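Before connecting from code instead of the Connections Pane, you can check which ODBC drivers are installed. The following is a sketch under stated assumptions. The helper find_databricks_driver() is hypothetical, and the name patterns it matches ("Databricks" for the Posit Pro Driver, "Simba Spark" for the Simba-based driver) are guesses; the exact names depend on your installation. It relies on odbc::odbcListDrivers(), which returns a data frame with name, attribute, and value columns.

```r
# Hypothetical helper: return the name of an installed Databricks ODBC driver,
# or NA if none is found. odbc::odbcListDrivers() returns a data frame with
# name, attribute, and value columns (one row per driver attribute).
find_databricks_driver <- function(drivers = odbc::odbcListDrivers()) {
  driver_names <- unique(drivers$name)
  # Assumed name patterns; adjust to match your installed driver names
  hits <- driver_names[grepl("databricks|simba spark", driver_names,
                             ignore.case = TRUE)]
  if (length(hits) == 0) NA_character_ else hits[[1]]
}

# Usage with a real installation (HTTP path is a placeholder):
# drv <- find_databricks_driver()
# con <- DBI::dbConnect(odbc::databricks(),
#                       httpPath = "/sql/1.0/warehouses/<warehouse-id>",
#                       driver = drv)
```

Passing the driver list as a default argument keeps the helper testable with a hand-built data frame on machines without any drivers installed.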


Databricks x Posit | Improved Productivity for your Data Teams

Timestamps:

- 0:00-7:30 Introduction to Databricks

- 7:31-15:40 Introduction to Posit

- 15:41-27:51 Demo of VS Code, Model Serving

- 27:52-30:00 Demo of signing into Posit Workbench via OAuth

- 30:01-37:50 New Databricks pane: accessing Databricks compute and exploring data in Unity Catalog via Databricks Connect

- 37:51-43:40 New simplified ODBC access to Databricks

- 43:41-50:06 Publishing data apps to Posit Connect with a Databricks backend

- 50:07-51:18 Demo recap

- 51:19-54:15 Roadmap and the future

- 54:16-1:07:33 Q&A

Talk with our team at Posit by scheduling a call here: https://pos.it/databricks-posit-event

Databricks and Posit are partnering to help professional data teams do more with the power of their favorite data science tools seamlessly integrated with the Databricks Lakehouse.

Our partnership aims to help Python and R developers build and share valuable data-driven content using familiar, state-of-the-art tools that maximize their productivity, all while leveraging the data processing and governance capabilities of Databricks.

In this joint event, James Blair, Product Manager of Cloud Integrations at Posit, and Rafi Kurlansik, Lead Product Specialist at Databricks, will share a live demo showing how data teams can build and share data-driven content more easily with the joint power of Posit and Databricks, along with an exciting look into the future of the partnership.

Leveraging R & Python in Tableau with RStudio Connect | James Blair | RStudio

Leveraging R & Python in Tableau with RStudio Connect Overview Demo / Q&A with James Blair

Tableau combines the ease of drag-and-drop visual analytics with an open, extensible platform. RStudio develops free and open tools for data science, including the world’s most popular IDE for R. RStudio also develops an enterprise-ready, modular data science platform to help data science teams using R and Python scale and share their work.

Now, with new functionality in RStudio Connect, users can have the best of both worlds. Tableau users can call R and Python APIs from Tableau calculated fields, getting access to all the power and analytic depth of these open-source data science ecosystems in real time.

For Tableau users, this makes it easy to add dynamic, advanced analytic features from R and Python to a Tableau dashboard, such as scoring predictive models on Tableau data. They can leverage all the great work done by their organization’s data science team and even call both R and Python APIs from a single dashboard.

Data science teams can continue to use the code-first development and deployment tools from RStudio that they know and love. Using these tools, they can build and share R APIs (using the plumber package) and Python APIs (using the FastAPI framework).

Speaker Bio: James is a Solutions Engineer at RStudio, where he focuses on helping RStudio commercial customers successfully manage RStudio products. He is passionate about connecting R to other toolchains through tools like ODBC and APIs. He has a background in statistics and data science and finds any excuse he can to write R code.

A few other helpful links: Tableau Integration Documentation: https://docs.rstudio.com/rsc/integration/tableau/

Tableau / RStudio Connect Blog Post: https://blog.rstudio.com/2021/10/12/rstudio-connect-2021-09-0-tableau-analytics-extensions/

Embedding Shiny Apps in Tableau using shinytableau blog: https://blog.rstudio.com/2021/10/21/embedding-shiny-apps-in-tableau-dashboards-using-shinytableau/

James’ slides: https://github.com/blairj09-talks/rstudio-tableau-webinar

James Blair & Barret Schloerke | Integrating R with Plumber APIs | RStudio (2020)

Full title: Expanding R Horizons: Integrating R with Plumber APIs

In this webinar, we will focus on using the Plumber package as a tool for integrating R with other frameworks and technologies. Plumber is a package that converts your existing R code into a web API using special one-line comments. Example use cases will demonstrate the power of APIs in data science and highlight new features of the Plumber package. Finally, we will look at methods for deploying Plumber APIs to make them widely accessible.
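The comment-based approach described above looks roughly like the following minimal sketch of a plumber.R file. The endpoint path /sum and the helper add_numbers() are illustrative names, not taken from the webinar. Lines starting with #* are Plumber annotations: @get sets the HTTP method and path, and @param documents an input. Ordinary R code in the file, such as the helper, runs when the API is loaded.

```r
# plumber.R: a minimal Plumber API (endpoint and helper names are illustrative)

# Ordinary R code in the file runs once when the API is loaded
add_numbers <- function(a, b) {
  # Query-string parameters arrive as character strings, so convert first
  as.numeric(a) + as.numeric(b)
}

#* Add two numbers
#* @param a First number
#* @param b Second number
#* @get /sum
function(a, b) {
  list(result = add_numbers(a, b))
}

# To serve the API locally (from a separate R session or script):
#   plumber::pr("plumber.R") |> plumber::pr_run(port = 8000)
# then request http://127.0.0.1:8000/sum?a=2&b=3
```

Keeping the logic in a plain function and using the annotated endpoint only as a thin wrapper lets you test the R code without starting a web server.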

Webinar materials: https://rstudio.com/resources/webinars/expanding-r-horizons-integrating-r-with-plumber-apis/

About James: James is a Solutions Engineer at RStudio, where he focuses on helping RStudio commercial customers successfully manage RStudio products. He is passionate about connecting R to other toolchains through tools like ODBC and APIs. He has a background in statistics and data science and finds any excuse he can to write R code.

About Barret: I specialize in large data visualization, where I use the interactivity of a web browser, the fast iterations of the R programming language, and the large data storage capacity of Hadoop.