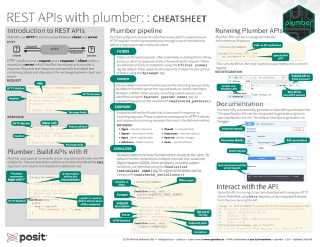

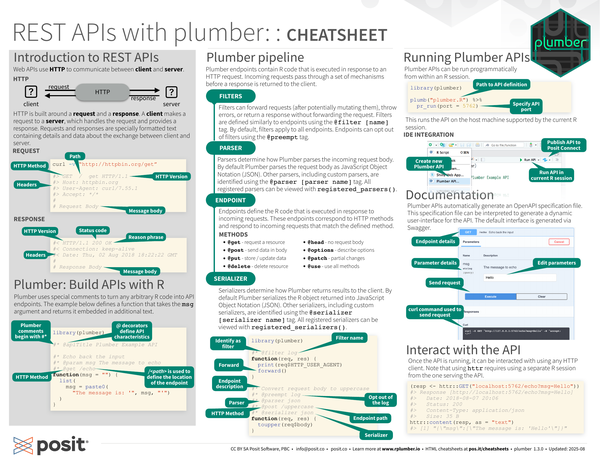

Plumber is an R package that turns R functions into web APIs by adding special comments to your code. It uses roxygen2-style annotations to define API endpoints, allowing you to expose R functions as HTTP services that accept parameters and return JSON, plots, or other data formats.

The package makes it simple to create RESTful APIs without learning a web framework: it handles HTTP routing, parameter parsing, and response serialization automatically. It supports both GET and POST requests, accepts parameters from query strings or JSON bodies, and can return various output formats including JSON and images. This enables R developers to make their analysis and modeling work accessible to other applications and services.
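As a minimal sketch of the annotation style described above, the following `plumber.R` file defines three endpoints. The endpoint paths and the `add_numbers()` helper are illustrative names chosen for this example; they are not part of plumber itself.

```r
# plumber.R -- a minimal sketch; paths and helper names are illustrative.

# Plain R function holding the logic, so it can be tested without a server.
# Query-string values arrive as character strings, hence the coercion.
add_numbers <- function(a, b) {
  as.numeric(a) + as.numeric(b)
}

#* Echo back a message supplied in the query string
#* @param msg The message to echo
#* @get /echo
function(msg = "") {
  list(msg = paste0("The message is: '", msg, "'"))
}

#* Add two numbers sent as query parameters or in a JSON body
#* @param a The first number
#* @param b The second number
#* @post /sum
function(a, b) {
  add_numbers(a, b)
}

#* Return a histogram of random values as a PNG image
#* @serializer png
#* @get /plot
function() {
  hist(rnorm(100))
}
```

Assuming the file is saved as `plumber.R`, it could be served locally with `plumber::pr_run(plumber::pr("plumber.R"), port = 8000)`; the port is arbitrary. Keeping the arithmetic in a plain function is a design choice made here so the logic can be unit tested without starting a server.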

Contributors

Resources featuring plumber

When R Met Python: A Meet Cute on Posit Connect (Blake Abbenante, Suffolk Construction)

Speaker(s): Blake Abbenante

Abstract:

Data teams often leverage multiple programming languages, driven by a multitude of reasons, be it task-specific requirements or personal preference. In this talk, I’ll share how we enabled our developer base to build in the language of their choice, and how we leverage Posit Connect to unify them. By exposing core functionality as APIs using R’s plumber and Python’s FastAPI packages, and building parity in internal packages for both languages, we created a shared toolkit that streamlines workflows and fosters collaboration. Join me to explore our journey in breaking down language barriers and empowering data innovation.

posit::conf(2025)

Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

Lift Off! Building REST APIs that Fly (Joe Kirincic, RESTORE-Skills) | posit::conf(2025)

Speaker(s): Joe Kirincic

Abstract:

Picture the scene: you’ve successfully deployed your ML model as a plumber API into production. Your company loves it! One team uses the API’s predictions as an input to their own ML model. Another team displays the predictions in an internal Shiny app. But once adoption reaches a certain point, your API’s performance starts to degrade. What can you do to help your service maintain high performance in the face of high demand? In this talk, we’ll show some strategies for taking your API performance to the next level. Using two R packages, {yyjsonr} and {mirai}, we can augment our API with faster JSON processing and better responsiveness through asynchronous computing, allowing our services to do great things at scale at no additional cost.
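The asynchronous half of this idea can be sketched with {mirai} on its own. This is not the speaker's code: `slow_predict()` is a hypothetical stand-in for model scoring, and the choice of two local daemons is arbitrary. The {yyjsonr} part of the talk is not shown here.

```r
library(mirai)

# Start two background daemons (worker processes) on the local machine.
daemons(2)

# Hypothetical stand-in for a slow model prediction.
slow_predict <- function(x) {
  Sys.sleep(0.5)
  x * 2
}

# mirai() returns immediately; the expression is evaluated on a daemon.
# Objects the expression needs are passed as named arguments.
m <- mirai(slow_predict(x), slow_predict = slow_predict, x = 21)

# ... the main R process is free to handle other work here ...

# Collect the value, waiting for it if it is not ready yet.
result <- m[]

# Shut the daemons down again.
daemons(0)
```

In an API the blocking `m[]` call would normally be avoided; since {mirai} integrates with {promises}, a handler can hand the pending result back to the server rather than wait for it.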

There’s a new Plumber in town (Thomas Lin Pedersen, Posit) | posit::conf(2025)

Speaker(s): Thomas Lin Pedersen

Abstract:

Announcing plumber2! The popular plumber package has gotten a successor. In this talk I’ll walk the audience through the “How” and the “Why”, what it means for existing plumber APIs, and of course showcase a range of amazing things that are suddenly possible with the new iteration. If you are an existing plumber user I hope to leave you full of excitement about the future of plumber. If you have never used plumber before I hope this talk will show you just how easy it is to create modern and powerful web servers in R.

{mirai} and {crew}: next-generation async to supercharge {promises}, Plumber, Shiny, and {targets}

{mirai} is a minimalist, futuristic, and reliable way to parallelise computations – either on the local machine, or across the network. It combines the latest scheduling technologies with fast, secure connection types. With built-in integration to {promises}, {mirai} provides a simple and efficient asynchronous back-end for Shiny and Plumber apps. The {crew} package extends {mirai} to batch computing environments for massively parallel statistical pipelines, e.g. Bayesian modeling, simulations, and machine learning. It consolidates tasks in a central {R6} controller, auto-scales workers, and helps users create plug-ins for platforms like SLURM and AWS Batch. It is the new workhorse powering high-performance computing in {targets}.

Talk by Charlie Gao and Will Landau

Slides: https://wlandau.github.io/posit2024

GitHub Repo: https://github.com/wlandau/posit2024

mirai: https://shikokuchuo.net/mirai/

crew: https://wlandau.github.io/crew/

Computing and recommending company-wide employee training pair decisions at scale… posit conf 2024

Regis A. James developed MAGNETRON AI, an innovative, patent-pending tool that automates at-scale generation of high-quality mentor/mentee matches at Regeneron to enable rapid, yet accurate, company-wide pairings between employees seeking skill training/growth in any domain and others specifically capable of facilitating it. Built using R, Python, LLMs, shiny, MySQL, Neo4j, JavaScript, CSS, HTML, and bash, it transforms months of manual collaborative work into days. The reticulate, bs4dash, DT, plumber API, dbplyr, and neo4r packages were particularly helpful in enabling its full-stack data science. The expert recommendation engine of the AI tool has been successfully used for training a 400-member data science community of practice, and also for larger career development mentoring cohorts for thousands of employees across the company, demonstrating its practical value and potential for wider application.

Talk by Regis A. James

Slides: https://drive.google.com/file/d/1jq-WjuFz1Lp3m6v0SYjNoW1WcCBKPMs3/view?usp=sharing

Referenced talks: https://www.youtube.com/@datadrivendecisionmaking

Full Title: Computing and recommending company-wide employee training pair decisions at scale via an AI matching and administrative workflow platform developed completely in-house

Lovekumar Patel - Empowering Decisions: Advanced Portfolio Analysis and Management through Shiny

This talk explores the creation of an advanced portfolio analysis system using Shiny and Plumber API. Focused on delivering real-time insights and interactive tools, the system transforms financial analysis with user-centric design and reusable Shiny modules. The talk will delve into how complex financial data is made dynamic and interactive via an internal R package integrating with an ag-grid JavaScript library to enhance user engagement and decision-making efficiency. A highlight is the Plumber API’s dual role: powering the current system and hosting other enterprise applications in other languages (Python), demonstrating remarkable cross-platform integration. This system exemplifies the innovative potential of R in financial analytics.

Talk by Lovekumar Patel

Slides: https://docs.google.com/presentation/d/1dJJRBTxPL4x6anK_b8ya3iU4aIoFrC-z/edit?usp=sharing&ouid=110604780241045194549&rtpof=true&sd=true

GitHub Repo: https://github.com/lkptl

Using R to develop production modeling workflows at Mayo Clinic - posit::conf(2023)

Presented by Brendan Broderick

Developing workflows that help train models and also help deploy them can be a difficult task. In this talk I will share some tools and workflow tips that I use to build production model pipelines using R. I will use a project of predicting patients who need specialized respiratory care after leaving the ICU as an example. I will show how to use the targets package to create a reproducible and easy to manage modeling and prediction pipeline, how to use the renv package to ensure a consistent environment for development and deployment, and how to use plumber, vetiver, and shiny applications to make the model accessible to care providers.

Presented at Posit Conference, September 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Leave it to the robots: automating your work. Session Code: TALK-1149

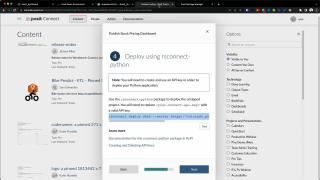

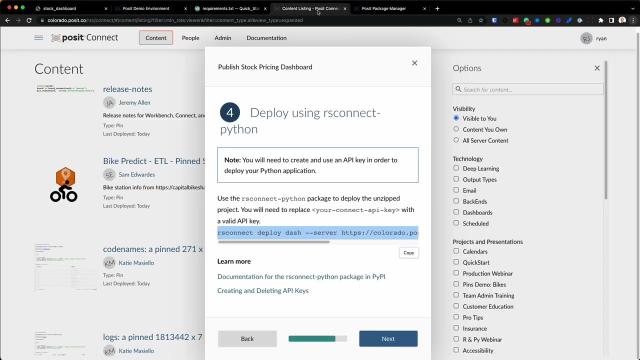

Deploying a Python application with Posit Connect

Posit Connect is our flagship publishing platform for data products built in R and Python.

Learn more: https://posit.co/products/enterprise/connect/

Book a demo of Connect: https://posit.co/schedule-a-call/?booking_calendar__c=RSC_YT_Demo

With Connect, you can deploy, manage, and share your R and Python content, including Shiny applications, Dash, Streamlit, and Voilà applications, R Markdown reports, Jupyter Notebooks, Quarto documents, dashboards, APIs (Plumber, Flask), and more.

Give stakeholders authenticated access to the content they need, and schedule reports to update automatically.

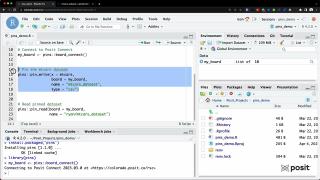

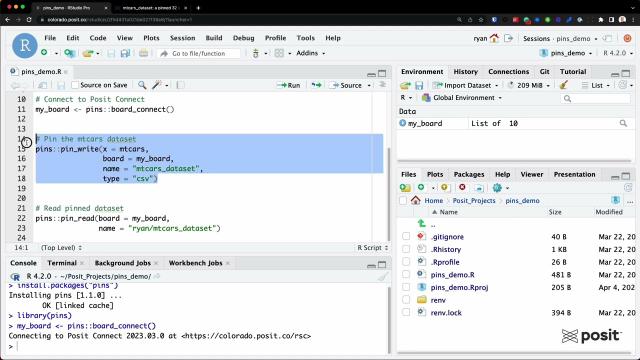

Leveraging Pins with Posit Connect

Presented by Ryan Johnson, Data Science Advisor at Posit

You might find this helpful if:

- You have reports that need to be regularly updated so you want to schedule them to run with the newest data each week

- You reuse data across multiple projects or pieces of content (Shiny app, Jupyter Notebooks, Quarto doc, etc.)

- You’ve chased a CSV through a series of email exchanges, or had to decide between data-final.csv and data-final-final.csv

- You haven’t heard of pins yet!

For some workflows, a CSV file is the best choice for storing data. However, for the majority of cases, the data would do better if stored somewhere centrally accessible by multiple people where the latest version is always available. This is particularly true if that data is reused across multiple projects or pieces of content. With the pins package it’s easier than ever to have repeatable data.

Timestamps:

- 0:17 - install the pins package and load into your environment
- 0:32 - register a board
- 0:59 - connecting to your Posit Connect instance from Posit Workbench or RStudio IDE
- 1:43 - define the Connect instance as your board
- 2:01 - pin the mtcars dataset to your Connect instance
- 2:38 - a pinned dataset on Posit Connect
- 2:50 - reading a pinned dataset
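The steps covered in the video (register a board, pin `mtcars`, read it back) can be sketched roughly as follows. To keep the example runnable without a server, a temporary local board stands in for Posit Connect; that substitution is an assumption of this sketch, not what the video does.

```r
library(pins)

# Register a board. A temporary local board is used here so the sketch runs
# anywhere; against a real Connect server, board_connect() would take its
# place (assuming server credentials are already configured).
board <- board_temp()

# Pin the mtcars dataset to the board under the name "mtcars".
pin_write(board, mtcars, name = "mtcars", type = "rds")

# Read the pinned dataset back, for example from another project.
cars <- pin_read(board, "mtcars")
```

Because every reader calls `pin_read()` against the same board, each one picks up the latest pinned version instead of a copy passed around by email.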

Additional resources:

- Example workflow that involves Quarto, pins, plumber API, vetiver and shiny: machine-learning-pipeline-with-vetiver-and-quarto/

- Connect User Guide - Pins for R: https://docs.posit.co/connect/user/pins/

- Connect User Guide - Pins for Python: https://docs.posit.co/connect/user/python-pins/

- 9 ways to use Posit Connect that you shouldn’t miss: https://posit.co/blog/9-ways-posit-connect/

Learn more: If you haven’t had a chance to try Posit Connect before or you’d like to learn how your team can better leverage pins, schedule a demo with our team to learn more! https://posit.co/schedule-a-call/?booking_calendar__c=RSC_Demo

On the last Wednesday of every month, we host a Posit Team demo and Q&A session that is open to all. You can use this to add the event to your own calendar: pos.it/team-demo

Dan Negrey @ MarketBridge | Creating a framework for consistent measurement | Data Science Hangout

We were joined by Dan Negrey, Director, Analytics at MarketBridge.

At (15:11) we asked Dan about a tip for impacting the business with data science.

So I think every business is going to have KPIs (Key Performance Indicators), and there’s going to be other metrics besides KPIs, things that lead into that. As crazy as it sounds, some organizations struggle to measure those and to do so in a consistent and repeatable way.

Maybe they measure something that just comes from one person sitting at a desk, and they’ve done it for six years, and they leave. All of a sudden, who knows how they do that?

Creating a framework for consistent measurement is huge for an organization.

The measurement is consistent and the outcomes are measured consistently. Then taking action to improve those outcomes can be thought of as more reliable because the measurement process is consistent.

So that would be one thing for sure. Another – on that note, is decision making. Every company makes decisions. A lot of us are here because we like to do this kind of work, but most of our companies exist because they like to make money, and they like to grow. So we find a balance between doing what we do to help our company to achieve their goals.

Find ways to help your company optimize cost, reduce waste and increase growth.

All of that is through measuring and looking at decisions that have been made in the past and thinking about how they could have been made differently. This could be through historical analysis or building models to help make those decisions more effectively.

That’s a huge win for any organization.

There was also a lot of love for repeatable data with the pins package at this Data Science Hangout.

Dan Negrey shared: “Pins has been a huge package that we’ve started using a year or so ago…if you’ve never used pins, it’s definitely worth checking out.”

Helpful resources on pins:

- Pins for R: http://pins.rstudio.com/
- Pins for Python: https://lnkd.in/ghmxiEHV
- Great repo that uses pins: https://lnkd.in/ezvBkav
- Workflow that involves Quarto, pins, plumber API, vetiver and shiny: https://lnkd.in/e6gnMXfD
- Link to Ryan’s video & stepping stones: https://lnkd.in/erR-Mjr9

Other resources: MarketBridge career page (with open data science roles): https://marketbridge.applytojob.com/

To join future data science hangouts, add to your calendar here: pos.it/dsh (All are welcome! We’d love to see you!)

December 2022 Webinar: The R Workflow – Dr Ryan Johnson from Posit

The R Workflow, Wednesday 21st December.

Ryan Johnson of Posit discusses the following (using a Shiny app as the end product):

- R Markdown Job Scheduling

- Pins - https://pins.rstudio.com/

- Plumber APIs

This session is a show-and-tell getting you familiar with various open source tools (Pins, Plumber) and how they can be used in combination with pro tools (Posit Connect) to improve workflows.

Resource links can be found on the NHS-R Community website: https://nhsrcommunity.com/events/december-2022-webinar-the-r-workflow-dr-ryan-johnson-from-posit/

Meetup | The Looma Project | Why I Stopped Worrying and Learned to Love the API

Led by Davis DeRodes, Director of Data Science at The Looma Project

Link to slides: https://github.com/RStudioEnterpriseMeetup/Presentations/blob/main/Looma_LearnedToLoveAPIs_PositMeetup.pdf

Source code: https://github.com/DavisDeRodesLooma/positconnect-demo-api

Helpful resources:

- Brief intro to APIs blog post: https://rigor.com/blog/what-is-an-api-a-brief-intro/
- Isabella Velasquez, Intro to APIs blog post: https://posit.co/blog/creating-apis-for-data-science-with-plumber/
- Plumber package: https://www.rplumber.io/
- Public APIs: https://github.com/public-apis/public-apis
- Aviation API that Davis used: https://docs.aviationapi.com/#
- Posit Connect: https://posit.co/products/enterprise/connect/

APIs are the ticket to getting easy access to new data sources and creating automated ways to share data externally. Even the most stats-oriented data scientist with just R and Posit Connect can do some super useful and cool things with APIs. This talk will go over some best practices and interesting use cases for querying, posting to, and creating APIs using R packages, Shiny, Plumber and the Posit Connect ecosystem.

Speaker bio: Davis DeRodes is the Director of Data Science at the retail media start-up Looma whose work focuses on the intersection of human-centric content and sales. Davis’ focuses are full stack data science, causal inference, data quality, explainability and R. Davis is an ATL-ien who currently resides in Durham, NC and is an alumnus of Columbia’s Data Science Institute.

Please note this event will be live streamed through YouTube Live and the recording will be available at the same link immediately following the event.

During the event you can ask questions through this link as well: pos.it/meetup-questions

Full playlist of meetup recordings: https://www.youtube.com/playlist?list=PL9HYL-VRX0oRKK9ByULWulAOO5jN70eXv

All upcoming events: pos.it/community-events

Model Monitors and Alerting at Scale with RStudio Connect | Adam Austin, Socure

Deploying a predictive model signals the end of one long journey and the start of another. Monitoring model performance is crucial for ensuring the long-term quality of your work.

A monitor should provide insights into model inputs and output scores, and should send alerts when something goes wrong. However, as the number of deployed models increases or your customer base grows, the maintenance of your monitor portfolio becomes daunting. In this talk we’ll explore a solution for orchestrating monitor deployment and maintenance using RStudio Connect. I will show how applications of R Markdown, Shiny, and Plumber can unburden data scientists of time-consuming report upkeep by empowering end-users to deploy, update, and own their monitors.

Timestamps:

- 2:01 - Start of presentation
- 3:43 - About Socure
- 6:12 - Model performance matters, deployment isn’t the end of the story
- 7:26 - What is a monitor?
- 8:55 - What is an alert?
- 11:00 - Monitor example
- 18:09 - Firing an alert from RStudio Connect
- 19:00 - Why monitor from RStudio Connect?
- 24:33 - How monitoring drives success at Socure
- 30:00 - Git-backed deployment in RStudio Connect
- 36:00 - Shiny app that their account managers see
- 46:00 - Architecture of a monitoring system
- 56:00 - Connect hot tip: system-wide packages
- 57:00 - Why did we try Connect for monitoring
- 59:02 - Why do we keep using it for that :)

Resources shared:

- Blastula package: https://github.com/rstudio/blastula
- connectapi package: https://github.com/rstudio/connectapi
- rsconnect package: https://rstudio.github.io/rsconnect/
- Intro to APIs blog post: https://www.rstudio.com/blog/creating-apis-for-data-science-with-plumber/

Speaker Bio: Adam Austin is a senior data scientist and RStudio administrator at Socure, a leading provider of identity verification and fraud prevention services. His work focuses on data science enablement through tools, automation, and reporting.

Tom Schenk & Bejan Sadeghian | Making Microservices Part of Your Data Team

Led by Tom Schenk & Bejan Sadeghian at KPMG

Timestamps:

- 1:09 - Start of presentation
- 4:00 - Challenges and trade-offs of a growing team (how I stopped worrying about hiring)
- 8:14 - What are microservices? (help separate out the different layers of an app)
- 9:36 - Hosting other web technologies on RStudio Connect (ex. React)
- 12:25 - Simple Hello World example of microservices
- 16:00 - Reason to separate out logging
- 17:15 - How to design & plan microservices (moving from a monolithic Shiny app)
- 17:51 - Challenges to getting started with microservices
- 21:02 - How do you address getting started? (domain driven design)
- 23:17 - Applying cloud design patterns
- 25:37 - Separation of development duties
- 27:22 - Addressing any risks that come with microservices
- 29:17 - Considering costs and benefits
- 30:11 - Microservices in action: demo of KODA app (making changes to the organization)
- 36:21 - PowerBI interacting with the same microservice from Connect
- 38:30 - Growing teams face a trade-off of complexity and simplicity (KPMG’s path)

Questions:

- 43:28 - Can you use a Shiny front end together with a microservice backend?
- 44:09 - Do you hire separately for back-end data science development and front-end Shiny UI development?
- 46:00 - Are all microservices managed by a centralized unit?
- 47:02 - Who can access RStudio Connect in your organization?
- 48:29 - When you decided to go the microservice route, what was your first step?
- 50:47 - What roles are you hiring for?
- 52:20 - Might you suggest some web service servers that host R-based or Python services?
- 53:33 - Are apps built with microservices as responsive as those that adopt a monolithic architecture or do microservices introduce a lag?
- 55:09 - Can you show the back-end response data through developer tools?
- 56:45 - Can you speak more about the logging microservice? Did you build it ground-up or did you adopt an off-the-shelf package or app?

Abstract: Whether or not you’ve heard of microservices architecture, you may want to know how microservices can help you scale R-based applications across an enterprise.

As data science teams (and their applications) grow larger, teams can experience growing pains that make applications complex, difficult to customize, or challenging to collaborate on across large teams. This meetup will discuss what microservices are, how they compare to Shiny, how they can help a data science team, and how you can deploy microservices using your RStudio Connect environment.

This meetup will help you understand several key items:

- The basic concept of microservices and their benefits, such as making your code modular, applying domain-driven design, reducing the complexity of application development, and facilitating larger development teams.
- How to use the Plumber package to deploy APIs as part of a microservices architecture.
- How you can work with front-end development teams using their preferred framework (e.g., React, Angular, Vue) using RStudio Connect.

We will show a widely-used application built using a microservices architecture and hosted in RStudio, including before-and-after comparisons to show how the strengths of a microservices framework lead to a better-looking and better-functioning application. Our team will discuss the journey and growth to arrive at the new approach to make development easier within a quickly growing group.

Speaker Bios: Tom Schenk Jr. is a researcher and author on applying technology, data, and analytics to make better decisions. He’s currently a managing director at KPMG. He has previously served as Chief Data Officer for the City of Chicago.

Bejan Sadeghian is a director of analytics at KPMG and leads data science development, which spans from advanced analytics to machine learning engineering.

For upcoming events: rstd.io/community-events-calendar Info on RStudio Connect: https://www.rstudio.com/products/connect/ To chat with RStudio: rstd.io/chat-with-rstudio

Veerle van Leemput | Analytic Health | Optimizing Shiny for enterprise-grade apps

Can you use Shiny in production? A: Yes, you definitely can.

Link to slides: https://github.com/RStudioEnterpriseMeetup/Presentations/blob/main/VeerlevanLeemput-OptimizingShiny-20220525.pdf

Packages mentioned:

- shiny: https://shiny.rstudio.com/
- pins: https://pins.rstudio.com/
- plumber: https://www.rplumber.io/
- blastula: https://github.com/rstudio/blastula
- callr: https://github.com/r-lib/callr
- shinyloadtest: https://rstudio.github.io/shinyloadtest/
- shinycannon: https://github.com/rstudio/shinycannon
- shinytest2: https://rstudio.github.io/shinytest2/
- feather: https://github.com/wesm/feather
- shinipsum: https://github.com/ThinkR-open/shinipsum
- bs4Dash: https://rinterface.github.io/bs4Dash/index.html

Timestamps:

- 2:44 - Start of presentation
- 5:41 - What qualifies as an enterprise-grade app?
- 10:46 - UI first / user experience / prototyping
- 13:20 - Separating code into separate scripts and creating code that’s easy to test
- 17:15 - Golem
- 19:28 - Functionize your code
- 20:50 - Rhino package, framework for developing enterprise-grade apps at speed
- 22:33 - Infrastructure, how do you bring this to your users? (lots of ways to do this. They do this with R, pins, plumber, rmd, blastula, and Posit Connect on Azure)
- 31:17 - Optimizing Shiny (process configuration, cache, callR, API, feather)
- 47:35 - Testing your app (shinyloadtest and shinycannon)
- 50:23 - Testing for outcomes (shinytest2)
- 52:15 - Monitor app performance & usage (blastula, shinycannon, usage metrics with Shiny app)

Questions:

- 57:38 - What’s the benefit of using pins rather than pulling the data from your database?
- 59:30 - Are there package license considerations you had to think about when monetizing shiny applications?
- 1:00:45 - Do you use promises to scale the application? (they use CallR)
- 1:01:49 - For beginners, golem or rhino?
- 1:02:50 - The myth is that only Python can be used for production apps, what made you choose to use R?
- 1:05:12 - Is feather strictly better than using JSON?
- 1:06:38 - Where do you see the line between BI (business intelligence) and Shiny for your applications?
- 1:08:36 - Any tips for enterprise-grade UI development? Making beautiful apps (bs4Dash app)
- 1:10:25 - Have you found an upper limit for users?
- 1:12:19 - Any tips for more dynamic data? (optimizing database helps here)
- 1:13:50 - Where do you install shinycannon? (on our development Linux server)
- 1:15:00 - Can you share other resources or examples of code? (Slides here with resources: https://github.com/RStudioEnterpriseMeetup/Presentations/blob/main/VeerlevanLeemput-OptimizingShiny-20220525.pdf )

For upcoming events: rstd.io/community-events-calendar Info on Posit Connect: https://www.rstudio.com/products/connect/ To chat with Posit: rstd.io/chat-with-rstudio

James Blair || Getting Started with {plumbertableau} || RStudio

- 00:00 Introduction
- 01:19 Setting up the problem - capitalizing text with a custom function
- 02:18 Using Plumber to create an API for our function
- 04:08 Using Run API + Swagger from the RStudio IDE
- 05:44 Giving Tableau access to the function with PlumberTableau
- 09:16 Reviewing what we’ve done so far
- 09:47 Comparing results between Plumber and PlumberTableau
- 10:12 Overview of what PlumberTableau does
- 14:27 Centralized hosting with RStudio Connect
- 15:17 Looking at our API in RStudio Connect
- 18:14 How to access the deployed API from Tableau
- 21:03 Overview of RStudio Connect, Tableau, and PlumberTableau process
- 21:52 More in-depth example using sample sales data
- 22:36 Example with the Python equivalent of PlumberTableau, FastAPITableau
- 25:15 Overview of how these Tableau extension packages work
- 27:21 Setting up a connection between Tableau and RStudio Connect

Read more about the plumbertableau package here: https://rstudio.github.io/plumbertableau/

And learn about the fastapitableau package here: https://rstudio.github.io/fastapitableau/

If you’re unfamiliar with Plumber, this Quickstart guide gives a good overview of the package: https://www.rplumber.io/articles/quickstart.html And you can learn more about Shiny here: https://shiny.rstudio.com/

Got questions? The RStudio Community site is a great place to get assistance: https://community.rstudio.com/

Content: James Blair (@Blair09M) Design and editing: Jesse Mostipak (@kierisi)

Music: Borough by Blue Dot Sessions https://app.sessions.blue/browse/track/89821

Barret Schloerke || Maximize computing resources using future_promise() || RStudio

- 00:00 Introduction
- 01:45 Setting up a multisession using the future package
- 02:05 Simulation using two workers
- 04:14 Simulation using 10 workers
- 05:20 What happens when we run out of workers?
- 05:35 How Shiny handles future processes like promises
- 07:16 Introduction to future_promise()
- 07:45 Demo of the promises package
- 09:21 Setting the number of workers
- 10:40 Demo of processing without future_promise()
- 14:11 Wrapping a slow calculation in a future()
- 14:53 Demo of processing using Plumber
- 16:25 Considerations on the number of cores to use
- 17:21 What happens if we run out of workers?
- 19:44 Decrease in execution times using future_promise()

In an ideal situation, the number of available future workers (future::nbrOfFreeWorkers()) is always more than the number of future::future() jobs. However, if a future job is attempted when the number of free workers is 0, then future will block the current R session until one becomes available.

The advantage of using future_promise() over future::future() is that even if there aren’t future workers available, the future is scheduled to be done when workers become available via promises. In other words, future_promise() ensures the main R thread isn’t blocked when a future job is requested and can’t immediately perform the work (i.e., the number of jobs exceeds the number of workers).
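A minimal sketch of the situation described above, where more jobs are requested than there are workers. The two-worker plan, the four jobs, and the `slow_square()` helper are arbitrary choices made for illustration and do not come from the video.

```r
library(future)
library(promises)

# Two background R sessions act as the future workers.
plan(multisession, workers = 2)

# Hypothetical slow calculation.
slow_square <- function(x) {
  Sys.sleep(1)
  x^2
}

# Request four jobs with only two workers available. future_promise()
# queues the surplus jobs and returns a promise for each one, rather than
# blocking the main R session until a worker becomes free.
jobs <- lapply(1:4, function(i) {
  future_promise(slow_square(i))
})
```

Each element of `jobs` is a promise, so inside a Shiny app or a plumber handler it can be returned or chained like any other promise, and the event loop resolves it once a worker has finished.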

You can read more about the promises package here: https://rstudio.github.io/promises/articles/shiny.html And you can learn more about Shiny here: https://shiny.rstudio.com/

Got questions? The RStudio Community site is a great place to get assistance: https://community.rstudio.com/

Content: Barret Schloerke (@schloerke) Design and editing: Jesse Mostipak (@kierisi)

Capacity Planning for Microsoft Azure Data Centers | Using R & RStudio Connect

Capacity Planning for Microsoft Azure Data Centers | An Explainable Data Science Workflow using R & RStudio Connect | Presented by Paul Chang

2:12 - Start of presentation 47:43 - Start of Q&A session

Thank you for watching! Here are a few helpful links:

- Link to Paul’s slides: https://lnkd.in/gh-hGScE

- More information on RStudio Connect: https://www.rstudio.com/products/connect/

- How to open an Azure account: https://azure.microsoft.com/en-us/

- Getting started with SAML authentication on RStudio Connect: https://support.rstudio.com/hc/en-us/articles/360022321494-Getting-Started-with-SAML-in-RStudio-Connect

- pins package: https://pins.rstudio.com/

- plumber package: https://www.rplumber.io/

- Upcoming events: rstd.io/community-events

- Chat with our team to start an RStudio Connect evaluation: rstd.io/chat-with-rstudio

Abstract: The Long Range Capacity Planning team at Microsoft is responsible for producing plans for expanding Microsoft Azure Data Centers around the world. These are multi-billion dollar plans that enable the full suite of IaaS and PaaS cloud offerings for our customers, over a 5+ year time horizon. In this talk, we will present the data science software stack that we have built using RStudio Connect and Azure, for producing these data center capacity plans. We will discuss how RStudio Connect has empowered our data scientists to connect more directly with internal stakeholders and decision makers, and how RStudio Connect has enabled us to streamline our data science and business processes.

Speaker Bio: Paul Chang, Senior Data & Applied Scientist, Microsoft

Paul Chang is the Systems Architect of the Long Range Capacity Planning team for Microsoft Azure Data Centers. He received his Applied Math PhD from Simon Fraser University and has worked in a variety of fields including Applied Functional Analysis, Hydrogen Fuel Cell modeling, and A.I. Applications in Vehicular Traffic Engineering. He was also a software engineer in SQL Azure for a couple of years.

Thank you for joining us!

- If you ever have suggestions or general feedback, please let us know! Here’s an anonymous google form: rstd.io/meetup-feedback

- We’d love to hear from you too! Here’s a talk submission form as well: rstd.io/meetup-speaker-form

- If you’d like to learn more about RStudio Connect: https://www.rstudio.com/products/connect/

- If you’re just starting to advocate for data science in general or RStudio tools: rstudio.com/champion

Leveraging R & Python in Tableau with RStudio Connect | James Blair | RStudio

Leveraging R & Python in Tableau with RStudio Connect Overview Demo / Q&A with James Blair

Tableau combines the ease of drag-and-drop visual analytics with an open, extensible platform. RStudio develops free and open tools for data science, including the world’s most popular IDE for R. RStudio also develops an enterprise-ready, modular data science platform to help data science teams using R and Python scale and share their work.

Now, with new functionality in RStudio Connect, users can have the best of both worlds. Tableau users can call R and Python APIs from Tableau calculated fields, getting access to all the power and analytic depth of these open-source data science ecosystems in real-time.

For Tableau users, this makes it easy to add dynamic, advanced analytic features from R and Python to a Tableau dashboard, such as scoring predictive models on Tableau data. They can leverage all the great work done by their organization’s data science team and even call both R and Python APIs from a single dashboard.

Data science teams can continue to use the code-first development and deployment tools from RStudio that they know and love. Using these tools, they can build and share R APIs (using the plumber package) and Python APIs (using the FastAPI framework).

Speaker Bio: James is a Solutions Engineer at RStudio, where he focuses on helping RStudio commercial customers successfully manage RStudio products. He is passionate about connecting R to other toolchains through tools like ODBC and APIs. He has a background in statistics and data science and finds any excuse he can to write R code.

A few other helpful links:

- Tableau Integration Documentation: https://docs.rstudio.com/rsc/integration/tableau/

- Tableau / RStudio Connect blog post: https://blog.rstudio.com/2021/10/12/rstudio-connect-2021-09-0-tableau-analytics-extensions/

- Embedding Shiny Apps in Tableau using shinytableau (blog post): https://blog.rstudio.com/2021/10/21/embedding-shiny-apps-in-tableau-dashboards-using-shinytableau/

- James’ slides: https://github.com/blairj09-talks/rstudio-tableau-webinar

James Blair & Barret Schloerke | Integrating R with Plumber APIs | RStudio (2020)

Full title: Expanding R Horizons: Integrating R with Plumber APIs

In this webinar we will focus on using the Plumber package as a tool for integrating R with other frameworks and technologies. Plumber is a package that converts your existing R code to a web API using unique one-line comments. Example use cases will be used to demonstrate the power of APIs in data science and to highlight new features of the Plumber package. Finally, we will look at methods for deploying Plumber APIs to make them widely accessible.
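As a rough illustration of the comment-based approach described above, the sketch below annotates a small function with `#*` comments. The `add_numbers` helper, the `/sum` route, and the file name `plumber.R` are illustrative assumptions, not material from the webinar.

```r
# plumber.R (hypothetical file name)

# Plain R helper holding the logic; the name is illustrative.
# Query-string values arrive as character strings, so convert them explicitly.
add_numbers <- function(a, b) {
  as.numeric(a) + as.numeric(b)
}

#* Return the sum of two numbers
#* @param a First number
#* @param b Second number
#* @get /sum
function(a, b) {
  list(result = add_numbers(a, b))
}

# To serve the file locally (requires the plumber package):
# plumber::pr_run(plumber::pr("plumber.R"), port = 8000)
```

With the API running, a request such as `GET /sum?a=2&b=3` should come back as a JSON object containing the sum.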

Webinar materials: https://rstudio.com/resources/webinars/expanding-r-horizons-integrating-r-with-plumber-apis/

About James: James is a Solutions Engineer at RStudio, where he focuses on helping RStudio commercial customers successfully manage RStudio products. He is passionate about connecting R to other toolchains through tools like ODBC and APIs. He has a background in statistics and data science and finds any excuse he can to write R code.

About Barret: I specialize in Large Data Visualization, where I utilize the interactivity of a web browser, the fast iterations of the R programming language, and the large data storage capacity of Hadoop.

James Blair | Democratizing R with Plumber APIs | RStudio (2019)

The Plumber package provides an approachable framework for exposing R functions as HTTP API endpoints. This allows R developers to create code that can be consumed by downstream frameworks, which may be R agnostic. In this talk, we’ll take an existing Shiny application that uses an R model and turn that model into an API endpoint so it can be used in applications that don’t speak R.
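A minimal sketch of what wrapping a model in an endpoint could look like is shown here. The linear model on `mtcars`, the `predict_mpg` helper, and the `/predict` route are stand-in assumptions; the abstract does not describe the talk's actual model or application.

```r
# Hypothetical stand-in model; fitted once when the API file is loaded.
model <- lm(mpg ~ wt + hp, data = mtcars)

# Helper that turns request parameters into a single prediction.
# Inputs are coerced to numeric because they may arrive as strings.
predict_mpg <- function(wt, hp) {
  newdata <- data.frame(wt = as.numeric(wt), hp = as.numeric(hp))
  unname(predict(model, newdata = newdata))
}

#* Predict fuel economy from weight and horsepower
#* @param wt Weight in 1000 lbs
#* @param hp Gross horsepower
#* @post /predict
function(wt, hp) {
  list(mpg = predict_mpg(wt, hp))
}
```

Any client that can send an HTTP request with `wt` and `hp` values could then obtain a prediction without running R itself, which is the kind of R-agnostic consumption the abstract refers to.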

Materials: https://bit.ly/2TXfFR5

About the author: James Blair holds a master’s degree in data science from the University of the Pacific and works as a solutions engineer. He works to integrate RStudio products in enterprise environments and support the continued adoption of R in the enterprise. His past consulting work centered around helping businesses derive insight from data assets by leveraging R. Outside of R and data science, James’s interests include spending time with his wife and daughters, cooking, camping, cycling, racquetball, and exquisite food. Also, he never turns down a funnel cake.

Jeff Allen | RStudio Connect Past, present, and future | RStudio (2019)

RStudio Connect is a publishing platform that helps to operationalize the data science work you’re doing. We’ll review the current state of RStudio Connect, including its ability to host Shiny applications and Plumber APIs, schedule and render R Markdown documents, and manage access. Then we’ll unveil some exciting new features that we’ve been working on, and give you a sneak peek at what’s coming up next.

Materials: http://rstd.io/rsc170