reticulate

R Interface to Python

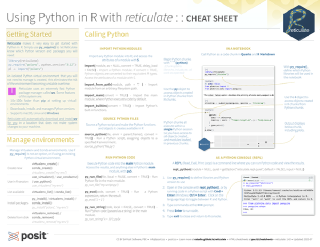

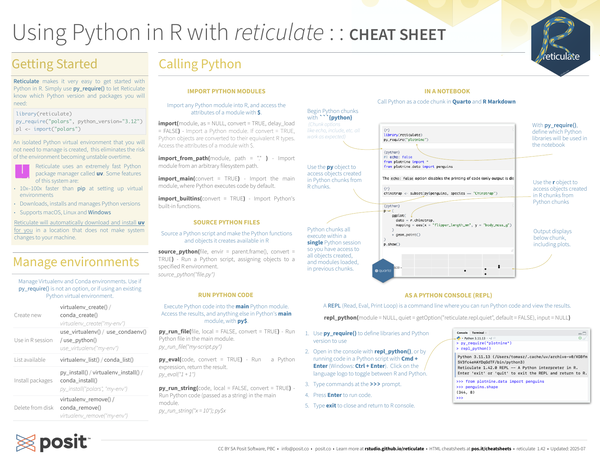

The reticulate package provides comprehensive tools for interoperability between Python and R, allowing you to call Python code from R in multiple ways including R Markdown, importing modules, sourcing scripts, and interactive Python consoles. It embeds a Python session within your R session, enabling seamless integration of both languages in a single workflow.

The package handles automatic conversion between R and Python data types, including translation between R data frames and Pandas DataFrames, R matrices and NumPy arrays, and other common objects. It supports flexible Python version management through virtual environments and Conda environments. This makes it valuable for developers and data scientists who work in both languages or collaborate on mixed-language teams, eliminating the need to choose between R and Python tools.
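As a rough illustration of the Python side of this workflow, the sketch below defines a small module that R could load through reticulate, for example with `source_python()` or `import()`. The file name `stats_helpers.py` and the function `summarize` are hypothetical. The example uses only the standard library, so the returned dict should map onto a plain R named list under reticulate's documented type conversions.

```python
# stats_helpers.py (hypothetical file name, used only for illustration)
from statistics import mean, stdev


def summarize(values):
    """Return basic summary statistics for a sequence of numbers.

    When called from R through reticulate, a multi-element R numeric
    vector arrives here as a Python list, and the returned dict is
    converted back into an R named list.
    """
    values = [float(v) for v in values]
    return {
        "n": len(values),
        "mean": mean(values),
        # stdev needs at least two observations
        "sd": stdev(values) if len(values) > 1 else None,
    }


if __name__ == "__main__":
    print(summarize([1, 2, 3, 4]))
```

From R, one would typically run `reticulate::source_python("stats_helpers.py")` and then call `summarize(c(1, 2, 3, 4))`; the result should come back as a named list with elements `n`, `mean`, and `sd`.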


Resources featuring reticulate

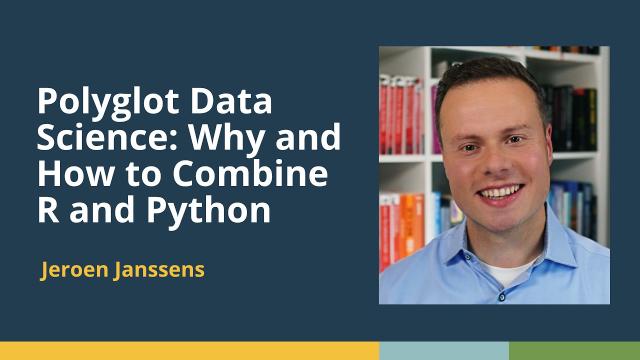

Polyglot Data Science: Why and How to Combine R and Python (Jeroen Janssens) | posit::conf(2025)


Speaker(s): Jeroen Janssens

Abstract:

Doing everything in one language is convenient but not always possible. For example, your Python app might need an algorithm only available as an R package. Or your R analysis might need to fit into a Python pipeline. What do you do? You take a polyglot approach! Many data scientists hesitate to explore beyond their main language, but combining R and Python can be powerful. In my talk, I’ll explain why polyglot data science is beneficial and address common concerns. Then, I’ll show you how to make it happen using tools like Quarto, Positron, Reticulate, and the Unix command line. By the end, you’ll gain a fresh perspective and practical ideas to start.

Data Science at the Command Line and Polars | Jeroen Janssens | Data Science Hangout

To join future data science hangouts, add it to your calendar here: https://pos.it/dsh - All are welcome! We’d love to see you!

We were recently joined by Jeroen Janssens, Senior Developer Relations Engineer at Posit, to chat about his career journey from machine learning to developer relations, the advantages of using the command line for data science, his books “Data Science at the Command Line” and “Python Polars”, and advice for aspiring DevRel professionals.

In this Hangout, we explore the benefits of working on the command line versus not. Jeroen explained that while the initial command line interface might seem stark, it offers a very different and powerful way to interact with your computer. The Unix command line is ubiquitous across various systems, from Raspberry Pis to supercomputers. Its strength lies in the ability to connect tools together through standard output and input, allowing for quick and iterative solutions by combining specialized tools. This fosters an interactive nature with a short feedback loop and provides closer interaction with the file system, making ad hoc data exploration efficient.

Resources mentioned in the video and zoom chat:

- Jeroen’s LinkedIn → https://www.linkedin.com/in/jeroenjanssens/
- Data Science at the Command Line → https://jeroenjanssens.com/dsatcl/
- Python Polars: The Definitive Guide → https://polarsguide.com/
- Plotnine → https://plotnine.org/
- Winner of the 2024 plotnine Plotting Contest → https://posit.co/blog/winner-of-the-2024-plotnine-plotting-contest/
- Talk about plotnine → https://www.youtube.com/watch?v=xdD8r84sqYY
- R for Data Science → https://r4ds.had.co.nz/
- Jeroen’s plotnine translation of R for Data Science → https://jeroenjanssens.com/plotnine/
- froggeR package → https://azimuth-project.tech/froggeR/
- Reticulate → https://rstudio.github.io/reticulate/
- Install Windows Subsystem for Linux (WSL) → https://learn.microsoft.com/en-us/windows/wsl/install
- UTM for macOS (Virtualization) → https://mac.getutm.app
- fish shell → https://fishshell.com/
- Quartodoc → https://github.com/machow/quartodoc
- Focusmate (Accountability Partner Tool) → https://www.focusmate.com/
- Surface Area of Luck → https://modelthinkers.com/mental-model/surface-area-of-luck
- CRAN R Extensions Manual → https://cran.r-project.org/doc/manuals/r-release/R-exts.html

If you didn’t join live, one great thing you missed from the zoom chat was people sharing their varied experiences with the command line, with many admitting they primarily use it for basic navigation or only when necessary, and some sharing helpful tools and tips for those less familiar. Let us know below if you’d like to hear more about this topic!

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu Follow Us Here: Website: https://www.posit.co Hangout: https://pos.it/dsh LinkedIn: https://www.linkedin.com/company/posit-software Bluesky: https://bsky.app/profile/posit.co

Thanks for hanging out with us!

Computing and recommending company-wide employee training pair decisions at scale… posit conf 2024

Regis A. James developed MAGNETRON AI, an innovative, patent-pending tool that automates at-scale generation of high-quality mentor/mentee matches at Regeneron to enable rapid, yet accurate, company-wide pairings between employees seeking skill training/growth in any domain and others specifically capable of facilitating it. Built using R, Python, LLMs, shiny, MySQL, Neo4j, JavaScript, CSS, HTML, and bash, it transforms months of manual collaborative work into days. The reticulate, bs4dash, DT, plumber API, dbplyr, and neo4r packages were particularly helpful in enabling its full-stack data science. The expert recommendation engine of the AI tool has been successfully used for training a 400-member data science community of practice, and also for larger career development mentoring cohorts for thousands of employees across the company, demonstrating its practical value and potential for wider application.

Talk by Regis A. James

Slides: https://drive.google.com/file/d/1jq-WjuFz1Lp3m6v0SYjNoW1WcCBKPMs3/view?usp=sharing Referenced talks: https://www.youtube.com/@datadrivendecisionmaking

Full Title: Computing and recommending company-wide employee training pair decisions at scale via an AI matching and administrative workflow platform developed completely in-house

Validating and Testing R Dataframes with Pandera via reticulate - R-Python Interoperability

Presented by Niels Bantilan

Original Full Title: Validating and Testing R Dataframes with Pandera via reticulate: A Case Study in R-Python Interoperability

Data science and machine learning practitioners work with data every day, analyzing and modeling it for insights and predictions. A major component of any project is data quality: the process of cleaning data and protecting against flaws that may invalidate the analysis or model. Pandera is an open source data testing toolkit for dataframes in the Python ecosystem, but can it validate R dataframes?

This talk is composed of three parts: first I’ll describe what data testing is and motivate why you need it. Then, I’ll introduce the iterative process of creating and refining dataframe schemas in Pandera. Finally, I’ll demonstrate how to use it in R with the reticulate package using a simple modeling exercise as an example.
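The idea of a dataframe schema can be sketched without any dependencies. The example below is not Pandera's API; it is a simplified, hypothetical stand-in (the names `Column`, `validate`, and the sample columns are assumptions) that checks column types and value constraints on a column-oriented table represented as a dict of lists.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Column:
    """Expected type and an optional per-value check for one column."""
    dtype: type
    check: Optional[Callable[[Any], bool]] = None


def validate(table: Dict[str, List[Any]], schema: Dict[str, Column]) -> List[str]:
    """Return a list of human-readable schema violations (empty if valid)."""
    errors = []
    for name, column in schema.items():
        if name not in table:
            errors.append(f"missing column: {name}")
            continue
        for row, value in enumerate(table[name]):
            if not isinstance(value, column.dtype):
                errors.append(f"{name}[{row}]: expected {column.dtype.__name__}")
            elif column.check is not None and not column.check(value):
                errors.append(f"{name}[{row}]: check failed for {value!r}")
    return errors


# Hypothetical schema for a small modeling dataset.
schema = {
    "age": Column(int, lambda v: 0 <= v <= 120),
    "income": Column(float, lambda v: v >= 0),
}

good = {"age": [34, 51], "income": [42000.0, 58500.5]}
bad = {"age": [34, -3], "income": [42000.0, "n/a"]}

print(validate(good, schema))  # no violations expected
print(validate(bad, schema))   # one failed check and one type error expected
```

Pandera expresses the same idea with schema objects that validate pandas DataFrames. With reticulate, an R data frame would be converted to a pandas DataFrame before such a validation step runs.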

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: R or Python? Why not both! Session Code: TALK-1123

Data Science Hangout | Javier Orraca-Deatcu, Centene | Excel to data science to lead ML engineer

We were joined by Javier Orraca-Deatcu, Lead Machine Learning Engineer at Centene.

Among many topics covered, Javier shared how his background in finance and consulting led to his interest in data science to automate some of his work - and how he helped get other data scientists together in his organization.

(26:31) How did you organize and recruit people for the data science community group at Centene?

So I sort of piggybacked from a general data science community chat that we had at the company. There were several hundred people on this of varying backgrounds and expertise levels so there was a lot of conversation happening. There was already a Python group that was meeting, I think, every other month.

So three weeks after I started, I got really excited about the possibility of potentially creating something similar for R users.

-

It started by just trying to figure out who owns that already existing data science chat and see if they could help support the idea of creating an R user group, something to meet once a month or once every two months. At larger companies especially, getting that type of top-level executive stamp of approval and support can go a long way, especially if that individual is part of the already existing IT or data science function.

-

At the time, I created a Blogdown site. For those of you who are familiar with R Markdown, Blogdown is a package that allows you to create static websites and blogs with R Markdown. Now with Quarto you can do the same thing and create websites. I love the syntax of Quarto.

-

We had partnerships with Posit, so we were able to get some people to come in and do workshops as well.

-

We also had reticulate sessions, where it was a co-branded Python & R workshop where we were looking at ways in which we can actually communicate between teams of different languages a lot easier. I had a great experience with it. Everyone was so collaborative and it was such a great way to see the excitement around what you could do with both R and Python.

I think what started as 13 users the first month jumped to about 100-125 monthly users on this monthly meetup.

(49:10) And on the journey to machine learning engineer, what was the hardest part?

Because of SQL, I had a really good understanding of at least how tabular data could be joined and the different transformations that could be done to these data objects.

I think I would have really struggled without that basic understanding. But having said that, I think the part where I really struggled at first was function writing. Function writing was not intuitive to me.

Basic function writing was, but in general I found it to be very complicated, and it took a solid three to six months of practice to feel actually comfortable with it.

Even when I started building Shiny apps: basic Shiny is quite easy, but large functions underpin the entirety of a Shiny app. Everything you do within Shiny is effectively writing functions.

The process of learning Shiny and becoming more comfortable with Shiny was very difficult and something that just took a lot of repetitions but it all sort of played together.

While people may think of Shiny more as a frontend type of system, it did make me a much better programmer in the way I thought about actual functions and function writing.

Another thing that I found hard, looking back (I’m sort of embarrassed to say this), was reproducibility of machine learning – being able to reproduce a code set and get the exact same predictions every time.

I wasn’t quite sure why this wasn’t working or how to create these fixed views, setting a seed or whatever you need to do to ensure that someone else downstream could replicate your study or analysis and get the exact same findings themselves.
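The role of a seed can be shown with a small sketch using Python's standard `random` module. The function name `noisy_predictions` is hypothetical, and the "model" here is just random noise standing in for any stochastic training or sampling step.

```python
import random


def noisy_predictions(n, seed=None):
    """Simulate a stochastic step by drawing n pseudo-random numbers.

    A dedicated Random instance keeps the seed local to this call
    instead of altering the global random state.
    """
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]


# Same seed: identical results on every run.
assert noisy_predictions(3, seed=42) == noisy_predictions(3, seed=42)

# Different seeds will, in practice, give different draws.
print(noisy_predictions(3, seed=1) != noisy_predictions(3, seed=2))
```

Passing the seed explicitly, rather than relying on global state, makes it easier for someone downstream to rerun the same step and compare results.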


R Markdown Advanced Tips to Become a Better Data Scientist & RStudio Connect | With Tom Mock

R Markdown is an incredible tool for being a more effective data scientist. It lets you share insights in ways that delight end users.

In this presentation, Tom Mock will teach you some advanced tips that will let you get the most out of R Markdown. Additionally, RStudio Connect will be highlighted, specifically how it works wonderfully with tools like R Markdown.

Please provide feedback: https://docs.google.com/forms/d/e/1FAIpQLSdOwz3yJluPR2fEqE0hBt92NtKZzzNACR8KJhHUt9rhFj3HqA/viewform?usp=sf_link

More resources if you’re interested: https://docs.google.com/document/d/1VKGs1G9GcQcv4pCYFbK68_LDh72ODiZsIxXLN0z-zD4/edit

- 04:15 Literate Programming
  - 09:00 RStudio Visual Editor Demo
  - 15:44 R and Python in same document via {reticulate}
  - 18:10 Q&A: Options for collaborative editing (version control, shared drive etc.)
  - 19:30 Q&A: Multi-pane support in RStudio
- 20:46 Data Product (reports, presentations, dashboards, websites etc.)
  - 24:15 Distill article
  - 26:27 Xaringan presentation (add three dashes `---` for new slide)
  - 28:58 Flexdashboard (with shiny)
  - 30:30 Crosstalk (talk between different html widgets instead of {shiny} server)
  - 35:03 Q&A: Jobs panel – parallelise render jobs in background
  - 36:50 Q&A: various data product packages, formats
- 39:35 Control Document (modularise data science tasks, control code flow)
  - 39:58 Knit with Parameters (YAML params: option)
  - 41:20 Reference named chunks from .R files (knitr::read_chunk())
  - 43:00 Child Documents (reuse content, conditional inclusion, {blastula} email)
- 47:07 Templating (don’t repeat yourself)
  - 47:38 rmarkdown::render() with params, looping through different param combinations
  - 49:30 Loop templates within a single document
  - 50:40 04-templating/ live code demo
  - 54:37 {whisker} vs {glue} – {{logic-less}} vs {logic templating}
  - 55:30 {whisker} for generating markdown files that you can continue editing
- 57:49 RMarkdown + RStudio Connect
- 1:00:41 Follow-up Reading and resources
- 1:04:49 Q&A - {shiny} apps, {webshot2} for screenshots of html, reading in multiple .R files, best practice for producing MS Office files, {blastula}