tidymodels

Easily install and load the tidymodels packages

tidymodels is a meta-package that provides a unified framework for modeling and statistical analysis in R, following tidyverse design principles. It loads a core set of packages that cover the complete modeling workflow, from data preprocessing to model evaluation.

The package standardizes modeling tasks through specialized tools for each step: creating train/test splits, preprocessing data with feature engineering, building models with a consistent interface across algorithms, tuning hyperparameters, and evaluating performance. It solves the problem of inconsistent interfaces across R’s modeling functions by providing a coherent grammar and shared data structures. The framework integrates seamlessly with tidyverse workflows, making it easier to build, tune, and validate predictive models.


Resources featuring tidymodels

Strategic Budget Optimization through Marketing Mix Modeling (MMM)

Stop guessing which channels drive growth.

Join Isabella Velásquez and Daniel Chen for this end-to-end workflow demo on modeling marketing spend, building ‘what-if’ scenario planners in Shiny, and deploying automated Quarto reports that give non-technical stakeholders a clear, data-backed view of ROI.

We will explore:

- The Workflow Architecture: A look at the “plumbing” required to move from data to insights.

- The Interface: Wrapping an existing model in a Shiny dashboard for real-time “what-if” scenario planning.

- The Delivery: Automating communication via Quarto reports to keep stakeholders aligned.

- The Deployment: Using Posit Connect to turn your code into an accessible business asset for leaders.

This workflow demo grew out of the incredible energy at our recent Data Science Hangout with Ryan Timpe at LEGO. We were so inspired by the community’s interest in MMM that we decided to build this session to address your specific questions. Thank you for the great discussion. We’re excited to show you what we’ve put together!

Demo References

- MMM Demo GitHub Repo (https://github.com/chendaniely/dashboard-mmm)

- MMM Demo Dashboard (https://danielchen-dashboard-mmm.share.connect.posit.cloud/)

- Ryan Timpe’s posit::conf(2023) talk, tidymodels: Adventures in Rewriting a Modeling Pipeline - posit::conf(2023) (https://www.youtube.com/watch?v=R7XNqcCZnLg)

MMM Resources

- Bayesian Media Mix Modeling for Marketing Optimization, PyMC Labs (https://www.pymc-labs.com/blog-posts/bayesian-media-mix-modeling-for-marketing-optimization)

- Modernizing MMM: Best Practices for Marketers, IAB (https://www.iab.com/wp-content/uploads/2025/12/IAB_Modernizing_MMM_Best_Practices_for_Marketers_December_2025.pdf)

- The Future of Media Mix Modeling, Measured (https://info.measured.com/hubfs/Guides/Measured_Guide%20The%20Future%20of%20Media%20Mix%20Modeling.pdf?hsCtaAttrib=184767817336)

- Media Mix Modeling: How to Measure the Effectiveness of Advertising, Hajime Takeda, PyData (https://www.youtube.com/watch?v=u4U_PUTasPQ)

- An Analyst’s Guide to MMM, Meta (https://facebookexperimental.github.io/Robyn/docs/analysts-guide-to-MMM/)

- Full Python Tutorial: Bayesian Marketing Mix Modeling (MMM) SPECIAL GUEST: PyMC Labs, Matt Dancho (https://www.youtube.com/watch?v=lJ_qq_IVUgg)

- Reading MMM Outputs: Dashboards and Decisions for Small Teams, SmartSMSSolutions (https://smartsmssolutions.com/resources/blog/business/reading-mmm-dashboards-article)

Simon Couch - Practical AI for data science

Practical AI for data science (Simon Couch)

Abstract: While most discourse about AI focuses on glamorous, ungrounded applications, data scientists spend most of their days tackling unglamorous problems in sensitive data. Integrated thoughtfully, LLMs are quite useful in practice for all sorts of everyday data science tasks, even when restricted to secure deployments that protect proprietary information. At Posit, our work on ellmer and related R packages has focused on enabling these practical uses. This talk will outline three practical AI use-cases (structured data extraction, tool calling, and coding) and offer guidance on getting started with LLMs when your data and code are confidential.

Presented at the 2025 R/Pharma Conference Europe/US Track.

Resources mentioned in the presentation:

- {vitals}: Large Language Model Evaluations https://vitals.tidyverse.org/

- {mcptools}: Model Context Protocol for R https://posit-dev.github.io/mcptools/

- {btw}: A complete toolkit for connecting R and LLMs https://posit-dev.github.io/btw/

- {gander}: High-performance, low-friction Large Language Model chat for data scientists https://simonpcouch.github.io/gander/

- {chores}: A collection of large language model assistants https://simonpcouch.github.io/chores/

- {predictive}: A frontend for predictive modeling with tidymodels https://github.com/simonpcouch/predictive

- {kapa}: RAG-based search via the kapa.ai API https://github.com/simonpcouch/kapa

- Databot https://positron.posit.co/dat

Precision Medicine for All: Using Tidymodels to Validate PRS in Brazil (Flávia Rius) | posit::conf

Precision Medicine for All: Using Tidymodels to Validate Breast Cancer PRS in Brazil

Speaker(s): Flávia E. Rius

Abstract:

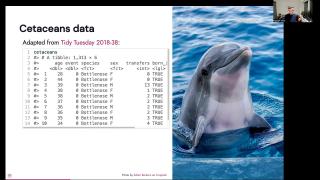

Polygenic risk scores (PRS) are a powerful way to measure someone’s risk for common diseases, such as diabetes, cardiovascular disease, and cancer. However, most PRS are developed using data from European populations, making it challenging to generalize results to other ancestries. In this talk, I’ll show how I used tidymodels tools, like yardstick, recipes, and workflows, to calculate metrics and validate a breast cancer PRS in the highly admixed Brazilian population. You will learn how to leverage tidymodels to make precision medicine more inclusive.

posit::conf(2025)

Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

The Power of Snowflake and Posit Workbench (Jonathan Regenstein, Snowflake) | posit::conf(2025)

The Power of Snowflake and Posit Workbench: Macroeconomic Data Exploration in the Cloud

Speaker(s): Jonathan Regenstein

Abstract:

In this talk, we will utilize the Posit Workbench Native App to demonstrate how macroeconomic research can be run in the Snowflake cloud, powered by R & RStudio.

Starting with data sourced from the Snowflake marketplace, we will import, transform, visualize, and, finally, model data using the Orbital framework to push tidymodels down to the cloud. This is full-stack, R-driven macroeconomic research in the cloud.

posit::conf(2025)

Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

Data Science in the Energy Industry | Frank Hull | Data Science Hangout

To join future data science hangouts, add it to your calendar here: https://pos.it/dsh - All are welcome! We’d love to see you!

We were recently joined by Frank Hull, Director of Data Science and Analytics at ACES, to chat about forecasting energy demand and prices, managing over a thousand data models, full-stack data science, and advanced machine learning techniques for time series analysis.

In this Hangout, we explore the necessity of managing a vast number of data models in the energy industry. Frank’s team at ACES oversees over a thousand models (nearly 2,000, actually!), a staggering number explained by the complexity and fragmentation of the wholesale energy market. The United States is divided into various Independent System Operators (ISOs), each possessing unique regulations and diverse resource mixes. Each of ACES’s 40+ portfolios can operate in different geographical areas within an ISO, presenting distinct challenges that necessitate individual modeling. These models are used to simulate a wide range of time horizons, from the next hour or day-ahead market to long-term financial planning and infrastructure decisions spanning 25 years. This intricate modeling helps in understanding hourly price shapes, demand patterns, supply mixes, and evaluating the effectiveness of new energy generators or hedging strategies, all with the goal of lowering variable costs for cooperatives and mitigating critical risks like blackouts during peak demand.

Resources mentioned in the video and zoom chat:

- Tidymodels → https://www.tidymodels.org/

- Orbital Project → https://orbital.tidymodels.org/

- U.S. Energy Information Administration (EIA) Open Data → https://www.eia.gov/opendata/

- Kuzco R Package → https://posit.co/blog/kuzco-computer-vision-with-llms-in-r/

If you didn’t join live, one great discussion you missed from the zoom chat was about handling imbalanced binary classification models. Participants discussed why techniques like SMOTE might not perform well in production with real-world data, shared experiences with alternative methods such as standard up/downsampling, and highlighted challenges in maintaining prediction accuracy in deployment despite strong training results. Let us know below if you’d like to hear more about this topic!

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

Follow Us Here:

- Website: https://www.posit.co

- Hangout: https://pos.it/dsh

- LinkedIn: https://www.linkedin.com/company/posit-software

- Bluesky: https://bsky.app/profile/posit.co

Thanks for hanging out with us!

Timestamps:

- 00:00 Introduction

- 04:05 “What’s ISO?”

- 08:20 “What are your go to models for analysis in the energy field?”

- 10:48 “Do you tend to use traditional stochastic models for time series analysis or more of the recent ML methods?”

- 13:30 “What is a full stack data scientist? What’s the overlap between a full stack data scientist and something like an ML engineer or a data engineer?”

- 18:38 “Is there a specific data science skill set that’s needed to get into energy analysis?”

- 19:59 “What is the portfolio model?”

- 23:36 “How have you found convincing regulators and other stats oriented stakeholders to trust and believe your AI fancy machine learning models that they can’t really dive in and and prove to themselves that that’s being statistically valid? Or have you found some good ways to demonstrate that?”

- 26:50 “Are there any good examples of open data in energy?”

- 27:54 “How are you keeping on top of the documentation for all of these models? Over a thousand models is a lot. Is there any learning you could share from that experience to help other people keep on top of their documentation?”

- 30:33 “How would you suggest handling missing data in time series forecasting?”

- 33:10 “Do you see long term electricity prices decreasing in the next twenty five years due to the abundance of renewables like wind and solar in lower population areas?”

- 35:14 “Do you have any career advice?”

- 36:50 “How do you see data science evolving within the energy industry?”

- 38:39 “How do you keep up to date on new packages?”

Deploying Scikit-learn models for in-database scoring with Snowflake and Posit Team

Modern data science workflows often face a critical challenge: how to efficiently deploy machine learning models where the data lives. Traditional approaches require moving large datasets out of the database for scoring, creating bottlenecks, security concerns, and unnecessary data movement costs.

In this demo, Nick Pelikan at Posit highlights how orbital bridges the gap between Python’s rich ML ecosystem and database-native execution by converting scikit-learn pipelines to optimized SQL. This enables data scientists to:

- Use familiar Python tools for model development

- Automatically translate complex feature engineering to SQL

- Deploy models without rewriting code in different languages

- Maintain model accuracy through faithful SQL translations

While this example focuses on Python, Nick also gave this demo using Orbital for R users: https://youtu.be/pnEjYNgOG9c?feature=shared

Helpful resources:

- Follow-along blog post: https://posit.co/blog/snowflake-orbital-scikit-learn-models/

- Supported models for Orbital: https://orbital.tidymodels.org/articles/supported-models.html

- Orbital (Python) GitHub Repo: https://github.com/posit-dev/orbital

- Orbital (R) GitHub Repo: https://github.com/tidymodels/orbital

- Databricks and Orbital for R and Python model deployment: https://posit.co/blog/databricks-orbital-r-python-model-deployment/

- Emily Riederer’s XGBoost example with Orbital: https://www.emilyriederer.com/post/orbital-xgb/

- Sara Altman’s blog post on Shiny + Databases: https://posit.co/blog/shiny-with-databases/

- Emil’s introduction of orbital: https://emilhvitfeldt.com/talk/2024-08-13-orbital-positconf/

- Nick’s Blog on Running Tidymodel Prediction Workflows with Orbital: https://posit.co/blog/running-tidymodel-prediction-workflows-inside-databases/

If you’d like to learn more about using Posit Team, you can always schedule time to chat with Posit here: https://posit.co/schedule-a-call/

Easier data and asset sharing across projects and teams with {pins} and Databricks

Led by Edgar Ruiz, Software Engineer at Posit PBC

April 30th at 11 am ET / 8 am PT

Sharing data assets can be challenging for many teams. Some may rely on emailed files to keep analyses up to date, making it difficult to keep current or know what version of the data is used. {pins} improves sharing data and other assets across projects and teams. It enables us to publish, or ‘pin’, to a variety of places, such as Amazon S3, Posit Connect and Dropbox.

Given recent customer feedback, the ability to publish, or ‘pin’, to Databricks Volumes has been added to the R version of {pins}. The same capability is also currently in the works for the Python version of {pins}.

This session on April 30th will showcase the acceleration of predictions by distributing a ‘pinned’ model using pins and Spark in Databricks. We’ll walk through integrating {pins} with Databricks in your team’s projects and cover novel uses of pins inside the Databricks ecosystem.

GitHub repo: https://github.com/edgararuiz/talks/tree/main/end-to-end

Here are a few additional resources that you might find interesting:

- Pins for R: https://pins.rstudio.com/

- Pins for Python: https://rstudio.github.io/pins-python/

- More information on how Posit and Databricks work together: https://posit.co/use-cases/databricks/

- Customer Spotlight: Standardizing a safety model with tidymodels, Posit Team & Databricks at Suffolk Construction: https://youtu.be/yavHEWpgrCQ

- Q&A Recording: https://youtube.com/live/HDTDmEaK5zQ?feature=share

Standardizing a safety model with tidymodels, Posit Team & Databricks at Suffolk Construction

If you’ve ever struggled with standardizing machine learning workflows, ensuring secure data access, or scaling insights across your organization, this month’s Posit Team Workflow demo is for you.

Maxwell Patterson, Data Scientist at Suffolk, walked us through how their team is:

- Standardizing model workflows using tidymodels, vetiver, Shiny, and Quarto

- Leveraging row-level permissions in Shiny apps to improve data governance

- Using Databricks and Posit to gain insights faster and more securely

A few helpful links for this demo:

- Suffolk Customer Spotlight: https://posit.co/about/customer-stories/suffolk/

- Quarto email customization: https://docs.posit.co/connect/user/quarto/#email-customization

- Vetiver package: https://rstudio.github.io/vetiver-r/reference/vetiver_deploy_rsconnect.html

- Pins package: https://pins.rstudio.com/

- Tidymodels “meta-package”: https://tidymodels.tidymodels.org/

- More information on how Posit and Databricks work together: https://posit.co/use-cases/databricks/

Do you use both Databricks and Posit, but not together yet? You can use this link to chat more with our team: https://pos.it/chat-databricks

Q&A Recording: https://youtube.com/live/zU-bBUJMyQ4?feature=share

To add future workflow demos on your calendar: https://pos.it/team-demo

^ These demos happen the last Wednesday of every month

The Power of Snowflake and Posit Workbench: Macroeconomic Data Exploration in the Cloud

In this live event, we will utilize the Posit Workbench Native App to demonstrate that macroeconomic research can be run in the Snowflake cloud but powered by R and RStudio.

Starting with data sourced from the Snowflake marketplace, we will import, transform, visualize, and, finally, model data using the Orbital framework to push tidymodels down to the cloud. This is full-stack, R-driven macroeconomic research in the cloud.

Add the event to your calendar: https://evt.to/eugmedshw

Learn more about the Snowflake and Posit partnership: https://posit.co/use-cases/snowflake/

Wes McKinney & Hadley Wickham (on cross-language collaboration, Positron, career beginnings, & more)

Posit PBC hosted a special event with Wes McKinney (Pandas & Apache Arrow) and Hadley Wickham (rstats & tidyverse), where data community members could ask questions, share their thoughts, and exchange insights about cross-language collaboration.

Here’s a preview into what came up in conversation:

- Cross-language collaboration between R and Python

- Positron, a new polyglot data science IDE

- Open source development, how Wes and Hadley got involved in open source and their experiences in building and maintaining open-source projects such as Pandas and the tidyverse.

- Documentation for R and Python, especially in the context of teams that use both languages (shoutout to Quarto!)

- The use of LLMs in data science

- The emergence of libraries like Polars and DuckDB

- Challenges of switching between the two languages

- Package development and maintenance for polyglot teams that have internal packages in both languages

- The future of data science

The chat was on fire for this conversation and we’ve gathered most of the links shared among the community below:

Documentation mentioned:

- Positron, next-generation data science IDE built by Posit: https://positron.posit.co/

- Quarto tabset documentation: https://quarto.org/docs/output-formats/html-basics.html#tabset-groups

Packages / Extensions mentioned:

- Pins: https://pins.rstudio.com/

- Vetiver: https://vetiver.posit.co

- Orbital: https://orbital.tidymodels.org

- Elmer: https://elmer.tidyverse.org

- Tabby Extension: https://quarto.thecoatlessprofessor.com/tabby/

Blog posts:

- AI chat apps with Shiny for Python: https://shiny.posit.co/blog/posts/shiny-python-chatstream/

- Using an LLM to enhance a data dashboard written in Shiny: R Sidebot & Python Sidebot

- Marco Gorelli Data Science Hangout (polars): https://youtu.be/lhAc51QtTHk?feature=shared

- Emily Riederer’s blog post on Polars: https://www.emilyriederer.com/post/py-rgo-polars/

- Jeffrey Sumner’s tabset example: https://rpy.ai/posts/visualizations%20with%20r%20and%20python/r_python_visualizations

- Emily Riederer’s blog post on Python and R ergonomics: https://www.emilyriederer.com/post/py-rgo/

- Sam Tyner’s blog post on Lessons from “Tidy Data”: https://medium.com/@sctyner90/10-lessons-from-tidy-data-on-its-10th-anniversary-dbe2195a82b7

Other:

- Hadley Wickham’s cocktails website: https://cocktails.hadley.nz

- Posit subscription management to find out about new tools, events, etc.: https://posit.co/about/subscription-management/

New to Posit? Posit builds enterprise solutions and open source tools for people who do data science with R and Python. (We are also the company formerly called RStudio) We’d love to have you join us for future community events!

Every Thursday from 12-1pm ET we host a Data Science Hangout with the community and invite you to join us! You can add that event to your calendar with this link: https://www.addevent.com/event/Qv9211919

Tidymodel prediction workflows inside databases with orbital and Snowflake

Nick Pelikan, Senior Solution Architect at Posit, highlighted how you can:

- Fit and deploy R models directly in Snowflake

- Use Snowflake’s compute to drastically reduce model runtime

- Save and share models across your team, making them accessible through tools like Snowsight and Python

This end-to-end workflow makes it easier to implement models and scale insights across your organization using Posit and Snowflake.

You can learn more about the workflow in our blog as well→ https://posit.co/blog/running-tidymodel-prediction-workflows-inside-databases/

Helpful links:

- Q&A Recording → https://youtube.com/live/VWKRYdikf7s?feature=share

- Blog post highlighting this example → https://posit.co/blog/running-tidymodel-prediction-workflows-inside-databases/

- orbital package → https://orbital.tidymodels.org/

- Want to chat more about using Posit & Snowflake together? → https://posit.co/schedule-a-call/?booking_calendar__c=Snowflake

To add future Workflow Demo events to your calendar → https://www.addevent.com/event/Eg16505674

Simon Couch - From hours to minutes: Accelerating your tidymodels code

From hours to minutes: Accelerating your tidymodels code - Simon Couch

Abstract: This talk demonstrates a 145-fold speedup in training time for a machine learning pipeline with tidymodels through 4 small changes. By adapting a grid search on a canonical model to use a more performant modeling engine, hooking into a parallel computing framework, transitioning to an optimized search strategy, and defining the grid to search over carefully, users can drastically cut down on the time to develop machine learning models with tidymodels without sacrificing predictive performance.

Resources mentioned in the talk:

- Presentation slides https://simonpcouch.github.io/rpharma-24/#/

- GitHub repository for talk https://github.com/simonpcouch/rpharma-24

- Efficient Machine Learning with R: Low-Compute Predictive Modeling with tidymodels https://emlwr.org

- Optimizing model parameters faster with tidymodels https://www.simonpcouch.com/blog/2023-08-04-parallel-racing/

Presented at the 2024 R/Pharma Conference

Emil Hvitfeldt - Tidypredict with recipes, turn workflow to SQL, spark, duckdb and beyond

Tidypredict is one of my favorite packages. Being able to turn a fitted model object into an equation is very powerful! However, in tidymodels, we use recipes more and more to do preprocessing. So far, tidypredict didn’t have support for recipes, which severely limited its uses. This talk is about how I fixed that issue. After spending a couple of years thinking about this problem, I finally found a way! Being able to turn a tidymodels workflow into a series of equations for prediction is super powerful. For some uses, being able to turn a model to predict inside SQL, spark or duckdb allows us to handle some problems with more ease.
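
To make the idea of turning a fitted workflow into an equation concrete, here is a minimal hand-rolled sketch in Python. It is not the tidypredict or orbital API: the function names, the `cars` table, the column names, and all coefficients are hypothetical. It only shows how a preprocessing step (standardizing a column) and a linear model can be folded into a single SQL expression that a database could evaluate.

```python
def standardize(column, mean, sd):
    """SQL fragment that centers and scales a column (a stand-in for a preprocessing step)."""
    return f"(({column} - {mean!r}) / {sd!r})"


def linear_predictor(intercept, coefs):
    """Combine an intercept and {sql_fragment: coefficient} pairs into one SQL expression."""
    terms = [repr(intercept)]
    terms += [f"({beta!r} * {fragment})" for fragment, beta in coefs.items()]
    return " + ".join(terms)


# Hypothetical fitted values: mpg was standardized before fitting, wt was used as-is.
mpg_scaled = standardize("mpg", 20.0, 5.0)
expr = linear_predictor(1.5, {mpg_scaled: -0.25, "wt": 2.0})

# The whole preprocessing + model pipeline is now a single column expression.
query = f"SELECT {expr} AS pred FROM cars"
print(query)
```

Because the prediction is plain arithmetic on columns, the same expression can in principle run wherever the data lives. A real implementation would also need to quote or validate column names, which this sketch skips.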

Talk by Emil Hvitfeldt

Slides: https://emilhvitfeldt.github.io/talk-orbital-positconf/

GitHub Repo: https://github.com/EmilHvitfeldt/talk-orbital-positconf/tree/main

Hannah Frick - tidymodels for time-to-event data

Time-to-event data can show up in a broad variety of contexts: the event may be a customer churning, a machine needing repairs or replacement, a pet being adopted, or a complaint being dealt with. Survival analysis is a methodology that allows you to model both aspects, the time and the event status, at the same time. tidymodels now provides support for this kind of data across the framework.

Talk by Hannah Frick

Slides: https://hfrick.github.io/2024-posit-conf/

GitHub Repo: https://github.com/hfrick/2024-posit-conf

Max Kuhn - Evaluating Time-to-Event Models is Hard

Censoring can frequently occur when we have time-to-event data. For example, if we order a pizza that has not yet arrived after 5 minutes, it is censored; we don’t know the final delivery time, but we know it is at least 5 minutes. Censored values can appear in clinical trials, customer churn analysis, pet adoption statistics, or anywhere a duration of time is used. I’ll describe different ways to assess models for censored data and focus on metrics requiring an evaluation time (i.e., how well does the model work at 5 minutes?). I’ll also describe how you can use tidymodels’ expanded features for these data to tell if your model fits the data well. This talk is designed to be paired with the other tidymodels talk by Hannah Frick.
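
As a rough illustration of why censored observations need special handling, the sketch below computes a Kaplan-Meier estimate for the pizza example in plain Python. This is a swapped-in, textbook technique, not the metrics discussed in the talk and not tidymodels code; the delivery times are made up for illustration.

```python
def kaplan_meier(times, delivered):
    """Kaplan-Meier curve as [(t, S(t))] at each observed event time.

    times: minutes each order was observed.
    delivered: True if the pizza arrived at that time, False if the order was
    still outstanding when we stopped watching (censored).
    """
    event_times = sorted({t for t, d in zip(times, delivered) if d})
    surv = 1.0
    curve = []
    for t in event_times:
        at_risk = sum(1 for u in times if u >= t)  # orders still being watched at t
        events = sum(1 for u, d in zip(times, delivered) if d and u == t)
        surv *= 1 - events / at_risk
        curve.append((t, surv))
    return curve


def survival_at(curve, t):
    """Estimated probability that the pizza has NOT arrived by time t."""
    s = 1.0
    for u, value in curve:
        if u <= t:
            s = value
    return s


# Hypothetical data: two orders are censored (at 5 and 10 minutes).
times = [3, 5, 5, 8, 10, 12]
delivered = [True, False, True, True, False, True]

curve = kaplan_meier(times, delivered)
km_estimate = survival_at(curve, 5)

# Naive alternative: drop censored orders and look only at completed deliveries.
done = [t for t, d in zip(times, delivered) if d]
naive_estimate = sum(1 for t in done if t > 5) / len(done)

print(f"P(not arrived by 5 min): KM = {km_estimate:.3f}, naive = {naive_estimate:.3f}")
```

The censored orders still count as "at risk" up to the moment they were last observed, so the two estimates differ (about 0.667 versus 0.5 here). Choosing the evaluation time, 5 minutes in this sketch, is what an evaluation-time metric makes explicit.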

Talk by Max Kuhn

Slides: https://topepo.github.io/2024-posit-conf/

GitHub Repo: https://github.com/topepo/2024-posit-conf

Simon Couch - Fair machine learning

In recent years, high-profile analyses have called attention to many contexts where the use of machine learning deepened inequities in our communities. After a year of research and design, the tidymodels team is excited to share a set of tools to help data scientists develop fair machine learning models and communicate about them effectively. This talk will introduce the research field of machine learning fairness and demonstrate a fairness-oriented analysis of a machine learning model with tidymodels.

Talk by Simon Couch

Slides: https://simonpcouch.github.io/conf-24

GitHub Repo: https://github.com/simonpcouch/conf-24

Live Q&A following Workflow Demo - June 26th

Please join us for the live Q&A session for the June 26th Workflow Demo - this will start immediately following the demo.

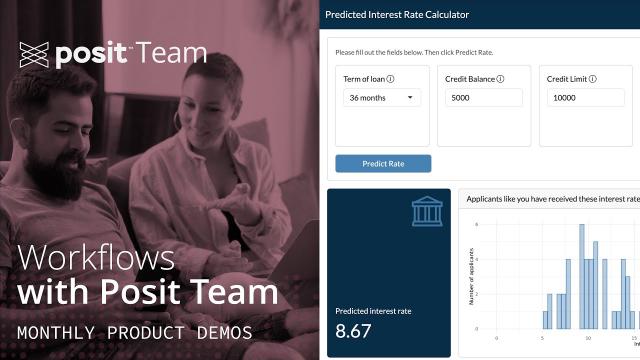

Predicting Lending Rates with Databricks, tidymodels, and Posit Team

Anonymous questions: https://pos.it/demo-questions

Demo: 11 am ET [Happening here! https://youtu.be/qIzKJKcmh-s?feature=shared]

Q&A: ~11:35 am ET

Predicting Lending Rates with Databricks, tidymodels, and Posit Team

Machine learning algorithms are reshaping financial decision-making, changing how the industry manages financial risk.

For our workflow demo today on June 26th at 11 am ET, Garrett Grolemund at Posit will show how to use both Posit and Databricks to apply machine learning methods to the consumer credit market, where accurately predicting lending rates is critical for customer acquisition.

*Please note that while the workflow focuses on a financial example, the general workflow will be useful to those using Databricks and R together across any industry.

During this workflow demo, you will learn how to:

- Connect to historical lending rate data stored in Databricks Delta Lake

- Tune and cross-validate a penalized linear regression (LASSO) that predicts interest rates

- Select variables with the penalized linear regression model (LASSO)

- Build an interactive Shiny app to provide a customer-facing user interface for our model

- Deploy the app to production on Posit Connect, and arrange for the app to access Databricks
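
The variable-selection behavior of the LASSO mentioned in the list above can be sketched in a few lines of plain Python. This is a generic coordinate-descent illustration, not the code from the demo; the tiny dataset, the penalty value, and the function names are all made up, and there is no intercept or tuning step.

```python
def soft_threshold(z, lam):
    """Shrink z toward zero by lam; values within [-lam, lam] become exactly 0."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


def lasso_cd(X, y, lam, n_iter=100):
    """Coordinate descent for (1/(2n)) * ||y - X b||^2 + lam * ||b||_1 (no intercept)."""
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            # Residual with feature j's current contribution left out.
            r = [y[i] - sum(X[i][k] * beta[k] for k in range(p) if k != j)
                 for i in range(n)]
            rho = sum(X[i][j] * r[i] for i in range(n)) / n
            norm = sum(X[i][j] ** 2 for i in range(n)) / n
            beta[j] = soft_threshold(rho, lam) / norm
    return beta


# Hypothetical data: the outcome depends strongly on the first column (3.0)
# and only weakly on the second (0.1).
X = [[1.0, 1.0], [-1.0, 1.0], [1.0, -1.0], [-1.0, -1.0]]
y = [3.1, -2.9, 2.9, -3.1]

beta = lasso_cd(X, y, lam=0.5)
print(beta)
```

With this penalty the weak predictor's coefficient is set exactly to zero while the strong one is only shrunk, which is the sense in which a penalized regression "selects" variables. In practice the penalty is chosen by tuning with cross-validation, as the list describes.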

Resources for the demo:

- GitHub repo for today’s materials: https://github.com/posit-dev/databricks-finance-app

- Accompanying Guide: https://pub.demo.posit.team/public/predicting-lending-rates/lending-rate-prediction.html

- Q&A Recording: https://youtube.com/live/wNI3AhHP7uM

Additional follow-up links:

- GitHub Repo: https://github.com/posit-dev/databricks-finance-app

- Accompanying Guide: https://pub.demo.posit.team/content/fec42b3d-3aa9-43e1-8312-0ff553d09851/lending-rate-prediction.html

- While this demo uses the odbc package to connect to Databricks, you can also use the sparklyr R package. Learn more about both here: https://docs.posit.co/ide/server-pro/user/rstudio-pro/guide/databricks.html

- Example using sparklyr instead of ODBC: https://posit.co/blog/reporting-on-nyc-taxi-data-with-rstudio-and-databricks/

- Posit Workbench provides additional features for managing Databricks credentials. Learn more here: https://docs.posit.co/ide/server-pro/user/posit-workbench/guide/databricks.html#databricks-with-r

- For more on the Posit x Databricks partnership: https://posit.co/solutions/databricks/

- Blog post on Edgar’s workshop on Databricks at conf: https://posit.co/blog/using-databricks-with-r-conf-workshop/

- Solutions article on ODBC and Databricks: https://solutions.posit.co/connections/db/databases/databricks/

Want to chat more with Posit? To talk with Posit about integrating Posit & Databricks: https://posit.co/schedule-a-call/?booking_calendar__c=DatabricksJune2024Demo

Had fun and want to join again? You can add the monthly recurring event to your calendar with this link: https://pos.it/team-demo

Conformal Inference with Tidymodels - posit::conf(2023)

Presented by Max Kuhn

Conformal inference theory enables any model to produce probabilistic predictions, such as prediction intervals. We’ll demonstrate how these analytical methods can be used with tidymodels. Simulations will show that the results have good coverage (i.e., a 90% interval should include the real point 90% of the time).
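
The coverage claim can be checked with a small simulation. The sketch below uses split conformal inference with a simple least-squares line in plain Python; it is an illustration of the general technique under made-up simulation settings, not the tidymodels implementation shown in the talk.

```python
import math
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b


def conformal_halfwidth(abs_residuals, level):
    """Split-conformal quantile of absolute residuals from a calibration set."""
    n = len(abs_residuals)
    k = math.ceil((n + 1) * level)  # rank with the finite-sample correction
    # If k exceeded n the interval would be unbounded; here we simply cap it.
    return sorted(abs_residuals)[min(k, n) - 1]


def simulate(n, rng):
    """Hypothetical data-generating process: linear trend plus Gaussian noise."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2.0 + 0.5 * x + rng.gauss(0, 1) for x in xs]
    return xs, ys


rng = random.Random(2023)
x_train, y_train = simulate(500, rng)  # used to fit the model
x_cal, y_cal = simulate(500, rng)      # held out to calibrate the interval width
x_new, y_new = simulate(5000, rng)     # fresh points to check coverage

a, b = fit_line(x_train, y_train)
resid = [abs(y - (a + b * x)) for x, y in zip(x_cal, y_cal)]
q = conformal_halfwidth(resid, 0.90)

# The 90% interval for a new x is [prediction - q, prediction + q].
covered = sum(abs(y - (a + b * x)) <= q for x, y in zip(x_new, y_new))
coverage = covered / len(x_new)
print(f"half-width = {q:.2f}, empirical coverage = {coverage:.3f}")
```

The only model-specific part is `fit_line`; the calibration step works with any model that produces point predictions, which is why the approach applies so broadly. The empirical coverage should land close to the nominal 90%, up to sampling noise.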

Presented at Posit Conference, between Sept 19-20 2023. Learn more at posit.co/conference.

Talk Track: Tidy up your models. Session Code: TALK-1085

Making a (Python) Web App is easy! - posit::conf(2023)

Presented by Marcos Huerta

Making Python Web apps using Dash, Streamlit, and Shiny for Python

This talk describes how to make distribution-free prediction intervals for regression models via the tidymodels framework.

By creating and deploying an interactive web application you can better share your data, code, and ideas easily with a broad audience. I plan to talk about several Python web application frameworks, and how you can use them to turn a class, function, or data set visualization into an interactive web page to share with the world. I plan to discuss building simple web applications with Plotly Dash, Streamlit, and Shiny for Python.

Materials:

- Comprehensive talk notes here: https://marcoshuerta.com/posts/positconf2023/

- https://www.tidymodels.org/learn/models/conformal-regression/

- https://probably.tidymodels.org/reference/index.html#regression-predictions

Corrections: In my live remarks, I said a Dash callback can have only one output: that is not correct, a Dash callback can update multiple outputs. I was trying to say that a Dash output can only be updated by one callback, but even that is no longer true as of Dash 2.9. https://dash.plotly.com/duplicate-callback-outputs

Presented at Posit Conference, between Sept 19-20 2023. Learn more at posit.co/conference.

Talk Track: The future is Shiny. Session Code: TALK-1086

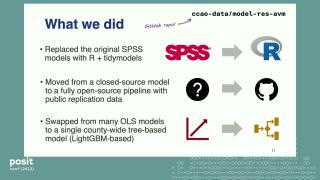

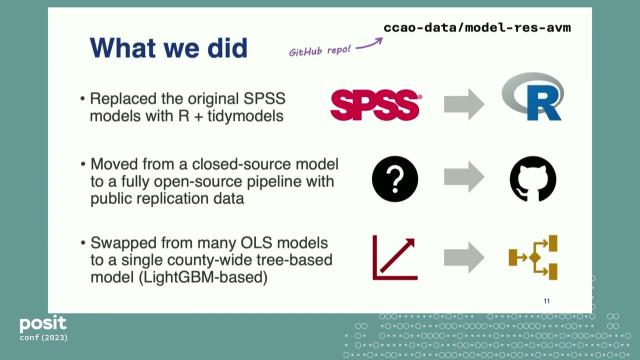

Open Source Property Assessment: Tidymodels to Allocate $16B in Property Taxes - posit::conf(2023)

Presented by Nicole Jardine and Dan Snow

How the Cook County Assessor’s Office uses R and tidymodels for its residential property valuation models.

The Cook County Assessor’s Office (CCAO) determines the current market value of properties for the purpose of property taxation. Since 2020, the CCAO has used R, tidymodels, and LightGBM to build predictive models that value Cook County’s 1.5 million residential properties, which are collectively worth over $400B. These predictive models are open-source, easily replicable, and have significantly improved valuation accuracy and equity over time.

Join CCAO Chief Data Officer Nicole Jardine and Director of Data Science Dan Snow as they walk through the CCAO’s modeling process, share lessons learned, and offer a sneak peek at changes planned for the 2024 reassessment of Chicago.

Materials: https://github.com/ccao-data

Presented at Posit Conference, between Sept 19-20 2023. Learn more at posit.co/conference.

Talk Track: End-to-end data science with real-world impact. Session Code: TALK-1147

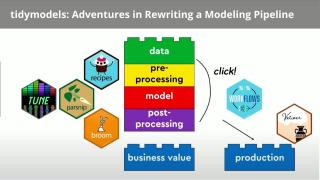

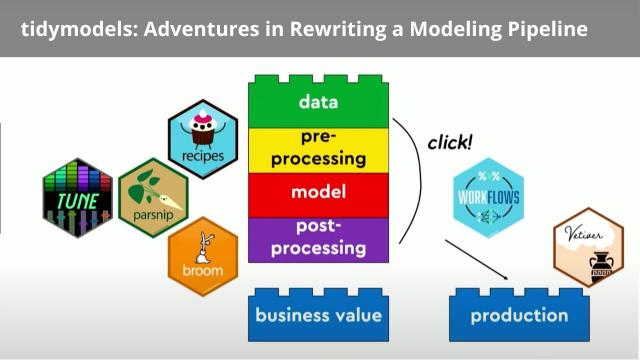

tidymodels: Adventures in Rewriting a Modeling Pipeline - posit::conf(2023)

Presented by Ryan Timpe

An overview of the benefits unlocked on our data science team by adopting tidymodels.

Data science sure has changed over the past few years! Everyone’s talking about production. RStudio is now Posit. Models are now tidy.

This talk is about embracing that change and updating existing models using the tidymodels framework. I recently completed this change, letting go of our in-production code and revisioning it with tidymodels. My team ended up with a faster, more scalable pipeline that enabled us to better automate our workflow and increase our scale while improving our stakeholders’ experiences.

I’ll share tips and tricks for adopting the tidymodels framework in existing products, best practices for learning and upskilling teams, and advice for using tidymodels packages to build more accessible data science tools.

Materials: https://www.ryantimpe.com/files/tidymodels_adventures_positconf2023.pdf

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Tidy up your models. Session Code: TALK-1082

webR 0.2: R Packages and Shiny for WebAssembly | George Stagg | Posit

webR makes it possible to run R code in the browser without the need for an R server to execute the code: the R interpreter runs directly on the user’s machine. But just running R isn’t enough; you also need the R packages you use every day.

webR 0.2.0 makes many new packages available (10,324 packages - about 51% of CRAN!) and it’s now possible to run Shiny apps under webR, entirely client side.

George Stagg shares how to load packages with webR, know what ones are available, and get started running Shiny apps in the web browser. There’s a demo webR Shiny app too!

- 00:15 Loading R packages with webR

- 01:50 Wasm system libraries available for use with webR

- 05:30 Tidyverse, tidymodels, geospatial data, and database packages available

- 08:00 Shiny and httpuv: running Shiny apps under webR

- 11:05 Example Shiny app running in the web browser

- 12:05 Links with where to learn more

Shiny webR demo app: https://shinylive.io/r/examples/

Website: https://docs.r-wasm.org/

webR REPL example: https://webr.r-wasm.org/latest/

Demo webR Shiny app in this video: https://shiny-standalone-webr-demo.netlify.app/

Source: https://github.com/georgestagg/shiny-standalone-webr-demo/

See the overview of what’s new in webR 0.2.0: https://youtu.be/Mpq9a6yMl_w

Bite-sized tricks for machine learning with tidymodels | Posit

The tidymodels framework is a collection of R packages for modeling and machine learning using tidyverse principles. This video highlights a number of tidymodels features that could improve your modeling workflows.

- 0:03 Switching modeling engines is easy

- 0:21 Never lose your tuning results

- 0:36 Built-in visualizations for modeling objects

- 1:03 Grouped resampling

- 1:16 Case weights

- 1:32 Select variables based on role and type

- 2:00 Spatial resampling

- 2:16 Keep your tidymodels objects small

Learn more at https://www.tidymodels.org/

How to train, evaluate, and deploy a machine learning workflow with tidymodels & Posit Team

Helpful resources:

- GitHub: https://github.com/simonpcouch/mutagen

- Follow-up Q&A Session: https://youtube.com/live/vwBVOBQfc_U

- If you want to book a call with our team to chat more about Posit products: pos.it/chat-with-us

- Don’t want to meet, but curious who else on your team is using Posit? pos.it/connect-us

- Blog post on tidymodels + Posit Connect: https://posit.co/blog/pharmaceutical-machine-learning-with-tidymodels-and-posit-connect/

- Tidy Modeling with R book: https://www.tmwr.org/

Timestamps:

- 1:44 - Three steps for developing a machine learning model

- 3:35 - What is a machine learning model?

- 7:02 - Overview of machine learning with Posit Team

- 7:36 - Step 1: Understand and clean data

- 11:05 - Step 2: Train and evaluate models (why you might be interested in using tidymodels)

- 23:02 - Step 3: Deploying a machine learning model from Posit Workbench to Posit Connect

- 30:14 - Summary

- 31:21 - Helpful resources

Machine learning models are all around us, from Netflix movie recommendations to Zillow property value estimates to email spam filters.

As these models play an increasingly large role in our personal and professional lives, understanding and embracing them has never been more important; machine learning helps us make better, data-driven decisions.

The tidymodels framework is a powerful set of tools for building—and getting value out of—machine learning models with R.

Data scientists use tidymodels to:

- Gain access to a wide variety of machine learning methods

- Guard against common mistakes

- Easily deploy models through tidymodels’ integration with vetiver

Join Simon Couch from the tidyverse team on Wednesday, October 25th at 11am ET as he walks through an end-to-end machine learning workflow with Posit Team.

No registration is required to attend - simply add it to your calendar using this link: pos.it/team-demo

Workflow Demo Q&A - Oct 25th

Live Q&A for the end-to-end machine learning workflow with tidymodels & Posit Team on October 25th.

Machine Learning with Tidymodels & Posit Team Demo: https://www.youtube.com/watch?v=O0Dklq-IZhw&list=PL9HYL-VRX0oRsUB5AgNMQuKuHPpNDLBVt&index=1

Emil Hvitfeldt - Slidecraft: The Art of Creating Pretty Presentations

Slidecraft: The Art of Creating Pretty Presentations by Emil Hvitfeldt

Visit https://rstats.ai/nyr to learn more.

Abstract: Do you want to make slides that catch the eye of the room? Are you tired of using defaults when making slides? Are you ready to spend every last hour of your life fiddling with CSS and JS? Then this talk is for you! Making slides with Quarto and revealjs is a breeze and comes with many tools and features. This talk gives an overview of how you can improve the visuals of your slides with the highest effect-to-effort ratio.

Bio: Emil Hvitfeldt is a software engineer at Posit and part of the tidymodels team’s effort to improve R’s modeling capabilities. He maintains several packages within the realms of modeling, text analysis, and color palettes. He co-authored the book Supervised Machine Learning for Text Analysis in R with Julia Silge.

Twitter: https://twitter.com/Emil_Hvitfeldt

Presented at the 2023 New York R Conference (July 13, 2023)

posit::conf(2023) Workshop: Advanced tidymodels

Register now: http://pos.it/conf

Instructor: Max Kuhn, Software Engineer, Posit

Workshop Duration: 1-Day Workshop

This workshop is for you if you:

- have used tidymodels packages like recipes, rsample, and parsnip

- are comfortable with tidyverse syntax (e.g. piping, mutates, pivoting)

- have some experience with resampling and modeling (e.g., linear regression, random forests, etc.), but we don’t expect you to be an expert in these

In this workshop, you will learn more about model optimization using the tune and finetune packages, including racing and iterative methods. You’ll be able to do more sophisticated feature engineering with recipes. Time permitting, model ensembles via stacking will be introduced. This course is focused on the analysis of tabular data and does not include deep learning methods.

Participants who have completed the “Introduction to tidymodels” workshop will be well-prepared for this course. Participants who are new to tidymodels will benefit from taking the “Introduction to tidymodels” workshop before joining this one.

posit::conf(2023) Workshop: Introduction to tidymodels

Register now: http://pos.it/conf

Instructors: Hannah Frick, Simon Couch, Emil Hvitfeldt

Workshop Duration: 1-Day Workshop

This workshop is for you if you:

- have intermediate R knowledge, experience with tidyverse packages, and either of the R pipes

- can read data into R, transform and reshape data, and make a wide variety of graphs

- have had some exposure to basic statistical concepts such as linear models, random forests, etc.

Intermediate or expert familiarity with modeling or machine learning is not required.

This workshop will teach you core tidymodels packages and their uses: data splitting/resampling with rsample, model fitting with parsnip, measuring model performance with yardstick, and basic pre-processing with recipes. Time permitting, you’ll be introduced to model optimization using the tune package. You’ll learn tidymodels syntax as well as the process of predictive modeling for tabular data.
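As a rough illustration of how the four packages named above fit together, here is a minimal sketch. It is not taken from the workshop materials: the built-in `mtcars` data, the chosen predictors, and the use of the workflows package to bundle the recipe and model are all assumptions made for the example.

```r
library(tidymodels)

set.seed(123)

# rsample: hold out 25% of the rows for testing
car_split <- initial_split(mtcars, prop = 0.75)
car_train <- training(car_split)
car_test  <- testing(car_split)

# recipes: basic pre-processing (center and scale the numeric predictors)
car_rec <- recipe(mpg ~ wt + hp + disp, data = car_train) |>
  step_normalize(all_numeric_predictors())

# parsnip: a linear regression specification using the "lm" engine
lm_spec <- linear_reg() |>
  set_engine("lm")

# workflows (an assumption here, not named in the text): bundle and fit
car_fit <- workflow() |>
  add_recipe(car_rec) |>
  add_model(lm_spec) |>
  fit(data = car_train)

# yardstick: default regression metrics on the held-out rows
car_preds <- augment(car_fit, new_data = car_test)
metrics(car_preds, truth = mpg, estimate = .pred)
```

The recipe is estimated on the training rows only, so the test set is scaled with training statistics when predictions are made.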

Hannah Frick | Censored - Survival Analysis in Tidymodels | Posit (2022)

tidymodels is extending support for survival analysis and censored is a new parsnip extension package for survival models. It offers various types of models: parametric models, semi-parametric models like the Cox model, and tree-based models like decision trees, boosted trees, and random forests. They all come with the consistent parsnip interface so that you can focus on the modelling instead of details of the syntax. Happy modelling!
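To give a sense of what the consistent parsnip interface looks like for a survival model, here is a small sketch of a Cox model fit. It is not from the talk: the `lung` data set (shipped with the survival package) and the choice of predictors are assumptions for illustration.

```r
library(censored)  # attaches parsnip and survival as well

# A Cox proportional hazards specification using the "survival" engine
cox_spec <- proportional_hazards() |>
  set_engine("survival")

# Fit with the usual parsnip fit() call; the outcome is a Surv() object
cox_fit <- fit(cox_spec, Surv(time, status) ~ age + sex, data = lung)

# Predict on the linear predictor scale for a few rows
cox_preds <- predict(cox_fit, new_data = lung[1:3, ], type = "linear_pred")
cox_preds
```

Swapping in a different model type should only require changing the specification, while the `fit()` and `predict()` calls keep the same shape.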

Talk materials are available at https://hfrick.github.io/rstudio-conf-2022

Session: Updates from the tidymodels team

Kelly Bodwin | Translating from {tidymodels} and scikit-learn: Lessons from a ‘bilingual’ course

The friendly competition between R and Python has gifted us with two stellar packages for workflow-style predictive modeling: tidymodels in R, and scikit-learn in Python. When I had to choose between them for a Machine Learning Course, I said: ¿Por qué no los dos? (Why not both?)

In this talk, I will share how the differences in structure and syntax between tidymodels and scikit-learn impacted student understanding. Can a helper function hide an important decision about tuning parameters? Can a slight change in argument input influence the way we describe a model? The answer is a resounding, “¡Sí!”

Don’t despair, though, because I will also provide advice for avoiding pitfalls when switching between languages or implementations. Together, let’s think about the power that programming choices have to shape the mental model of the user, and the ways that we can responsibly document our modeling decisions to increase cross-language reproducibility.

Talk materials are available at https://www.kelly-bodwin.com/talks/rsconf22/

Session: Teaching data science

Data Science Hangout | Michael Chow, Posit | Exploring Team Structure w/ Data Scientists & Engineers

We were joined by Michael Chow, Data Scientist and Software Engineer at RStudio. Michael also previously led a team at the California Integrated Travel Project.

On this week’s hangout there were a lot of thoughts shared on structuring a data science team from both Michael and the broader group:

⬢ Jacqueline Nolis also shared thoughts on this in another Data Science Hangout: there are virtues to the different structures, but she ended up sold on the decentralized model, where data scientists are embedded in teams: https://youtu.be/CcPE29bYGVo?t=325

⬢ Michael agreed that data scientists and analysts should be sitting with the teams that they’re pushing out reports for. Otherwise, you end up trying to send people into those teams to figure out their priorities.

⬢ A data scientist should work with a Project Manager or whoever’s leading the team to push up metrics but also help change the roadmap.

⬢ It leaves a tricky question of where data engineers should be and how they should interact with the team. Today data engineers are often doing more tooling empowerment, so it can be okay to have them a bit more centralized and connect to the data scientists to enforce best practices or enable new pieces for them.

⬢ I think a nice model is for data scientists/analysts to live in the teams and data engineers to be like spokes of a wheel, where the data scientists connect with them and work closely to enforce best practices and enable new, important things.

⬢ Tatsu shared that in thinking of the structure, it’s also important to find your translators and to use the power of feedback. Reach out to those people to start to put that feedback into action.

⬢ George shared that insurance companies have come from a really traditional landscape where they have lots of actuaries working on lots of Excel spreadsheets, and there can be a lack of knowledge sharing and tool sharing. This is where the data science element comes in. To me, within the organization, you need to have this team, which is a mini-spoke if you will, because they are central to the actuarial team. If they are too far removed and they’re back with the IT team, you end up with the old problems because the business concepts may not get communicated back. It’s all about getting enough skills so they can get stuff done, especially proofs of concept. Maybe after that you can take a step back and then start to look at the centralized model again.

⬢ A central team can help converge to what they see as best practice, but if you’re pushing out something new, exploring a new line of work or area it can be important to set the data engineer there to actually do whatever they need to. Make sure that the converging doesn’t stifle creativity or prevent a team from doing the right thing.

⬢ Manny jumped in to share the perspective of data science sitting with IT as well. Data science is a new field for their company (in real estate), and there’s an identity question of where data science falls. The IT team is fantastic and they’re very structured. Data science is so fluid, creative, and unstructured at the moment, so you kind of have to look at where it actually should fall.

Please note that some of the points above are summarized and are not exact quotes.

Resources shared:

⬢ Tatsu shared in the chat a few projects that Michael is working on: vetiver: https://vetiver.tidymodels.org/articles/vetiver.html , siuba: https://github.com/machow/siuba

⬢ Libby shared a helpful tip on creating a 2-minute YouTube video with a cover letter to get the attention of a hiring manager

⬢ Javier shared an example Shiny app used in an interview: https://javierorraca.shinyapps.io/Bloomreach_Shiny_App/

⬢ Michael mentioned David Robinson’s screencasts: https://www.youtube.com/channel/UCeiiqmVK07qhY-wvg3IZiZQ

⬢ Michael mentioned an article on “What data scientists really do according to 35 data scientists”: https://hbr.org/2018/08/what-data-scientists-really-do-according-to-35-data-scientists

⬢ Rachael shared a blog post link where Jacqueline Nolis talked about team structure as well: https://www.rstudio.com/blog/building-effective-data-science-team-answering-your-questions/#Structure

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

► Add the Data Science Hangout to your calendar: rstd.io/datasciencehangout

► View the Data Science Hangout site here: rstudio.com/data-science-hangout

Follow Us Here:

Website: https://www.rstudio.com

LinkedIn: https://www.linkedin.com/company/rstudio-pbc

Twitter: https://twitter.com/rstudio

Julia Silge | Monitoring Model Performance | RStudio

- 0:00 Project introduction

- 1:50 Overview of the setup code chunk

- 3:05 Getting new data

- 4:05 Getting model from RStudio Connect using httr and jsonlite

- 6:20 Bringing in metrics

- 9:45 Using the pins package

- 10:50 Using boards on RStudio Connect

- 13:30 Benefits of using pins

- 14:00 Visualizations using ggplot and plotly

- 17:00 Knitting the flexdashboard

- 18:10 Project takeaways

You can read Julia’s blogpost, Model Monitoring with R Markdown, pins, and RStudio Connect, here: https://blog.rstudio.com/2021/04/08/model-monitoring-with-r-markdown/

Modelops playground GitHub repo: https://github.com/juliasilge/modelops-playground

pins package documentation: https://pins.rstudio.com/

flexdashboard documentation: https://rmarkdown.rstudio.com/flexdashboard/

tidymodels documentation: https://www.tidymodels.org/

Max Kuhn | parsnip A tidy model interface | RStudio (2019)

parsnip is a new tidymodels package that generalizes model interfaces across packages. The idea is to have a single function interface for each specific type of model (e.g. logistic regression) that lets the user choose the computational engine for training. For example, logistic regression could be fit with several R packages, Spark, Stan, and TensorFlow. parsnip also standardizes the return objects and sets up some new features for some upcoming packages.
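The idea of one model function with a swappable engine can be sketched as follows. This is an illustrative example rather than code from the talk: the `mtcars` data, the recoded `am` outcome, and the chosen predictors are assumptions, and the Stan specification is only declared (fitting it would additionally require the rstanarm package).

```r
library(parsnip)

# Classification outcomes must be factors; recode transmission type
cars <- mtcars
cars$am <- factor(cars$am, labels = c("automatic", "manual"))

# Same model type, two engines: only set_engine() changes
spec_glm  <- logistic_reg() |> set_engine("glm")
spec_stan <- logistic_reg() |> set_engine("stan")  # declared only, not fit here

# Fit the glm-based specification
fit_glm <- fit(spec_glm, am ~ wt + hp, data = cars)

# Predictions come back as a tibble with standardized column names
probs <- predict(fit_glm, new_data = cars[1:3, ], type = "prob")
probs
```

Because the return objects are standardized, downstream code that consumes `probs` should not need to change when the engine does.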

VIEW MATERIALS https://github.com/rstudio/rstudio-conf/tree/master/2019/Parsnip--Max_Kuhn

About the Author: Max Kuhn

Dr. Max Kuhn is a Software Engineer at RStudio. He is the author or maintainer of several R packages for predictive modeling including caret, Cubist, C50 and others. He routinely teaches classes in predictive modeling at rstudio::conf, Predictive Analytics World, and useR!, and his publications include work on neuroscience biomarkers, drug discovery, molecular diagnostics and response surface methodology. He and Kjell Johnson wrote the award-winning book Applied Predictive Modeling in 2013.