tidyverse

Easily install and load packages from the tidyverse

The tidyverse package provides a single command to install and load a collection of R packages that share common data structures and design principles. It bundles core packages for data analysis workflows, including tools for visualization (ggplot2), manipulation (dplyr), tidying (tidyr), import (readr), and functional programming (purrr).

The package solves the problem of managing multiple dependencies by loading nine core packages at once and providing utilities to check for package conflicts and updates. It also installs additional packages for working with specific data types (dates, times, factors, strings) and importing data from various sources (Excel, SPSS, JSON, web APIs). The shared API design across all tidyverse packages means they work together seamlessly without requiring different syntax or data structure conversions.

Resources featuring tidyverse

Coding vs. thinking programmatically | Samia Baig | Data Science Hangout

ADD THE DATA SCIENCE HANGOUT TO YOUR CALENDAR HERE: https://pos.it/dsh - All are welcome! We’d love to see you!

This week’s guest was Samia Baig, Senior Data Scientist/Data Engineer at Johnson & Johnson Innovative Medicine!

Some topics covered in this week’s Hangout were transitioning from a background in pharmacy and public health to a data career in pharma, distinguishing the responsibilities of data scientists versus analytics engineers, strategies for making data pipelines more robust (and convincing your team that you NEED robust pipelines in the first place), and the value of joining open-source communities like Tidy Tuesday.

Resources mentioned in the video and chat:

- Posit Data Science Lab → https://pos.it/dslab

- Tidy Tuesday GitHub Repository → https://github.com/rfordatascience/tidytuesday

- {dbplyr} → https://dbplyr.tidyverse.org/

- The Missing Semester of Your CS Education → https://missing.csail.mit.edu/

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

Follow Us Here: Website: https://www.posit.co Hangout: https://pos.it/dsh The Lab: https://pos.it/dslab LinkedIn: https://www.linkedin.com/company/posit-software Bluesky: https://bsky.app/profile/posit.co

Thanks for hanging out with us!

Timestamps

- 00:00 Introduction

- 03:22 “Do you feel like analytics engineer is a good descriptor for what you do?”

- 04:44 “How did you get into data from being on a pharmacist’s job path?”

- 11:55 “What was it like in the move that you made from public health to pharma?”

- 16:57 “What do you say are distinguishing factors between data science and engineering?”

- 20:16 “What are the most popular tools that you and your team use, in your job at J&J?”

- 24:00 “What do you use SQL in?”

- 27:40 “How would you go about convincing a team of the need for a more robust pipeline?”

- 31:10 “Can you define robust?”

- 33:31 “Do you happen to have any specific resources or strategies or examples that might help students or others with that mindset of thinking programmatically?”

- 37:06 “Are there any non data science skills that are very helpful in your either current or former job?”

- 40:23 “Is there any kind of community among data scientists across the whole company?”

- 45:44 “What are your biggest data challenges that you have?”

- 46:12 “If you had a magic wand, what problem would you solve in that area?”

- 49:52 “What is a piece of career advice that maybe you wish you could go back in time and give yourself?”

Data analysis with Posit AI-assistants | Sara Altman & Simon Couch | Data Science Lab

The Data Science Lab is a live weekly call. Register at pos.it/dslab! Discord invites go out each week on live calls. We’d love to have you!

The Lab is an open, messy space for learning and asking questions. Think of it like pair coding with a friend or two. Learn something new, and share what you know to help others grow.

On this call, Libby Heeren is joined by Sara Altman who walks through using Posit’s AI assistants to analyze data, including a sneak peek at Posit Assistant, and Simon Couch drops by to give us a demo of the reviewer package! Together, Sara and Simon author the Posit AI Newsletter, the best place to stay up-to-date with all the cool tools and advice on staying an informed and level-headed AI user.

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Sara Altman, Simon Couch

- Sara’s Bluesky: https://bsky.app/profile/sara-altman.bsky.social

- Sara’s LinkedIn: https://www.linkedin.com/in/sarakaltman/

- Sara’s GitHub: https://github.com/skaltman

- Posit AI Newsletter by Sara and Simon: https://posit.co/blog/?category=roundups

Resources from the hosts and chat:

- Positron IDE → https://positron.posit.co/

- Databot Extension → https://positron.posit.co/databot.html

- Getting started with Positron Assistant → https://positron.posit.co/assistant-getting-started.html

- Posit Assistant (Private Beta) → https://posit-ai-beta.share.connect.posit.cloud/

- Reviewer Package (by Simon Couch) → https://github.com/simonpcouch/reviewer

- ellmer Package → https://elmer.tidyverse.org/

- chatlas Package → https://github.com/posit-dev/chatlas

- Read the Posit AI Newsletter → https://posit.co/blog/?category=roundups

- Sign up to get the Posit AI Newsletter → http://pos.it/ai-news

- Simon’s blog post about local LLMs not quite being ready for primetime → https://posit.co/blog/local-models-are-not-there-yet/

- Join the waitlist for Posit AI in RStudio → https://posit.co/products/ai/

- Posit AI Known Issues & FAQs → https://posit-ai-beta.share.connect.posit.cloud/#frequently-asked-questions-faqs

- Blog post from Simon and Sara about Privacy and LLMs → https://posit.co/blog/trust-llm-tools/

- DS Lab YouTube playlist → https://youtube.com/playlist?list=PL9HYL-VRX0oSeWeMEGQt0id7adYQXebhT&si=7tmU6EAJpO5S7GBh

Thanks for learning with us!

Timestamps

- 00:00 Introduction

- 07:23 “Would you mind real quick just briefly explaining the differences between Positron Assistant and Databot?”

- 15:01 “Is there any way to configure reasoning efforts when signing in with GitHub Copilot?”

- 15:49 “Does Databot already support other providers beyond Cloud?”

- 20:36 “What is the cases with monetary penalty in the console output?”

- 22:14 “Do you happen to know if the column names of the dataset are very, very messy?”

- 23:18 “Can you add skills to Databot?”

- 26:36 “This code isn’t being saved anywhere. So where does it go?”

- 27:38 “There a way to know what all the slash commands are?”

- 28:51 Requesting Databot to use the namespace operator

- 33:58 “Is there a way to search within that Databot pane?”

- 39:34 “Have you noticed any time differences with how quickly things run in RStudio versus Positron?”

- 40:33 “What happens if you open that URL that it mentions at the bottom in your browser?”

- 40:50 Clarifying the difference between Posit Assistant and Positron Assistant

- 43:18 “What is the typical token burn rate?”

- 53:31 “Is this on CRAN and working in both Positron and RStudio?”

The mall package: using LLMs with data frames in R & Python | Edgar Ruiz | Data Science Lab

On this call, Libby Heeren is joined by Edgar Ruiz as they walk through how mall works (with ellmer) in R and then in Python. The mall package lets you use LLMs to process tabular data or vectors, so you can, for example, feed it a column of reviews and ask mall to use an Anthropic model via ellmer to add a column of summaries or sentiments. Follow along with the code here: https://github.com/LibbyHeeren/mall-package-r

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Edgar Ruiz

- Edgar’s Bluesky: https://bsky.app/profile/theotheredgar.bsky.social

- Edgar’s LinkedIn: https://www.linkedin.com/in/edgararuiz/

- Edgar’s GitHub: https://github.com/edgararuiz

Resources from the hosts and chat:

- Ollama → https://ollama.com/download

- Posit Data Science Lab → https://posit.co/dslab

- mall package → https://mlverse.github.io/mall/

- ellmer package → https://elmer.tidyverse.org/

- Libby’s Positron theme (Catppuccin) → https://marketplace.visualstudio.com/items?itemName=Catppuccin.catppuccin-vsc

- GitHub repo with Libby and Edgar’s code → https://github.com/LibbyHeeren/mall-package-r

- LLM providers supported by ellmer → https://ellmer.tidyverse.org/index.html#providers

- vitals package → https://vitals.tidyverse.org/

- chatlas package → https://posit-dev.github.io/chatlas/

- polars package → https://pola.rs/

- narwhals package → https://narwhals-dev.github.io/narwhals/

- pandas package → https://pandas.pydata.org/

- LM Studio → https://lmstudio.ai/

- Simon Couch’s blog → https://www.simonpcouch.com/

- Edgar’s dataset: TidyTuesday Animal Crossing Dataset (May 5, 2020) → https://github.com/rfordatascience/tidytuesday

- Libby’s dataset: Kaggle Tweets Dataset → https://www.kaggle.com/datasets/mmmarchetti/tweets-dataset

- Blog from Sara and Simon on evaluating LLMs → https://posit.co/blog/r-llm-evaluation-03/

- Data Science Lab YouTube playlist → https://www.youtube.com/watch?v=LDHGENv1NP4&list=PL9HYL-VRX0oSeWeMEGQt0id7adYQXebhT&index=2

- AWS Bedrock → https://aws.amazon.com/bedrock/

- Anthropic → https://www.anthropic.com/

- Google Gemini → https://gemini.google.com/

- What is rubber duck debugging anyway?? → https://en.wikipedia.org/wiki/Rubber_duck_debugging

Timestamps

- 00:00 Introduction to Libby, Isabella, Edgar, and the mall package + ellmer package

- 07:14 “What’s the difference between using mall for these NLP tasks versus traditional or classical NLP?”

- 09:37 “Can mall be used with a local LLM?”

- 17:32 “What kind of laptop specs should I realistically have to make good use of these models?”

- 22:12 “Are you limited to three output options?”

- 22:55 “Can mall return the prediction probabilities?”

- 24:14 “What are a rule of thumb set of specs for a machine so local LLMs are practically feasible?”

- 24:47 “Would that be in the additional prompt area where you’re defining things?”

- 25:04 “You could use the vitals package to compare models, right?”

- 25:24 “Can we use LM Studio instead of Ollama?”

- 28:35 “How do you iterate and validate the model?”

- 36:39 “Why use paste if it is all text?”

- 37:31 “Are these recent tweets (from X) or older ones from actual Twitter?”

- 40:23 “Is there a playlist for the Data Science Labs on YouTube?”

- 46:11 “Does that mean that the python version does not work with pandas?”

- 50:14 “Where is this data set from?”

Getting Started with LLM APIs in R

Getting Started with LLM APIs in R - Sara Altman

Abstract: LLMs are transforming how we write code, build tools, and analyze data, but getting started with directly working with LLM APIs can feel daunting. This workshop will introduce participants to programming with LLM APIs in R using ellmer, an open-source package that makes it easy to work with LLMs from R. We’ll cover the basics of calling LLMs from R, as well as system prompt design, tool calling, and building basic chatbots. No AI or machine learning background is required—just basic R familiarity. Participants will leave with example scripts they can adapt to their own projects.

Resources mentioned in the workshop:

- Workshop site: https://skaltman.github.io/r-pharma-llm/

- ellmer documentation: https://ellmer.tidyverse.org/

- shinychat documentation: https://posit-dev.github.io/shinychat/

Inspecting websites to find JSON data APIs | Marcos Huerta | Data Science Lab

On this call, Libby Heeren is joined by Marcos Huerta, a Data Science Manager at CarMax, as he walks us through the guts of websites looking for data we can play with. He shows us how to find hidden REST/JSON APIs by using the web inspector in Safari/Firefox and then how to get what’s necessary to pull the same data programmatically in Python or R.
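As a rough illustration of that last step, here is a minimal Python sketch of what pulling the same data programmatically might look like once the Network tab has revealed a JSON endpoint. It is not the code from the call: it uses only the standard library (`urllib`) rather than whatever Postman generates, the helper names `build_request` and `fetch_json` are made up for this example, and the URL and header values in the usage note are placeholders rather than a real API.

```python
import json
from urllib.request import Request, urlopen


def build_request(url, headers=None):
    """Build a GET request that mimics what the browser sent.

    `headers` holds whatever you copied from the web inspector
    (for example Accept or User-Agent). Hypothetical helper.
    """
    return Request(url, headers=headers or {})


def fetch_json(request, timeout=10):
    """Send the request and decode the JSON body into Python objects."""
    with urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Usage would be along the lines of `fetch_json(build_request("https://example.com/api/feed?id=1", {"Accept": "application/json"}))`, with the real endpoint and headers swapped in. As the call points out, check rate limits and terms of use before automating requests like this.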

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Daniel Chen

Marcos’s urls: Website: https://marcoshuerta.com GitHub: https://github.com/astrowonk/

Resources from the hosts and from participants in the Discord chat:

- Postman: https://www.postman.com/

- Insomnia (open source alternative to Postman): https://insomnia.rest/

- Baseball Savant website Marcos is using: https://baseballsavant.mlb.com/gamefeed/?gamePk=777076

- Isabella Velasquez’s blog on using the {polite} R package to help scrape Wikipedia: https://ivelasq.rbind.io/blog/politely-scraping/

- Festivitas Mac app Marcos used to add the lights to his desktop: https://festivitas.app/

- Ted Laderas’s blog post on parsing JSON in R: https://laderast.github.io/intro_apis_json_cascadia/#/how-does-r-translate-json

- New rvest read_html_live() function: https://rvest.tidyverse.org/reference/read_html_live.html

- yyjsonr R package: https://github.com/coolbutuseless/yyjsonr

- tuber R package: https://github.com/gojiplus/tuber

- WikipediaR R package: https://www.quantargo.com/help/r/latest/packages/WikipediaR/1.1/WikipediaR-package

- rookiepy Python package: https://pypi.org/project/rookiepy/

Timestamps

- 00:00 Introduction

- 03:05 Web scraping vs. API calls

- 04:12 Server-side rendering vs. client-side JSON

- 06:12 Warning: Rate limits and business ethics (ahem)

- 08:39 Demo: Baseball Savant website

- 08:57 Using browser Developer Tools and the Network tab

- 12:15 “What is curl?”

- 13:30 Importing curl into Postman

- 16:03 Generating Python code from Postman

- 16:50 “Are there open source alternatives to Postman?”

- 17:50 Using the generated code in Python/Jupyter

- 22:28 R packages for JSON (jsonlite, yyjsonr)

- 25:09 Demo: Massachusetts Lottery website

- 28:17 Example: scripts Marcos automated with Cron jobs

- 30:17 Handling logins and cookies with rookiepy

- 32:19 Demo: CNN Election Data

- 34:26 Inspecting ESPN’s website

- 36:58 “Can you scrape YouTube?”

- 38:19 Finding hidden JSON in CardsMania history

- 45:00 Benefits of API inspection over Beautiful Soup

- 46:59 New rvest function: read_html_live

- 50:40 Inspecting LinkedIn and finding GraphQL

- 53:58 Encouragement on handling API pagination

Open source development practices | Isabel Zimmerman & Davis Vaughan | Data Science Hangout

We were recently joined by Isabel Zimmerman and Davis Vaughan, Software Engineers at Posit, to chat about the life of an open source developer, strategies for navigating complex codebases, and how to leverage AI in data science workflows. Plus, NERDY BOOKS!

In this Hangout, we explore the differences between maintaining established ecosystems like the Tidyverse and building new tools like the Positron IDE. Davis and Isabel (and sometimes Libby) share practical advice for developers, such as the utility of AI for writing tests and “rubber ducking”, and their various approaches to writing accessible documentation that bridges the expert-novice gap.

Resources mentioned in the video and zoom chat:

- Positron IDE → https://posit.co/positron/

- Air (R formatter) → https://posit-dev.github.io/air/

- Python Packages Book (free) → https://py-pkgs.org/

- R Packages Book (free) → https://r-pkgs.org/

- DeepWiki (AI tool mentioned for docs) → https://deepwiki.com/tidyverse/vroom

If you didn’t join live, one great discussion you missed from the zoom chat was about Brandon Sanderson’s Cosmere books and the debate between starting with Mistborn vs. The Stormlight Archive. Are you a Cosmere fan?! Which book did you start with? (Libby started with Elantris years before picking up Mistborn Era 1 book 1, but she’d now recommend maybe starting with Warbreaker!)

Timestamps:

- 00:00 Introduction

- 04:41 “What does a day in the life of an open source dev look like?”

- 09:43 “What got you into building your own R packages?”

- 13:00 “Personal tips for working with code bases you’re not familiar with?”

- 16:35 “How much of what you build is in R/Python vs. lower-level languages?”

- 19:57 “Does Air work inside code chunks in Positron?”

- 20:12 “Changing the Python Quarto formatter in Positron without an extension”

- 22:56 “What do your side projects look like?”

- 26:40 “How do you approach writing documentation?”

- 30:55 “What interesting trends in data science are you noticing?”

- 33:38 “How do you leverage AI in your work?”

- 37:30 “What are the hexes on Davis’s back wall?”

- 38:50 “What career advice would you give to someone in a similar position?”

- 43:45 “How can I be more resilient when things go wrong?”

- 47:59 “Do you have keyboard preferences?”

- 49:25 “What is the best way to report bugs in packages?”

- 50:56 “Open source dev work vs. in-house dev work”

- 51:50 “Tips for getting started with Positron”

Advent of Code for R users | Emil Hvitfeldt | Data Science Lab

On this call, Libby Heeren is joined by Posit engineer Emil Hvitfeldt as he walks through Day 1 of Advent of Code 2025 using R. This is a super friendly, collaborative, and cheery intro to AoC! Don’t forget, you can do Advent of Code at any ole time of year.

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Daniel Chen, Emil Hvitfeldt

Emil’s socials and urls:

- Website: https://emilhvitfeldt.com/

- GitHub: https://github.com/emilhvitfeldt

- Bluesky: https://bsky.app/profile/emilhvitfeldt.bsky.social

- LinkedIn: https://www.linkedin.com/in/emilhvitfeldt/

Resources from the hosts and chat:

- Advent of Code: https://adventofcode.com/

- Install Positron: https://positron.posit.co/

- Eric Wastl, Advent of Code: Behind the Scenes: https://www.youtube.com/watch?v=_oNOTknRTSU

- AoC Subreddit: https://www.reddit.com/r/adventofcode/

- Kieran Healy shared a reddit post with an Advent of Code answer done in Minecraft: https://www.reddit.com/r/adventofcode/comments/1pbeyxx/2025_day_01_part_2_advent_of_code_in_minecraft/

- Emil’s Solutions: https://github.com/EmilHvitfeldt/rstats-adventofcode

- Emil’s helper package: https://github.com/EmilHvitfeldt/aocfuns

- purrr::accumulate() function: https://purrr.tidyverse.org/reference/accumulate.html

And, for anyone hangin’ in there at the end, Emil updated us on Discord that he figured out why his cumsum() didn’t work: he forgot to start the dial at 50! Once you fix that, it works to solve part 1 :)
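To see why the starting position matters, here is a small Python sketch of the cumulative-sum idea (the call itself used R, and this is not Emil’s code). It assumes a dial with positions 0 to 99, instructions written like `L68` or `R48`, and a Part 1 answer that counts how often the dial rests on 0 after a rotation; those details, the function names, and the sample instructions are illustrative assumptions, not taken from the puzzle input.

```python
from itertools import accumulate

DIAL_SIZE = 100  # assumption: the dial has positions 0..99
START = 50       # per the note above, the dial starts at 50


def parse_rotation(instruction):
    """Turn 'L68' into -68 and 'R48' into +48 (assumed input format)."""
    direction, distance = instruction[0], int(instruction[1:])
    if direction not in ("L", "R"):
        raise ValueError(f"unexpected direction in {instruction!r}")
    return -distance if direction == "L" else distance


def dial_positions(instructions, start=START, size=DIAL_SIZE):
    """Position after each rotation: running total from `start`, wrapped by modulo."""
    steps = [parse_rotation(i) for i in instructions]
    totals = accumulate(steps, initial=start)
    # Drop the first element, which is the starting position itself.
    return [total % size for total in totals][1:]


def count_zero_stops(instructions, start=START, size=DIAL_SIZE):
    """Count how many rotations leave the dial pointing at 0."""
    return sum(1 for position in dial_positions(instructions, start, size) if position == 0)
```

With the made-up instructions `["L50", "R100", "L1", "R1"]`, starting at 50 gives positions 0, 0, 99, 0, so three stops on zero. Starting the running total at 0 instead gives 50, 50, 49, 50 and no zero stops at all, which is the kind of silent miss a forgotten starting value produces. Python’s `%`, like R’s `%%`, returns a non-negative result for a positive modulus, so left turns past zero wrap around correctly.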

Timestamps

- 00:00 Introduction

- 01:01 Tour of the Advent of Code website

- 02:30 Dashboard overview and puzzle schedule

- 03:23 How to view and access previous years’ events

- 03:37 Structure of puzzles: Two parts and stars

- 04:40 Understanding the global leaderboard

- 05:08 “Does that ASCII art build itself?”

- 06:16 Setting up private leaderboards for friends

- 07:54 Starting Day 1: Story prompt and mechanics

- 09:30 Understanding unique puzzle inputs

- 10:51 Submission feedback and delay penalties

- 11:44 Safe dial logic: Left, Right, and circularity

- 12:50 Starting position and Part 1 success criteria

- 14:09 Setting up the project in Positron

- 16:26 Strategy for speed: Reading from the bottom up

- 18:49 Problem-solving strategies: Pen, paper, and visualization

- 19:22 Walking through the logic with a sample case

- 20:52 Coding Part 1: Data parsing and vectorization

- 23:17 Positron keyboard shortcuts for duplicating lines

- 24:40 Debugging the logic and handling negative numbers

- 26:03 Explaining the Modulo operator (%%)

- 28:15 Managing large inputs of over 4,000 instructions

- 29:21 Submitting Part 1 and transitioning to Part 2

- 32:03 Part 2 challenge: Counting zero “clicks”

- 34:02 Brainstorming Part 2 code modifications

- 36:19 Checking important warnings for edge cases

- 37:00 Coding Part 2: Nested loops and incrementing counters

- 38:23 Hint: Modulo vs. integer division

- 40:40 Success with the Part 2 test case

- 42:30 Alternative method: Vectorized cumulative sums

- 45:29 “What’s the difference between % and %%?” (percent vs modulo)

- 46:50 Mathematical optimization to avoid inner loops

Simon Couch - Practical AI for data science

Practical AI for data science (Simon Couch)

Abstract: While most discourse about AI focuses on glamorous, ungrounded applications, data scientists spend most of their days tackling unglamorous problems in sensitive data. Integrated thoughtfully, LLMs are quite useful in practice for all sorts of everyday data science tasks, even when restricted to secure deployments that protect proprietary information. At Posit, our work on ellmer and related R packages has focused on enabling these practical uses. This talk will outline three practical AI use-cases (structured data extraction, tool calling, and coding) and offer guidance on getting started with LLMs when your data and code are confidential.

Presented at the 2025 R/Pharma Conference Europe/US Track.

Resources mentioned in the presentation:

- {vitals}: Large Language Model Evaluations https://vitals.tidyverse.org/

- {mcptools}: Model Context Protocol for R https://posit-dev.github.io/mcptools/

- {btw}: A complete toolkit for connecting R and LLMs https://posit-dev.github.io/btw/

- {gander}: High-performance, low-friction Large Language Model chat for data scientists https://simonpcouch.github.io/gander/

- {chores}: A collection of large language model assistants https://simonpcouch.github.io/chores/

- {predictive}: A frontend for predictive modeling with tidymodels https://github.com/simonpcouch/predictive

- {kapa}: RAG-based search via the kapa.ai API https://github.com/simonpcouch/kapa

- Databot https://positron.posit.co/dat

Air: A blazingly fast R code formatter - Davis Vaughan, Lionel Henry

In Python, Rust, Go, and many other languages, code formatters are widely loved. They run on every save, on every pull request, and in git pre-commit hooks to ensure code consistently looks its best at all times.

In this talk, you’ll learn about Air, a new R code formatter. Air is extremely fast, capable of formatting individual files so fast that you’ll question if it’s even running, and of formatting entire projects in under a second. Air integrates directly with your favorite IDEs, like Positron, RStudio, and VS Code, and is available on the command line, making it easy to standardize on one tool even for teams using various IDEs.

Once you start using Air, you’ll never worry about code style ever again!

https://www.tidyverse.org/blog/2025/02/air/ https://github.com/posit-dev/air

Supporting 100 Data Scientists with a Small Team | Mike Thomson | Data Science Hangout

We were recently joined by Mike Thomson, Data Science Manager at Flatiron Health, to chat about managing open source tools and maintaining R packages, creating reproducible reports for Word and Excel using Quarto, the “hub and spoke” support model for data scientists, and applying R and Posit tools in the Real World Evidence (RWE) oncology space.

In this Hangout, we explore creating reproducible outputs using Quarto for formats like Word and Excel. Flatiron Health uses Quarto because it allows the reproducible publication of analyses to multiple formats simultaneously (like HTML and a downloadable Word document) from the same source code. A specific challenge discussed was outputting formatted analytic tables to Excel, as this is not natively supported by Quarto. Erica Yim, from Mike’s team, detailed how they built an internal R function that uses the flexlsx package along with flextable to easily output pre-existing formatted tables from a Quarto document into an Excel template.

Resources mentioned in the video and zoom chat:

- flexlsx R package GitHub repository → https://github.com/pteridin/flexlsx

- dbplyr PR for Snowflake translations (contributed to by Flatiron Health) → https://github.com/tidyverse/dbplyr/pull/860

If you didn’t join live, one great discussion you missed from the zoom chat was about the pain points of exporting data from Quarto to Word or Excel, particularly concerning table formatting and styles. Attendees in the chat strongly highlighted the difficulty of managing table formatting, including issues with table cross-references, headers, and footers. They noted that dealing with styles often requires workarounds, such as creating flextables that match desired Word styles instead of relying on default table styles. Let us know below if you’d like to hear more about this topic!

Timestamps:

- 00:00 Introduction

- 02:23 “Can you talk about what Flatiron does and what your teams do?”

- 03:29 “Could you give us a few examples of the data types or collections that you might be working with?”

- 05:00 “Do you have longitudinal data?”

- 07:46 “Are you aware of any computer vision applications in the health care industry from your perspective?”

- 09:38 “Do you use mixed models or Bayesian MCMC?”

- 10:56 “How does your team use Quarto?”

- 16:59 “How do you convince stakeholders of the value of going open source (and handle security concerns)?”

- 22:56 “Do you allow people to have a certain amount of time to contribute back to open source?”

- 26:03 “I just want to understand a little bit about your support model for that group.”

- 29:57 “Do you have any tips for asynchronous working?”

- 31:02 “Are you like a Jira team or an Asana team for assigning tasks or tickets?”

- 32:10 “How many people on your platform team support Posit teams?”

- 34:24 “What does your team use for unstructured document analysis?”

- 36:24 “How important is domain knowledge in your recruitment?”

- 40:02 “Where do you store all of this stuff (data storage and databases)?”

- 42:04 “What is the approximate timeline from the time you do analysis to final deployment of results in the real world?”

- 44:31 “Is there a process for people getting things approved to use in your environment?”

- 47:39 “How do you handle the challenge of going back from Word to Quarto source code (after changes are tracked)?”

- 50:22 “What does a typical workday look like for you?”

- 51:47 “Is there a piece of career advice that has either really helped you, that you’ve really liked, that you try to give to other people?”

R & Python Interoperability in Data Science Teams | Dave Gruenewald | Data Science Hangout

We were recently joined by Dave Gruenewald, Senior Director of Data Science at Centene, to chat about polyglot teams, data science best practices, right-sizing development efforts, and process automation.

In this Hangout, we explore working in a polyglot team and fostering interoperability (a word that Libby loves, but struggles to pronounce out loud). Dave Gruenewald emphasizes that teams should use the tools they are comfortable with, whether that’s R or Python. Some strategies for collaboration across languages that Dave suggests include tools like Quarto to seamlessly run R and Python code in the same report. Teams utilize data science checkpoints, saving outputs as platform-agnostic file types like Parquet so that they can be accessed by any language. The use of REST APIs allows R processes to be accessed programmatically by Python (and vice versa), which can be a real game-changer. The newly released nanonext package was also highlighted as a promising development for improved interoperability.

Resources mentioned in the video and zoom chat:

- Posit Conf 2025 Table and Plotnine Contests → https://posit.co/blog/announcing-the-2025-table-and-plotnine-contests/

- nanonext 1.7.0 Tidyverse Blog Post → https://www.tidyverse.org/blog/2025/09/nanonext-1-7-0/

If you didn’t join live, one great discussion you missed from the zoom chat was about pivoting away from academia, including leaving PhD programs. Many attendees shared their personal experiences of making the difficult decision to drop out of a PhD program. The community suggested alternative terms like “pivot,” “reallocating your resources,” or being a “refugee fleeing academia” instead of “drop out.” Dave Gruenewald shared that he himself left a PhD program but has “no regrets about that.” Did you leave a PhD program? You’re not alone!

Timestamps:

- 00:00 Introduction

- 02:21 “What types of data do your teams use?”

- 06:53 “Which of the three pillars you mentioned is your personal favorite to work on?”

- 09:26 “How do you avoid or divert scope creep?”

- 11:41 “How much of the project should be “planning” before any code happens?”

- 13:53 “Do you feel like people are just hopping in and going, hey, LLM, make me a POC?”

- 14:28 “Do you give them what they say they want, or do you give them what they need?”

- 16:40 “I’m wondering what public data do you wish existed?”

- 18:48 “Why not Positron yet?”

- 20:43 “How do you unify as a team and make it so that I can always read everybody else’s code?”

- 23:10 “Could you talk a little bit about how R and Python work together?”

- 27:28 “How to start package development with a team who are very new to package development.”

- 33:01 “What’s your greatest regret career wise?”

- 35:53 “What about your biggest wins, specifically in your early career?”

- 39:40 “How would you recommend building a data science culture and community from scratch?”

- 41:49 “Would you set a specific timeline for EDA, exploratory analysis, to scope the project better?”

- 45:15 “How do you define fun projects, and how much time do you allocate for exploration in those?”

- 48:21 “Does your team use DVC or something similar for data version control?”

- 50:00 “Can you talk a bit more about your pivot from academia into data science?”

- 51:31 “Any advice on where to look for opportunities in data science after getting a masters degree?”

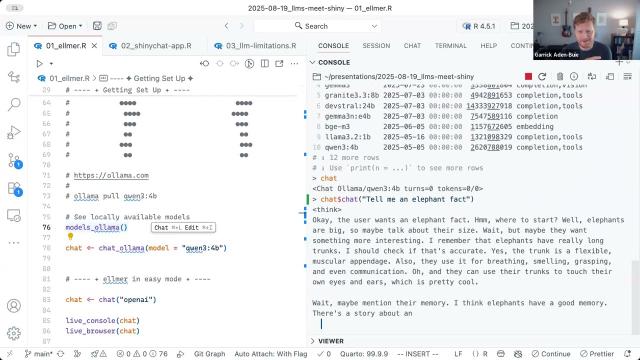

Building the Future of Data Apps: LLMs Meet Shiny

GenAI in Pharma 2025 kicks off with Posit’s Phil Bowsher and Garrick Aden-Buie sharing a technical overview of how LLMs can integrate with Shiny applications and much more!

Abstract: When we think of LLMs (large language models), usually what comes to mind are general purpose chatbots like ChatGPT or code assistants like GitHub Copilot. But as useful as ChatGPT and Copilot are, LLMs have so much more to offer—if you know how to code. In this demo Garrick will explain LLM APIs from zero, and have you building and deploying custom LLM-empowered data workflows and apps in no time.

Resources mentioned in the session:

- GitHub Repository for session: https://github.com/gadenbuie/genAI-2025-llms-meet-shiny

- {mcptools} - Model Context Protocol servers and clients https://posit-dev.github.io/mcptools/

- {vitals} - Large language model evaluation for R https://vitals.tidyverse.org/

Purrrfectly parallel, purrrfectly distributed (Charlie Gao, Posit) | posit::conf(2025)

Purrrfectly parallel, purrrfectly distributed

Speaker(s): Charlie Gao

Abstract:

purrr is a powerful functional programming toolkit that has long been a cornerstone of the tidyverse. In 2025, it receives a modernization that means you can use it to harness the power of all computing cores on your machine, dramatically speeding up map operations.

More excitingly, it opens up the doors to distributed computing. Through the mirai framework used by purrr, this is made embarrassingly simple. For those in small businesses, or even large ones – every case where there is a spare server in your network, you can now put to good use in simple, straightforward steps.

Let us show you how distributed computing is no longer the preserve of those with access to high performance compute clusters.
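As a rough illustration of the parallel map described in the abstract, here is a minimal sketch. It assumes purrr 1.1.0 or later with the mirai package installed; the two local daemons and the `scale_by` helper are illustrative choices, not taken from the talk.

```r
library(purrr)

# Start two local background R processes (daemons); the count is an
# arbitrary choice for this sketch
mirai::daemons(2)

# in_parallel() marks the function to be run on the daemons. The function
# must be self-contained, so any object it needs is passed explicitly by name.
scale_by <- 10
results <- map(1:4, in_parallel(\(x) x * scale_by, scale_by = scale_by))

# Shut the daemons down when finished
mirai::daemons(0)
```

For the distributed case the abstract mentions, the daemons would run on other machines rather than locally; the mirai documentation covers that setup, which this sketch does not attempt.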

Materials - https://shikokuchuo-posit2025.share.connect.posit.cloud/ posit::conf(2025) Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

Translating R for Data Science into Portuguese: A Community-Led Initiative (Beatriz Milz, UFABC)

Translating R for Data Science into Portuguese: A Community-Led Initiative

Speaker(s): Beatriz Milz

Abstract:

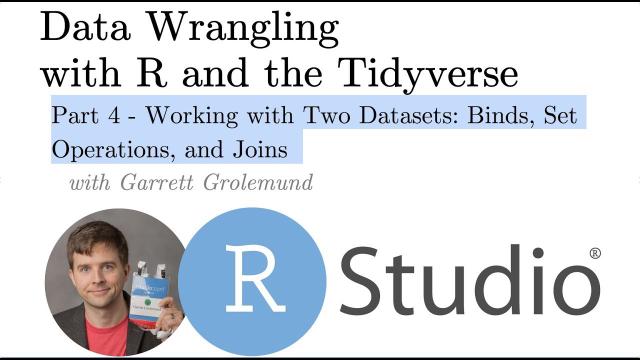

How can open-source collaboration help make data science more accessible and expand Posit’s global impact? The book “R for Data Science” by Hadley Wickham, Mine Çetinkaya-Rundel, and Garrett Grolemund is a key resource for learning R and the tidyverse. In a collaborative effort, volunteers from the R community translated the second edition into Brazilian Portuguese, making it freely available online. This talk explores the translation journey, the challenges of adapting technical content, and key lessons learned to support future translation teams.

Materials - https://beamilz.com/talks/en/2025-posit-conf/ posit::conf(2025) Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

Using Quarto to Improve Formatting/Automate the Generation of Hundreds of Reports (Keaton Wilson)

Using Quarto to Improve Formatting and Automate the Generation of Hundreds of Reports

Speaker(s): Keaton Wilson

Abstract:

This presentation showcases how KS&R’s Decision Sciences and Innovation (DSI) team modernized a legacy reporting pipeline to automate and scale custom survey report generation. Using tidyverse and Quarto, the team produced hundreds of personalized PDFs weekly over three months. Hosted on GitHub, the project integrated version control and streamlined collaboration while documentation ensured easy onboarding and adaptability. Attendees will gain insights into automating report workflows, overcoming implementation challenges, integrating custom formatting and fostering collaboration using tidyverse, Quarto, and GitHub.

Materials - https://github.com/ksrinc/posit_conf_2025_quarto_automation posit::conf(2025) Subscribe to posit::conf updates: https://posit.co/about/subscription-management/

New data science tools & old laptops on fire | Jenny Bryan | Data Science Hangout

ADD THE DATA SCIENCE HANGOUT TO YOUR CALENDAR HERE: https://pos.it/dsh - All are welcome! We’d love to see you!

We were joined by Jenny Bryan, Senior Software Engineer at Posit, to chat about setting laptops on fire, adapting careers to embrace change and new technologies, the behind-the-scenes technical advancements powering the R ecosystem with tools like Positron, demystifying project-based workflows, and LLM integration and best practices in programming.

Listen to this episode to hear us chat about topics like this:

- the benefits and limitations of using Large Language Models (LLMs) in programming. Jenny shared her initial skepticism towards LLMs for coding in R, but her attitude changed significantly when applying LLMs to problems involving languages she was less familiar with, like Rust or TypeScript.

- adapting in your career to embrace change and new technologies. Jenny, who describes herself as being on a “third career”, transitioned from management consulting to being a statistics professor, and then to a senior software engineer at Posit. She talks a bit about her career journey and how she’s embracing new stuff (ahem, TypeScript) so that she gets to keep doing cool stuff!

- Positron IDE for R package development. She specifically praises Positron’s unique test explorer, its reliable console, and its integrated Data Explorer. For many, Positron offers out-of-the-box data science functionality, unlike other IDEs that require extensive customization.

- what new technologies like Ark, Air, and Positron mean for the long-term health of R. Jenny’s been working on lots of nerdy things behind the scenes at Posit and she talks all about how they’re great for developers, package builders, data scientists, and engineers alike.

Another tidbit from this hangout: Jenny gave some advice for those looking to branch into software engineering without formal training: try reading code from admired developers, inviting code reviews, and undertaking small, recreational package development projects to gain practical experience and confidence. She also advocates for adopting a project-oriented workflow (associated with her famous “laptop on fire” remark, of course) using tools like the here package for managing project paths.
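A small sketch of the path handling that the here package provides in a project-oriented workflow. The directory and file names are hypothetical, and the file does not need to exist for the path to be built.

```r
library(here)

# here() builds a path starting from the project root, which it locates via
# markers such as an .Rproj file or a .git directory; if it finds none, it
# falls back to the working directory at load time
data_path <- here("data", "raw", "survey.csv")  # hypothetical file

# The path is absolute, so it does not depend on setwd() calls in a script
print(data_path)
```

Because the path is anchored at the project root, the same line works from a script in any subfolder of the project and on a colleague's machine.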

Resources mentioned in the video and Zoom chat: Positron IDE → https://positron.posit.co/ Happy Git with R → https://happygitwithr.com/ Jenny Bryan’s “Project-oriented workflow” blog post → https://www.tidyverse.org/blog/2017/12/workflow-vs-script/ Air R code formatter → https://posit-dev.github.io/air/ The here package → https://here.r-lib.org/ Posit Conf → https://posit.co/conference/ Tidy Dev Day 2025 → https://www.tidyverse.org/blog/2025/07/tdd-2025/ R Packages book → https://r-pkgs.org/

If you didn’t join live, you missed a ROARINGLY active chat. Let’s just say, if you’ve ever broken down in tears over a programming project, you’re not alone! Come join us live each week if you’d like to hang out in the chat with us!

Timestamps:

- 00:00 Introduction

- 03:39 “Is that a Wooble on your desk?” (Spoiler, it’s a gnome!!)

- 06:23 “As a builder of data science tools, what are the tool features data scientists want most?”

- 08:43 “Have you experienced needing to adapt to change recently and how have you embraced it?”

- 13:46 “What is ‘setting laptops on fire’ about?”

- 13:50 “How did you decide to change your career a few times?”

- 21:23 “What are your thoughts on the ease of putting models into production in Python versus R and does it make sense to shift everybody to one language or the other?”

- 27:30 “How do you navigate the ‘I have a hammer so everything looks like a nail’ feeling when working with emerging tools like LLMs?”

- 33:24 “Do you have any general advice for those data scientists who find themselves wanting to branch out more into software engineering but don’t have formal training?”

- 39:39 “Why should I use Positron instead of VS Code?”

- 47:57 “Can you speak to the value of developing an R package and how to clear the mental hurdle of it being a huge challenge?”

- 52:34 “What does your career trajectory look like and what is your advice for other people who are looking to grow their career but don’t know if they want to be an IC or a manager? Does being a manager mean you don’t get to write code anymore?”

Harnessing LLMs for Data Analysis | Led by Joe Cheng, CTO at Posit

When we think of LLMs (large language models), usually what comes to mind are general purpose chatbots like ChatGPT or code assistants like GitHub Copilot. But as useful as ChatGPT and Copilot are, LLMs have so much more to offer—if you know how to code. In this demo Joe Cheng will explain LLM APIs from zero, and have you building and deploying custom LLM-empowered data workflows and apps in no time.

Posit PBC hosts these Workflow Demos the last Wednesday of every month. To join us for future events, you can register here: https://posit.co/events/

Slides: https://jcheng5.github.io/workflow-demo/ GitHub repo: https://github.com/jcheng5/workflow-demo

Resources shared during the demo: Ellmer https://ellmer.tidyverse.org/ Chatlas https://posit-dev.github.io/chatlas/

Environment variable management: For R: https://docs.posit.co/ide/user/ide/guide/environments/r/managing-r.html#renviron For Python https://pypi.org/project/python-dotenv/

Shiny chatbot UI: For R, Shinychat https://posit-dev.github.io/shinychat/ For Python, ui.Chat https://shiny.posit.co/py/docs/genai-inspiration.html

Deployment Cloud hosting https://connect.posit.cloud On-premises (Enterprise) https://posit.co/products/enterprise/connect/ On-premises (Open source) https://posit.co/products/open-source/shiny-server/

Querychat Demo: https://jcheng.shinyapps.io/sidebot/ Package: https://github.com/posit-dev/querychat/

If you have specific follow-up questions about our professional products, you can schedule time to chat with our team: pos.it/llm-demo

Bringing data science to the construction industry | Blake Abbenante | Data Science Hangout

To join future data science hangouts, add it to your calendar here: https://pos.it/dsh - All are welcome! We’d love to see you!

We were recently joined by Blake Abbenante, Director of Analytics and Data Science at Suffolk Construction, to chat about his career journey in data science, implementing modern data practices in the construction industry, innovative applications of AI and data science in construction, and building a data-driven culture in a traditionally less tech-focused sector.

In this Hangout, we explore innovative applications of AI and data science in construction. Blake shared how Suffolk Construction is leveraging cutting-edge technologies like AI to revolutionize traditional processes. One focus is their GenAI scheduling tool, which aims to augment and speed up the design and planning phases of building projects. This tool has the potential to significantly reduce the time planners spend on creating schedules, moving from weeks to potentially minutes or hours for an 80% completion rate. Blake discussed the development and implementation of safety models that forecast risk on projects, enabling proactive measures to ensure safer construction sites by predicting which projects might require additional safety personnel based on historical data.

Resources mentioned in the video and Zoom chat: The ellmer R package → https://ellmer.tidyverse.org/ The chatlas Python package → https://github.com/posit-dev/chatlas Posit Blog Post on ellmer → https://posit.co/blog/announcing-ellmer/

If you didn’t join live, one great discussion you missed from the Zoom chat was about the challenges of data collection and analysis when encountering pushback from those whose work is being analyzed, and strategies to build trust and demonstrate value. Let us know below if you’d like to hear more about this topic!

Shiny community, hackathons, and his AI mindset | Joe Cheng | Data Science Hangout

To join future data science hangouts, add it to your calendar here: https://pos.it/dsh - All are welcome! We’d love to see you!

We were recently joined by Joe Cheng, CTO at Posit, to chat about the Shiny contest, the use of AI in data science, and designing hackathons for learning new technologies. We were joined by several past and present Shiny contest winners who gave great advice on how to get started if you want to participate (and we really hope you do)!

In this Hangout, we explore the evolution of the Shiny contest since its inception, including what made the 2024 submissions unique and the ways the contest encourages community contribution and learning. Joe also shared his personal journey from skepticism about AI to seeing and embracing its potential. We got some amazing questions from the Hangout attendees! We hope you join us live next time to ask some of your own questions.

Resources mentioned in the video and Zoom chat:

2024 Shiny Contest Winners → https://posit.co/blog/winners-of-the-2024-shiny-contest/

Joe’s AI Hackathon Slides → https://jcheng5.github.io/llm-quickstart/quickstart.html

Shiny Assistant → https://gallery.shinyapps.io/assistant/

Isabella’s blog post on prototyping with Shiny Assistant → https://posit.co/blog/ai-powered-shiny-app-prototyping/

Posit Conf Workshops → https://reg.rainfocus.com/flow/posit/positconf25/attendee-portal/page/sessioncatalog?tab.day=20250916&search.sessiontype=1675316728702001wr6r

Shiny Conference 2025 → https://www.shinyconf.com/

Call for Speakers Shiny Conf 2025 → https://sessionize.com/shiny-conf-2025/

Shiny Tableau → https://rstudio.github.io/shinytableau/

Echarts4r → https://echarts4r.john-coene.com

ellmer package on GitHub → https://github.com/tidyverse/ellmer

All the Shiny app links mentioned in the video and Zoom chat: Eric Nantz 2021 Shiny Contest Submission → https://forum.posit.co/t/the-hotshots-racing-dashboard-shiny-contest-submission/104925 Eric Nantz’s R/Pharma conference keynote on AI → https://youtu.be/AfMa1CVUdXU?si=ThLsKFyonntxzBUF Eric Nantz’s Haunted Places app → https://youtu.be/vX09QGMuOfo?si=K5_uPfK5bcfZZ92l Umair Durrani’s Shiny Storytelling app → https://umair.shinyapps.io/storytimegcp/ Umair’s Bluesky profile → https://bsky.app/profile/transport-talk.bsky.social Umair’s Shiny meetings project on GitHub → https://github.com/shiny-meetings/shiny-meetings Abby Stamm’s Shiny Accessibility app → https://github.com/ajstamm/shiny-a11y-app

If you didn’t join live, one great discussion you missed from the Zoom chat was about everyone’s favorite interactive plotting tools. Someone asked whether Plotly was the best option, and lots of people said they loved ggiraph, echarts4r, ObservableJS, and others. What about you?! What’s your favorite interactive plotting library?

Wes McKinney & Hadley Wickham (on cross-language collaboration, Positron, career beginnings, & more)

Posit PBC hosted a special event with Wes McKinney (pandas & Apache Arrow) and Hadley Wickham (rstats & tidyverse), where members of the data community could ask questions, share their thoughts, and exchange insights about cross-language collaboration.

Here’s a preview into what came up in conversation:

- Cross-language collaboration between R and Python

- Positron, a new polyglot data science IDE

- Open source development: how Wes and Hadley got involved in open source, and their experiences building and maintaining open-source projects such as pandas and the tidyverse.

- Documentation for R and Python, especially in the context of teams that use both languages (shoutout to Quarto!)

- The use of LLMs in data science

- The emergence of libraries like Polars and DuckDB

- Challenges of switching between the two languages

- Package development and maintenance for polyglot teams that have internal packages in both languages

- The future of data science

The chat was on fire for this conversation and we’ve gathered most of the links shared among the community below:

Documentation mentioned: Positron, next-generation data science IDE built by Posit: https://positron.posit.co/ Quarto tabset documentation: https://quarto.org/docs/output-formats/html-basics.html#tabset-groups

Packages / Extensions mentioned: Pins: https://pins.rstudio.com/ Vetiver: https://vetiver.posit.co Orbital: https://orbital.tidymodels.org Elmer: https://elmer.tidyverse.org Tabby Extension: https://quarto.thecoatlessprofessor.com/tabby/

Blog posts: AI chat apps with Shiny for Python: https://shiny.posit.co/blog/posts/shiny-python-chatstream/ Using an LLM to enhance a data dashboard written in Shiny: R Sidebot & Python Sidebot Marco Gorelli Data Science Hangout (polars): https://youtu.be/lhAc51QtTHk?feature=shared Emily Riederer’s blog post on Polars: https://www.emilyriederer.com/post/py-rgo-polars/ Jeffrey Sumner’s tabset example: https://rpy.ai/posts/visualizations%20with%20r%20and%20python/r_python_visualizations Emily Riederer’s blog post on Python and R ergonomics: https://www.emilyriederer.com/post/py-rgo/11 Sam Tyner’s blog post on Lessons from “Tidy Data”: https://medium.com/@sctyner90/10-lessons-from-tidy-data-on-its-10th-anniversary-dbe2195a82b7

Other: Hadley Wickham’s cocktails website: https://cocktails.hadley.nz Posit subscription management to find out about new tools, events, etc.: https://posit.co/about/subscription-management/

New to Posit? Posit builds enterprise solutions and open source tools for people who do data science with R and Python. (We are also the company formerly called RStudio.) We’d love to have you join us for future community events!

Every Thursday from 12-1pm ET we host a Data Science Hangout with the community and invite you to join us! You can add that event to your calendar with this link: https://www.addevent.com/event/Qv9211919

Joe Cheng - Summer is Coming: AI for R, Shiny, and Pharma

Summer is Coming: AI for R, Shiny, and Pharma - Joe Cheng

Abstract: R users tend to be skeptical of modern AI models, given our weird insistence on answers being accurate, or at least supported by the data. But I believe the time has come—or maybe it’s a little late—for even the most AI-cynical among us to push past their discomfort and get informed about what these tools are truly capable of. And key to that is moving beyond using AI-enabled apps, and towards building our own scripts, packages, and apps that make judicious use of AI.

In this talk, I’ll tell you why I believe AI has more to offer the R community than just wrong answers from chat windows or mediocre code suggestions in our IDEs. I’ll also introduce brand-new tools we’re developing at Posit that put powerful AI tools within reach of every R user. And finally, I’ll show how adding some AI could make your next Shiny app dramatically more useful for your users.

Resources mentioned in the talk:

- Slides: https://jcheng5.github.io/pharma-ai-2024

- {elmer} Call LLM APIs from R: https://elmer.tidyverse.org/

- {shinychat} Chat UI component for Shiny for R https://github.com/jcheng5/shinychat

- R/Pharma GenAI Day Recordings: https://www.youtube.com/playlist?list=PLMtxz1fUYA5AYryl4t2mtqBngqWDrnMXJ

Presented at the 2024 R/Pharma Conference

817: The Positron IDE, Tidy NLP and MLOps — with Dr. @JuliaSilge

#PositronIDE #Tidyverse #MLOps

Dr. Julia Silge, Engineering Manager at Posit, joins @JonKrohnLearns to introduce the brand-new Positron IDE, perfect for exploratory data analysis and visualization. She also lays out her top picks for LLMs that boost coding efficiency and discusses when traditional NLP methods might be the smarter choice over LLMs. Plus, Julia highlights some must-know open-source libraries that make managing MLOps easier than ever. Tune in for insights that every data scientist, ML engineer, and developer will find useful.

This episode is brought to you by Gurobi (https://www.gurobi.com/personas/optimization-for-data-scientists/) , the Decision Intelligence Leader, and by ODSC (https://odsc.com/california) , the Open Data Science Conference. Interested in sponsoring a SuperDataScience Podcast episode? Email natalie@superdatascience.com for sponsorship information.

In this episode you will learn: • [00:00:00] Introduction • [00:03:23] Overview of Posit and Positron IDE • [00:08:33] How the needs of a data scientist differ from those of a software developer • [00:17:56] How to contribute to the open-source Positron • [00:34:52] MLOps and Vetiver: Tools for deploying and maintaining ML models • [00:48:34] Natural Language Processing (NLP) and the Tidyverse approach • [01:22:18] The role of AI and LLMs in data science education

Additional materials: https://www.superdatascience.com/817

Ask Hadley Anything

A unique opportunity to gain insights directly from a leading expert in open source data science and a driving force behind many popular R packages like ggplot2 and dplyr.

Links from the Q&A: gh-action webscraping demo: https://github.com/hadley/cran-deadlines tidyverse devday 2024: https://www.tidyverse.org/blog/2024/04/tdd-2024/

For the 3 questions on moving from SAS to R in Pharma: Posit and Atorus have partnered on a Posit Academy training: https://posit.co/blog/upskill-to-r-programming-with-posit-and-atorus-research/ And at least 3 pharma companies have shared resources to help people on the transition from statistical programming in SAS, to data science in R: Pfizer exercises: https://github.com/pfizer-opensource/pharma-hands-on-exercises Bayer SAS to R: https://bayer-group.github.io/sas2r/ Roche Coursera course: https://www.coursera.org/learn/making-data-science-work-for-clinical-reporting

Tom Mock @ Posit PBC | Data Science Hangout

We were recently joined by Tom Mock, Product Manager at Posit PBC, to chat about career growth, starting out in a sales role, TidyTuesday, and being so good they can’t ignore you.

Speaker Bio: Tom Mock is a Product Manager at Posit, overseeing the Posit Workbench and RStudio team. He fell in love with R and data science through his graduate research, using R and RStudio to wrangle, analyze, model, and visualize his data. He became passionate about growing the R community, and founded #TidyTuesday to help newcomers and seasoned vets improve their Tidyverse skills.

Links mentioned: TidyTuesday: https://github.com/rfordatascience/tidytuesday Table Contest: https://posit.co/blog/announcing-the-2024-table-contest/ Posit Conference: https://posit.co/conference/ Monthly Workflow Demos: https://www.addevent.com/event/Eg16505674 gt package: https://gt.rstudio.com/ So Good They Can’t Ignore You book recommendation: https://www.goodreads.com/book/show/13525945-so-good-they-can-t-ignore-you Community Builder Quarto Site: https://pos.it/community-builder

► Subscribe to Our Channel Here: https://bit.ly/2TzgcOu

Follow Us Here: Website: https://www.posit.co LinkedIn: https://www.linkedin.com/company/posit-software

To join future data science hangouts, add to your calendar here: https://pos.it/dsh

We’d love to have you join us in the conversation live!

Thanks for hanging out with us!

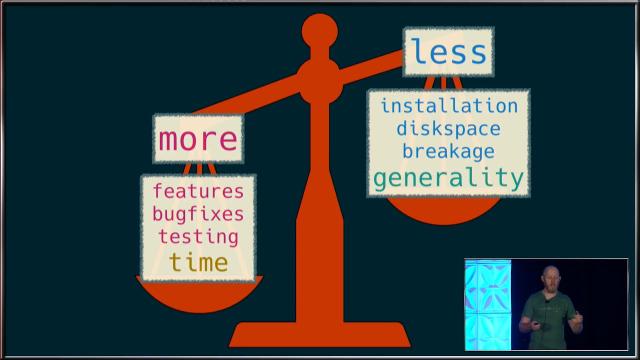

Hadley Wickham - R in Production

R in Production by Hadley Wickham

Visit https://rstats.ai for information on upcoming conferences.

Abstract: In this talk, we delve into the strategic deployment of R in production environments, guided by three core principles to elevate your work from individual exploration to scalable, collaborative data science. The essence of putting R into production lies not just in executing code but in crafting solutions that are robust, repeatable, and collaborative, guided by three key principles:

- Not just once: Successful data science projects are not a one-off, but will be run repeatedly for months or years. I’ll discuss some of the challenges of creating R scripts and applications that run repeatedly, handle new data seamlessly, and adapt to evolving analytical requirements without constant manual intervention. This principle ensures your analyses are enduring assets, not throwaway toys.

- Not just my computer: The transition from development on your laptop (usually Windows or Mac) to a production environment (usually Linux) introduces a number of challenges. Here, I’ll discuss some strategies for making R code portable, how you can minimise pain when something inevitably goes wrong, and a few unresolved auth challenges that we’re currently working on.

- Not just me: R is not just a tool for individual analysts but a platform for collaboration. I’ll cover some of the best practices for writing readable, understandable code, and how you might go about sharing that code with your colleagues. This principle underscores the importance of building R projects that are accessible, editable, and usable by others, fostering a culture of collaboration and knowledge sharing.

By adhering to these principles, we pave the way for R to be a powerful tool not just for individual analyses but as a cornerstone of enterprise-level data science solutions. Join me to explore how to harness the full potential of R in production, creating workflows that are robust, portable, and collaborative.

Bio: Hadley is Chief Scientist at Posit PBC, winner of the 2019 COPSS award, and a member of the R Foundation. He builds tools (both computational and cognitive) to make data science easier, faster, and more fun. His work includes packages for data science (like the tidyverse, which includes ggplot2, dplyr, and tidyr) and principled software development (e.g. roxygen2, testthat, and pkgdown). He is also a writer, educator, and speaker promoting the use of R for data science. Learn more on his website, http://hadley.nz.

Mastodon: https://fosstodon.org/@hadleywickham

Presented at the 2024 New York R Conference (May 17, 2024) Hosted by Lander Analytics (https://landeranalytics.com )

Wes McKinney - The Future Roadmap for the Composable Data Stack

The Future Roadmap for the Composable Data Stack by Wes McKinney

Visit https://rstats.ai for information on upcoming conferences.

Abstract: In this talk, I plan to review the progress we have made in the last 10 years developing composable, interoperable open standards for the data processing stack, from such infrastructure projects as Parquet and Arrow to user-facing interface libraries like Ibis for Python and the tidyverse for R. In discussing the current landscape of projects, I will dig into the different areas where more innovation and growth is needed, and where we would ideally like to end up in the coming years.

Bio: Wes McKinney is an open source software developer and entrepreneur focusing on data processing tools and systems. He created the Python pandas and Ibis projects, and co-created Apache Arrow. He is a Member of the Apache Software Foundation and also a project PMC member for Apache Parquet. He is currently a Principal Architect at Posit PBC and a co-founder of Voltron Data.

Twitter: https://twitter.com/wesmckinn

Presented at the 2024 New York R Conference (May 17, 2024) Hosted by Lander Analytics (https://landeranalytics.com )

Hadley Wickham on R vs Python

Learn about tidyverse, ggplot2, and the secret to a tech company’s longevity as Hadley Wickham joins @JonKrohnLearns in this episode. He talks about Posit’s rebrand, why tidyverse needs to be in every data scientist’s toolkit, and why getting your hands dirty with open-source projects can be so lucrative for your career.

Watch the full interview “779: The Tidyverse of Essential R Libraries and their Python Analogues — with Dr. Hadley Wickham” here: https://www.superdatascience.com/779

779: The Tidyverse of Essential R Libraries and their Python Analogues — with Dr. Hadley Wickham

#Tidyverse #RProgramming #RLibraries

Tidyverse, ggplot2, and the secret to a tech company’s longevity: Hadley Wickham talks to @JonKrohnLearns about Posit’s rebrand, Tidyverse and why it needs to be in every data scientist’s toolkit, and why getting your hands dirty with open-source projects can be so lucrative for your career.

This episode is brought to you by Intel and HPE Ezmeral Software (https://bit.ly/hpeintel) . Interested in sponsoring a SuperDataScience Podcast episode? Visit https://passionfroot.me/superdatascience for sponsorship information.

In this episode you will learn: • [00:00:00] Introduction • [00:02:55] All about the Tidyverse • [00:15:19] Hadley’s favorite R libraries • [00:28:39] The goal of Posit • [00:34:12] On bringing multiple programming languages together • [00:50:19] The principles for a long-lasting tech company • [00:53:34] How Hadley developed ggplot2 • [01:03:52] How to contribute to the open-source community

Additional materials: https://www.superdatascience.com/779

The Future Roadmap for the Composable Data Stack

Discover cutting-edge advancements in data processing stacks. Listen in as Wes McKinney dives into pivotal projects like Parquet and Arrow, alongside essential interface libraries like Ibis and tidyverse. Wes navigates through the current state of these projects, highlighting areas for further innovation and growth.

Sign up for our “No BS” Newsletter to get the latest technical data & AI content: https://hubs.li/Q02vz6xC0

ABOUT THE SPEAKER: Wes McKinney, Principal Architect, Posit PBC (co-founder of Voltron Data)

ABOUT DATA COUNCIL: Data Council brings together the brightest minds in data to share industry knowledge, technical architectures and best practices in building cutting edge data & AI systems and tools.

FIND US: Twitter: https://twitter.com/datacouncilai LinkedIn: https://www.linkedin.com/company/datacouncil-ai/ Website: https://www.datacouncil.ai/

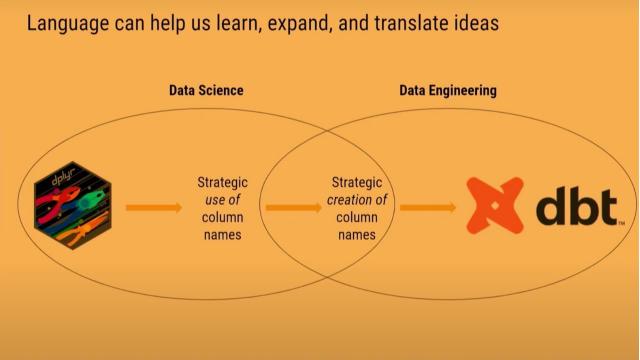

dbtplyr: Bringing Column-Name Contracts from R to dbt - posit::conf(2023)

Presented by Emily Riederer

starts_with(language): Translating select helpers to dbt. Translating syntax between languages transports concepts across communities. We see a case study of adapting a column-naming workflow from dplyr to dbt’s data engineering toolkit.

dplyr’s select helpers exemplify how the tidyverse uses opinionated design to push users into the pit of success. The ability to efficiently operate on names incentivizes good naming patterns and creates efficiency in data wrangling and validation.

However, in a polyglot world, users may find they must leave the pit when comparable syntactic sugar is not accessible in other languages like Python and SQL.

In this talk, I will explain how dplyr’s select helpers inspired my approach to ‘column name contracts,’ how good naming systems can help supercharge data management with packages like {dplyr} and {pointblank}, and my experience building the {dbtplyr} package to port this functionality to dbt for building complex SQL-based data pipelines.
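To make the idea of operating on column names concrete, here is a minimal dplyr sketch. The table and its prefixes (`n_` for counts, `is_` for flags) are hypothetical stand-ins for a naming contract and are not taken from the talk.

```r
library(dplyr)

# Hypothetical table whose column prefixes encode what each column holds
orders <- tibble(
  id_order  = 1:3,
  n_items   = c(2L, 5L, 1L),
  n_returns = c(0L, 1L, 0L),
  is_gift   = c(TRUE, FALSE, TRUE)
)

# Select every count column by its prefix instead of listing names
counts <- orders |> select(starts_with("n_"))

# Apply one validation rule to all count columns: no negative values
checks <- orders |>
  summarise(across(starts_with("n_"), \(x) all(x >= 0)))
```

Because the rule is tied to the prefix, a new `n_` column added later would be selected and validated without any change to this code.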

Materials:

Presented at Posit Conference, between Sept 19-20 2023, Learn more at posit.co/conference.

Talk Track: Databases for data science with duckdb and dbt. Session Code: TALK-1098

duckplyr: Tight Integration of duckdb with R and the tidyverse - posit::conf(2023)

Presented by Kirill Müller

The duckplyr R package combines the convenience of dplyr with the performance of DuckDB. Better than dbplyr: Data frame in, data frame out, fully compatible with dplyr.

duckdb is the new high-performance analytical database system that works great with R, Python, and other host systems. dplyr is the grammar of data manipulation in the tidyverse, tightly integrated with R, but it works best for small or medium-sized data. duckdb has been designed with large data in mind, but currently you need to formulate your queries in SQL.

The new duckplyr package offers the best of both worlds. It transforms a dplyr pipe into a query object that duckdb can execute, using an optimized query plan. It is better than dbplyr because the interface is “data frames in, data frames out”, and no intermediate SQL code is generated.

The talk presents our results, a bit of the mechanics, and an outlook for this ambitious project.

Materials: https://github.com/duckdblabs/duckplyr/

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Databases for data science with duckdb and dbt. Session Code: TALK-1100

How to Keep Your Data Science Meetup Sustainable - posit::conf(2023)

Presented by Ted Laderas

Many data science meetup organizers struggle with burnout. It can be daunting to plan a meetup schedule, especially with the added burden of work and life.

In this talk, I want to highlight some strategies for keeping your data science meetup sustainable. Specifically, I want to highlight the role of self-care in growing and sustaining your group, as well as low-key activities like a data scavenger hunt, watching videos together, styling plots together, and sharing useful tidyverse functions.

By making it easy for your members to contribute and empowering them, it takes a lot of the burden off you as an organizer. You don’t need to reinvent the wheel for meetups or have famous guests for each one. Let’s start the conversation and make your meetup last.

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: It takes a village: building and sustaining communities. Session Code: TALK-1129

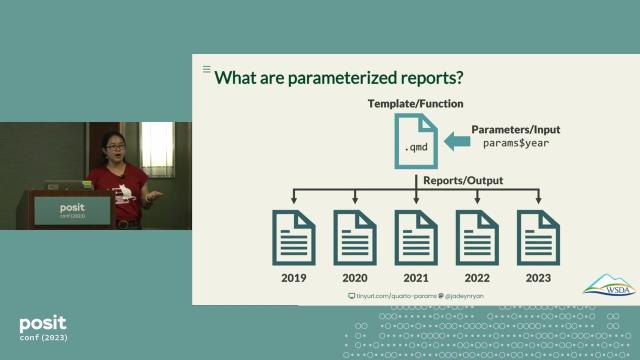

Parameterized Quarto Reports Improve Understanding of Soil Health - posit::conf(2023)

Presented by Jadey Ryan

Learn how to use R and Quarto parameterized reporting in this four-step workflow to automate custom HTML and Word reports that are thoughtfully designed for audience interpretation and accessibility.

Soil health data are notoriously challenging to tidy and effectively communicate to farmers. We used functional programming with the tidyverse to reproducibly streamline data cleaning and summarization. To improve project outreach, we developed a Quarto project to dynamically create interactive HTML reports and printable PDFs. Custom to every farmer, reports include project goals, measured parameter descriptions, summary statistics, maps, tables, and graphs.

Our case study presents a workflow for data preparation and parameterized reporting, with best practices for effective data visualization, interpretation, and accessibility.

Talk materials: https://jadeyryan.com/talks/2023-09-25_posit_parameterized-quarto/

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Elevating your reports. Session Code: TALK-1160

The ‘I’ in Team: Peer-to-Peer Best Practices for Growing Data Science Teams - posit::conf(2023)

Presented by Liz Roten

R users don’t always come in sets. Often, you may be the only user in the cubicle block. But, one miraculous day, your manager finally fills the void and you welcome more folks on your team. Suddenly, the little R system you created to suit your needs, like a custom package, code styling, and file organization, isn’t just for you.

Want to suddenly overhaul that one package you wrote two years ago? It probably won’t work when your colleagues try to update it.

Your new teammates are data.table fans, but you prefer the tidyverse. Do you need to refactor? Are style choices, like indentation, important when collaborating, or are you just being persnickety?

In this talk, you will learn how to bring new teammates on board and blend your respective styles without pulling your hair out.

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Building effective data science teams. Session Code: TALK-1063

Visualizing Data Analysis Pipelines with Pandas Tutor and Tidy Data Tutor - posit::conf(2023)

Presented by Sean Kross

The data frame is a fundamental data structure for data scientists using Python and R. Pandas and the tidyverse are designed around building pipelines for the transformation of data frames. However, within these pipelines it is not always clear how each operation is changing the underlying data frame. To explain each step in a pipeline, data science instructors resort to hand-drawing diagrams to illustrate the semantics of operations such as filtering, sorting, and grouping.

In this talk, I will introduce Pandas Tutor and Tidy Data Tutor, step-by-step visual representation engines of data frame transformations. Both tools illustrate the row, column, and cell-wise relationships between an operation’s input and output data frames.
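To make the idea of row-wise relationships between input and output concrete, here is a minimal plain-Python sketch that records, for a filter step and a sort step, which input row each output row came from. It is not how Pandas Tutor or Tidy Data Tutor are implemented; the sample data and the `filter_rows` and `sort_rows` helpers are hypothetical and cover only the row-level case, not columns or cells.

```python
# Hypothetical input data frame, represented as a list of row dicts.
frame = [
    {"name": "a", "score": 3},
    {"name": "b", "score": 9},
    {"name": "c", "score": 5},
]


def filter_rows(rows, predicate):
    """Keep rows where `predicate` is true; also return each kept row's source index."""
    kept = [(i, row) for i, row in enumerate(rows) if predicate(row)]
    return [row for _, row in kept], [i for i, _ in kept]


def sort_rows(rows, key):
    """Sort rows by `key`; also return the source index of each output row."""
    order = sorted(range(len(rows)), key=lambda i: rows[i][key])
    return [rows[i] for i in order], order


filtered, filter_map = filter_rows(frame, lambda r: r["score"] > 4)
ordered, sort_map = sort_rows(filtered, "score")

# Compose the two mappings to trace each final row back to the original input.
lineage = [filter_map[i] for i in sort_map]
for out_idx, src_idx in enumerate(lineage):
    print(f"output row {out_idx} <- input row {src_idx}: {ordered[out_idx]}")
```

Returning an index mapping alongside each result is what lets the steps be chained: the per-step mappings compose into a lineage for the whole pipeline, which is the kind of relationship an instructor would otherwise draw by hand.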

Presented at Posit Conference, Sept 19-20, 2023. Learn more at posit.co/conference.

Talk Track: Teaching data science. Session Code: TALK-1096

webR 0.2: R Packages and Shiny for WebAssembly | George Stagg | Posit

WebR makes it possible to run R code in the browser without the need for an R server to execute the code: the R interpreter runs directly on the user’s machine. But just running R isn’t enough; you need the R packages you use every day too.

webR 0.2.0 makes many new packages available (10,324 packages - about 51% of CRAN!) and it’s now possible to run Shiny apps under webR, entirely client side.

George Stagg shares how to load packages with webR, find out which ones are available, and get started running Shiny apps in the web browser. There’s a demo webR Shiny app too!

- 00:15 Loading R packages with webR

- 01:50 Wasm system libraries available for use with webR

- 05:30 Tidyverse, tidymodels, geospatial data, and database packages available

- 08:00 Shiny and httpuv: running Shiny apps under webR

- 11:05 Example Shiny app running in the web browser

- 12:05 Links with where to learn more

Shiny webR demo app: https://shinylive.io/r/examples/

Website: https://docs.r-wasm.org/

webR REPL example: https://webr.r-wasm.org/latest/

Demo webR Shiny app in this video: https://shiny-standalone-webr-demo.netlify.app/

Source: https://github.com/georgestagg/shiny-standalone-webr-demo/

See the overview of what’s new in webR 0.2.0: https://youtu.be/Mpq9a6yMl_w

Bite-sized tricks for machine learning with tidymodels | Posit

The tidymodels framework is a collection of R packages for modeling and machine learning using tidyverse principles. This video highlights a number of tidymodels features that could improve your modeling workflows.

- 0:03 Switching modeling engines is easy

- 0:21 Never lose your tuning results

- 0:36 Built-in visualizations for modeling objects

- 1:03 Grouped resampling

- 1:16 Case weights

- 1:32 Select variables based on role and type

- 2:00 Spatial resampling

- 2:16 Keep your tidymodels objects small

Learn more at https://www.tidymodels.org/

How to train, evaluate, and deploy a machine learning workflow with tidymodels & Posit Team

Helpful resources:

- GitHub: https://github.com/simonpcouch/mutagen

- Follow-up Q&A Session: https://youtube.com/live/vwBVOBQfc_U

- If you want to book a call with our team to chat more about Posit products: pos.it/chat-with-us

- Don’t want to meet, but curious who else on your team is using Posit? pos.it/connect-us

- Blog post on tidymodels + Posit Connect: https://posit.co/blog/pharmaceutical-machine-learning-with-tidymodels-and-posit-connect/

- Tidy Modeling with R book: https://www.tmwr.org/

Timestamps:

- 1:44 - Three steps for developing a machine learning model

- 3:35 - What is a machine learning model?

- 7:02 - Overview of machine learning with Posit Team

- 7:36 - Step 1: Understand and clean data

- 11:05 - Step 2: Train and evaluate models (why you might be interested in using tidymodels)

- 23:02 - Step 3: Deploying a machine learning model from Posit Workbench to Posit Connect

- 30:14 - Summary

- 31:21 - Helpful resources

Machine learning models are all around us, from Netflix movie recommendations to Zillow property value estimates to email spam filters.

As these models play an increasingly large role in our personal and professional lives, understanding and embracing them has never been more important; machine learning helps us make better, data-driven decisions.

The tidymodels framework is a powerful set of tools for building—and getting value out of—machine learning models with R.

Data scientists use tidymodels to:

- Gain access to a wide variety of machine learning methods

- Guard against common mistakes

- Easily deploy models through tidymodels’ integration with vetiver

Join Simon Couch from the tidyverse team on Wednesday, October 25th at 11am ET as he walks through an end-to-end machine learning workflow with Posit Team.

No registration is required to attend - simply add it to your calendar using this link: pos.it/team-demo

Hadley Wickham @ Posit | Giving benefit to people using what you build | Data Science Hangout

We were recently joined by Hadley Wickham, Chief Scientist at Posit PBC. Listen in to hear our chat about building tools (like the tidyverse) to make data science easier, faster, and more fun.

36:57 - While I’m bought into developing open source packages to help deliver better processes, any advice to those of us doing that development in getting their company bought in?

You have to give some benefit to the people using (what you’re building)

You’ve got to either remove pain or add pleasure in some way because if you can’t do that and you’re not someone’s direct supervisor, it’s hard to get people to change.

The way I think about the tidyverse is, how do we give people some sort of quick wins so they can be motivated to do the things that are slower where they’re gonna have to learn some new ideas or some new tools. You kind of build up some equity with that person.

They build trust that you’ve helped them in the past and now they’re willing to invest a little bit more time before they see the payoff. But in the early days, it’s all about delivering payoffs as quickly as possible.

And I think if you’re doing, like, you know “my company’s first R package” - the easy pain points are: make themes for your company corporate style guide, make a ggplot2 theme, make an R Markdown, a Quarto theme. Make a Shiny theme that people can just use to get, you know, something that’s reasonably close to whatever your corporate style guide dictates.

That just feels like an easy win for people because it makes them look good inside the corporation and because you’ve put in all the hard work, it’s like three seconds for them to type the right function name to get the right theme.

I think the other bit is making it easier to get access to data. Set up some wrappers around DBI connections to the most important data sources. Provide some conventions around authentication so that stuff just works so that they’re not struggling with “What packages do I need to install? What’s the password? Where’s the path I need?” Just give them some, like, a list of the top ten most common data sources and people will love you by and large.

Follow-up question: Once you identify the things that you think would be useful for people - do you have a philosophy or a way in which you approach putting things together?

When you’re in an environment of scarcity when you’ve only got so much time that you can take out of your everyday job to invest in writing a package, it’s really tough to balance. Like, how do I add new stuff versus making sure the old stuff continues to work?

I think, again, some of it’s about building up trust. So, give people some wins so that when you inevitably break stuff, you’ve got some kind of cushion so people aren’t going to be really angry with you right away. They’re gonna be like, ok, well there’s a little bit of suffering now, but this person saved me so much time.

But yeah, it’s really hard. And particularly as you’re starting out, like, you’re going to make mistakes. That’s inevitable.

You’re going to do things that when you look back a year later, you’re like, why on earth did I do it that way? You’ll want to rip out the whole thing and write it from scratch. And I think that if it feels horrible, you have to remember, that’s great. It means you’ve grown immensely as a programmer.

Certainly if you have my kind of mindset, you have to resist the temptation to rip things out and redo them as much as possible and just focus on making the next generation better rather than breaking what stuff people already have.

So I don’t have any great answers here, but I think you just have to think about those tensions of “how do I keep my forward velocity up while getting better as a programmer and evolving over time, but also thinking about how do you make the things you did a long time ago better?”

Follow Us Here: Website: https://www.posit.co LinkedIn: https://www.linkedin.com/company/posit-software Twitter: https://twitter.com/posit_pbc

To join future data science hangouts, add to your calendar here: pos.it/dsh (All are welcome! We’d love to see you!)

Come hang out with us!

Teaching the tidyverse in 2023 | Mine Çetinkaya-Rundel

Recommendations for teaching the tidyverse in 2023, summarizing package updates most relevant for teaching data science with the tidyverse, particularly to new learners.

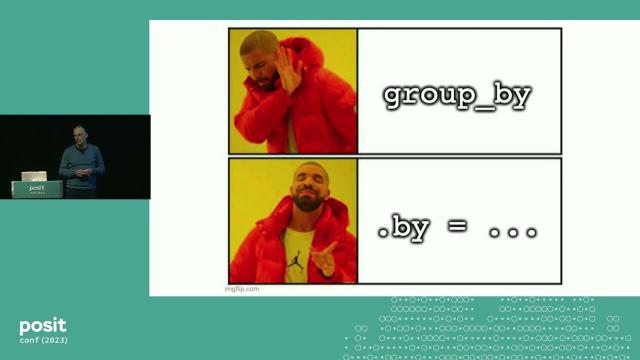

- 00:00 Introduction

- 00:46 Using addins to switch between RStudio themes (See https://github.com/mine-cetinkaya-rundel/addmins for more info)

- 01:40 Native pipe

- 03:08 Nine core packages in tidyverse 2.0.0

- 07:15 Conflict resolution in the tidyverse

- 11:30 Improved and expanded *_join() functionality

- 22:05 Per operation grouping

- 27:41 Quality of life improvements to case_when() and if_else()

- 31:41 New syntax for separating columns

- 34:51 New argument for line geoms: linewidth

- 36:08 Wrap up