vitals

Large language model evaluation for R

The vitals package provides a framework for evaluating large language model (LLM) applications built with ellmer in R. It helps developers measure and compare the performance, cost, and latency of LLM products like custom chat apps.

The package allows you to assess whether prompt changes or new tools improve your LLM application, compare different models’ effects on performance metrics, and identify problematic behaviors. It’s an R port of the Python Inspect framework and writes evaluation logs compatible with the Inspect log viewer, making it straightforward to transition between the two tools if needed. Evaluations are built from three components: datasets with input/target pairs, solvers that generate responses to inputs, and scorers that measure how well solver outputs match targets.
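The three components described above can be illustrated with a small, language-agnostic sketch. The Python below is not the vitals API (vitals is an R package built on ellmer). The names `Sample`, `stub_solver`, `exact_match_scorer`, and `evaluate` are hypothetical, and the solver is a canned lookup standing in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Sample:
    input: str   # prompt handed to the solver
    target: str  # reference answer the scorer compares against


def stub_solver(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: returns canned responses."""
    canned = {
        "What is 2 + 2?": "4",
        "What is the capital of France?": "Lyon",  # deliberately wrong
    }
    return canned.get(prompt, "")


def exact_match_scorer(output: str, target: str) -> bool:
    """Mark a response correct when it equals the target,
    ignoring case and surrounding whitespace."""
    return output.strip().lower() == target.strip().lower()


def evaluate(
    dataset: List[Sample],
    solver: Callable[[str], str],
    scorer: Callable[[str, str], bool],
) -> float:
    """Run the solver on every input, score each output, return accuracy."""
    if not dataset:
        raise ValueError("dataset must contain at least one sample")
    results = [scorer(solver(s.input), s.target) for s in dataset]
    return sum(results) / len(results)


dataset = [
    Sample("What is 2 + 2?", "4"),
    Sample("What is the capital of France?", "Paris"),
]
accuracy = evaluate(dataset, stub_solver, exact_match_scorer)
print(f"accuracy: {accuracy:.2f}")
```

In this sketch, swapping `stub_solver` for a function that calls a model, or replacing the exact-match scorer with a model-graded one, leaves `evaluate` unchanged. Keeping the pieces independent is what makes it possible to compare prompts, tools, or models on the same dataset.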

Resources featuring vitals

The mall package: using LLMs with data frames in R & Python | Edgar Ruiz | Data Science Lab

The Data Science Lab is a live weekly call. Register at pos.it/dslab! Discord invites go out each week on live calls. We’d love to have you!

The Lab is an open, messy space for learning and asking questions. Think of it like pair coding with a friend or two. Learn something new, and share what you know to help others grow.

On this call, Libby Heeren is joined by Edgar Ruiz as they walk through how mall works (with ellmer) in R, and then in Python. The mall package lets you use LLMs to process tabular data or vectors. For example, you can feed it a column of reviews and ask mall to use an Anthropic model via ellmer to add a column of summaries or sentiments. Follow along with the code here: https://github.com/LibbyHeeren/mall-package-r

Hosting crew from Posit: Libby Heeren, Isabella Velasquez, Edgar Ruiz

- Edgar’s Bluesky: https://bsky.app/profile/theotheredgar.bsky.social
- Edgar’s LinkedIn: https://www.linkedin.com/in/edgararuiz/
- Edgar’s GitHub: https://github.com/edgararuiz

Resources from the hosts and chat:

- Ollama → https://ollama.com/download
- Posit Data Science Lab → https://posit.co/dslab
- mall package → https://mlverse.github.io/mall/
- ellmer package → https://ellmer.tidyverse.org/
- Libby’s Positron theme (Catppuccin) → https://marketplace.visualstudio.com/items?itemName=Catppuccin.catppuccin-vsc
- GitHub repo with Libby and Edgar’s code → https://github.com/LibbyHeeren/mall-package-r
- LLM providers supported by ellmer → https://ellmer.tidyverse.org/index.html#providers
- vitals package → https://vitals.tidyverse.org/
- chatlas package → https://posit-dev.github.io/chatlas/
- polars package → https://pola.rs/
- narwhals package → https://narwhals-dev.github.io/narwhals/
- pandas package → https://pandas.pydata.org/
- LM Studio → https://lmstudio.ai/
- Simon Couch’s blog → https://www.simonpcouch.com/
- Edgar’s dataset: TidyTuesday Animal Crossing Dataset (May 5, 2020) → https://github.com/rfordatascience/tidytuesday
- Libby’s dataset: Kaggle Tweets Dataset → https://www.kaggle.com/datasets/mmmarchetti/tweets-dataset
- Blog from Sara and Simon on evaluating LLMs → https://posit.co/blog/r-llm-evaluation-03/
- Data Science Lab YouTube playlist → https://www.youtube.com/watch?v=LDHGENv1NP4&list=PL9HYL-VRX0oSeWeMEGQt0id7adYQXebhT&index=2
- AWS Bedrock → https://aws.amazon.com/bedrock/
- Anthropic → https://www.anthropic.com/
- Google Gemini → https://gemini.google.com/
- What is rubber duck debugging anyway?? → https://en.wikipedia.org/wiki/Rubber_duck_debugging

Thanks for learning with us!

Timestamps

- 00:00 Introduction to Libby, Isabella, Edgar, and the mall package + ellmer package
- 07:14 “What’s the difference between using mall for these NLP tasks versus traditional or classical NLP?”
- 09:37 “Can mall be used with a local LLM?”
- 17:32 “What kind of laptop specs should I realistically have to make good use of these models?”
- 22:12 “Are you limited to three output options?”
- 22:55 “Can mall return the prediction probabilities?”
- 24:14 “What are a rule of thumb set of specs for a machine so local LLMs are practically feasible?”
- 24:47 “Would that be in the additional prompt area where you’re defining things?”
- 25:04 “You could use the vitals package to compare models, right?”
- 25:24 “Can we use LM Studio instead of Ollama?”
- 28:35 “How do you iterate and validate the model?”
- 36:39 “Why use paste if it is all text?”
- 37:31 “Are these recent tweets (from X) or older ones from actual Twitter?”
- 40:23 “Is there a playlist for the Data Science Labs on YouTube?”
- 46:11 “Does that mean that the python version does not work with pandas?”
- 50:14 “Where is this data set from?”

Simon Couch - Practical AI for data science

Abstract: While most discourse about AI focuses on glamorous, ungrounded applications, data scientists spend most of their days tackling unglamorous problems in sensitive data. Integrated thoughtfully, LLMs are quite useful in practice for all sorts of everyday data science tasks, even when restricted to secure deployments that protect proprietary information. At Posit, our work on ellmer and related R packages has focused on enabling these practical uses. This talk will outline three practical AI use cases (structured data extraction, tool calling, and coding) and offer guidance on getting started with LLMs when your data and code are confidential.

Presented at the 2025 R/Pharma Conference Europe/US Track.

Resources mentioned in the presentation:

- {vitals}: Large Language Model Evaluations https://vitals.tidyverse.org/

- {mcptools}: Model Context Protocol for R https://posit-dev.github.io/mcptools/

- {btw}: A complete toolkit for connecting R and LLMs https://posit-dev.github.io/btw/

- {gander}: High-performance, low-friction Large Language Model chat for data scientists https://simonpcouch.github.io/gander/

- {chores}: A collection of large language model assistants https://simonpcouch.github.io/chores/

- {predictive}: A frontend for predictive modeling with tidymodels https://github.com/simonpcouch/predictive

- {kapa}: RAG-based search via the kapa.ai API https://github.com/simonpcouch/kapa

- Databot https://positron.posit.co/dat

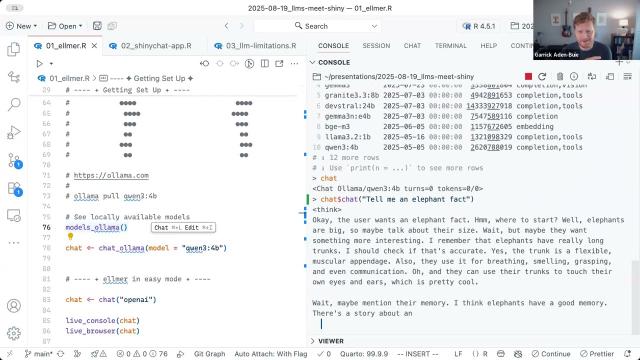

Building the Future of Data Apps: LLMs Meet Shiny

GenAI in Pharma 2025 kicks off with Posit’s Phil Bowsher and Garrick Aden-Buie sharing a technical overview of how LLMs can integrate with Shiny applications and much more!

Abstract: When we think of LLMs (large language models), usually what comes to mind are general purpose chatbots like ChatGPT or code assistants like GitHub Copilot. But as useful as ChatGPT and Copilot are, LLMs have so much more to offer—if you know how to code. In this demo Garrick will explain LLM APIs from zero, and have you building and deploying custom LLM-empowered data workflows and apps in no time.

Resources mentioned in the session:

- GitHub Repository for session: https://github.com/gadenbuie/genAI-2025-llms-meet-shiny

- {mcptools} - Model Context Protocol servers and clients https://posit-dev.github.io/mcptools/

- {vitals} - Large language model evaluation for R https://vitals.tidyverse.org/